Columbia engineers develop new AI technology that amplifies correct speaker from a group; breakthrough could lead to better hearing aids.

Our brains have a remarkable knack for picking out individual voices in a noisy environment, like a crowded coffee shop or a busy city street. This is something that even the most advanced hearing aids struggle to do. But now Columbia engineers are announcing an experimental technology that mimics the brain’s natural aptitude for detecting and amplifying any one voice from many. Powered by artificial intelligence, this brain-controlled hearing aid acts as an automatic filter, monitoring wearers’ brain waves and boosting the voice they want to focus on.

Though still in early stages of development, the technology is a significant step toward better hearing aids that would enable wearers to converse with the people around them seamlessly and efficiently. This achievement is described today in Science Advances.

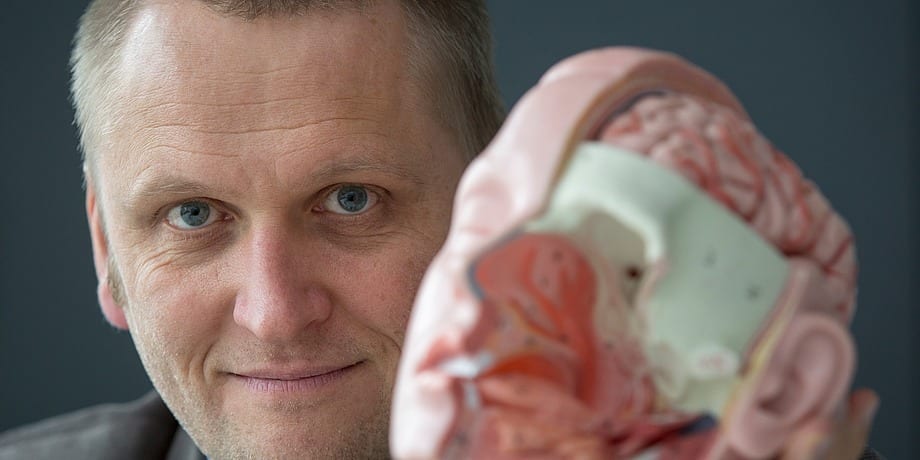

“The brain area that processes sound is extraordinarily sensitive and powerful; it can amplify one voice over others, seemingly effortlessly, while today’s hearing aids still pale in comparison,” said Nima Mesgarani, PhD, a principal investigator at Columbia’s Mortimer B. Zuckerman Mind Brain Behavior Institute and the paper’s senior author. “By creating a device that harnesses the power of the brain itself, we hope our work will lead to technological improvements that enable the hundreds of millions of hearing-impaired people worldwide to communicate just as easily as their friends and family do.”

Modern hearing aids are excellent at amplifying speech while suppressing certain types of background noise, such as traffic. But they struggle to boost the volume of an individual voice over others. Scientists call this the cocktail party problem, named after the cacophony of voices that blend together during loud parties.

“In crowded places, like parties, hearing aids tend to amplify all speakers at once,” said Dr. Mesgarani, who is also an associate professor of electrical engineering at Columbia Engineering. “This severely hinders a wearer’s ability to converse effectively, essentially isolating them from the people around them.”

The Columbia team’s brain-controlled hearing aid is different. Instead of relying solely on external sound-amplifiers, like microphones, it also monitors the listener’s own brain waves.

“Previously, we had discovered that when two people talk to each other, the brain waves of the speaker begin to resemble the brain waves of the listener,” said Dr. Mesgarani.

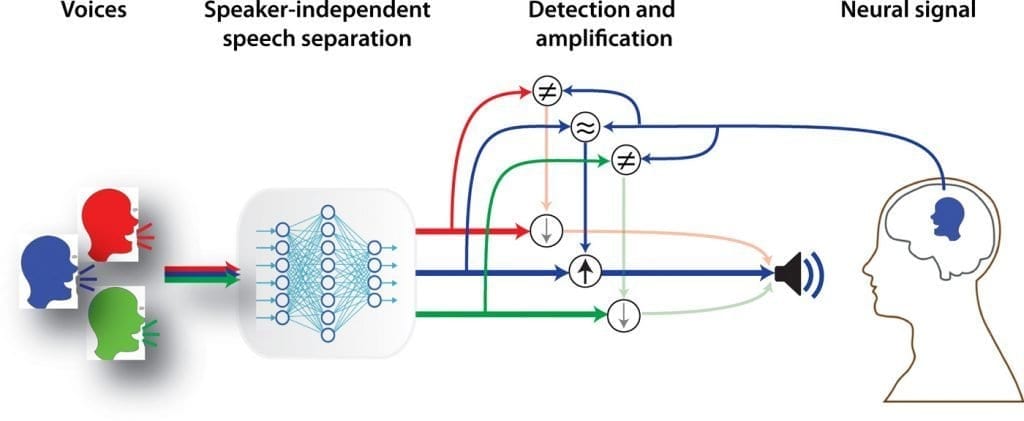

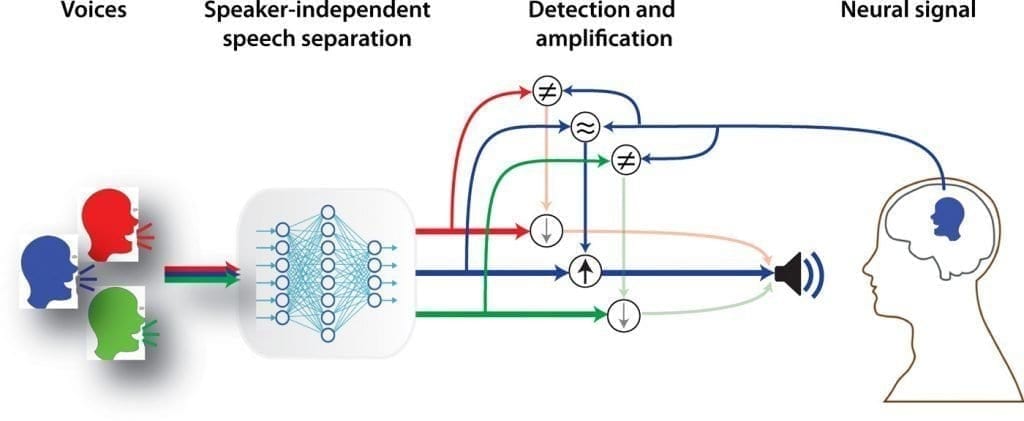

Using this knowledge, the team combined powerful speech-separation algorithms with neural networks, complex mathematical models that imitate the brain’s natural computational abilities. They created a system that first separates out the voices of individual speakers from a group, and then compares the voice of each speaker to the brain waves of the person listening. The speaker whose voice pattern most closely matches the listener’s brain waves is then amplified over the rest.
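The compare-and-amplify step can be pictured with a short Python sketch. It is a minimal illustration under stated assumptions, not the researchers' implementation: it assumes the separation stage has already produced one loudness envelope per speaker and that a decoder has already reconstructed an envelope from the listener's brain activity. The function names (`pearson`, `pick_attended`, `remix`), the use of Pearson correlation as the matching score, and the gain values are all hypothetical choices made for this example.

```python
import math


def pearson(x, y):
    """Pearson correlation between two equal-length sequences of numbers."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        return 0.0  # a flat signal carries no pattern to match
    return cov / math.sqrt(var_x * var_y)


def pick_attended(speaker_envelopes, neural_envelope):
    """Return the index of the speaker whose envelope best matches the neural one."""
    scores = [pearson(env, neural_envelope) for env in speaker_envelopes]
    return scores.index(max(scores))


def remix(speaker_signals, attended, boost=2.0, cut=0.5):
    """Sum the separated signals, boosting the attended speaker (assumed gains)."""
    mix = [0.0] * len(speaker_signals[0])
    for i, signal in enumerate(speaker_signals):
        gain = boost if i == attended else cut
        for t, sample in enumerate(signal):
            mix[t] += gain * sample
    return mix


# Toy data: the decoded neural envelope rises and falls with speaker A.
speaker_a = [0.1, 0.9, 0.2, 0.8, 0.1, 0.7]
speaker_b = [0.8, 0.1, 0.9, 0.2, 0.7, 0.1]
neural = [0.2, 0.8, 0.3, 0.7, 0.2, 0.6]

attended = pick_attended([speaker_a, speaker_b], neural)
output = remix([speaker_a, speaker_b], attended)
```

In this toy case the decoded envelope tracks speaker A, so index 0 is selected and that stream is boosted in the remix while the other is turned down rather than removed.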

The researchers published an earlier version of this system in 2017 that, while promising, had a key limitation: It had to be pretrained to recognize specific speakers.

“If you’re in a restaurant with your family, that device would recognize and decode those voices for you,” explained Dr. Mesgarani. “But as soon as a new person, such as the waiter, arrived, the system would fail.”

Today’s advance largely solves that issue. With funding from Columbia Technology Ventures to improve their original algorithm, Dr. Mesgarani and first authors Cong Han and James O’Sullivan, PhD, again harnessed the power of deep neural networks to build a more sophisticated model that could be generalized to any potential speaker that the listener encountered.

This technology works by mimicking what the brain would normally do. First, the device automatically separates out multiple speakers into separate streams, and then compares each speaker with the neural data from the user’s brain. The speaker that best matches a user’s neural data is then amplified above the others (Credit: Nima Mesgarani/Columbia’s Zuckerman Institute).

“Our end result was a speech-separation algorithm that performed similarly to previous versions but with an important improvement,” said Dr. Mesgarani. “It could recognize and decode a voice — any voice — right off the bat.”

To test the algorithm’s effectiveness, the researchers teamed up with Ashesh Dinesh Mehta, MD, PhD, a neurosurgeon at the Northwell Health Institute for Neurology and Neurosurgery and coauthor of today’s paper. Dr. Mehta treats epilepsy patients, some of whom must undergo regular surgeries.

“These patients volunteered to listen to different speakers while we monitored their brain waves directly via electrodes implanted in the patients’ brains,” said Dr. Mesgarani. “We then applied the newly developed algorithm to that data.”

The team’s algorithm tracked the patients’ attention as they listened to different speakers that they had not previously heard. When a patient focused on one speaker, the system automatically amplified that voice. When their attention shifted to a different speaker, the volume levels changed to reflect that shift.
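One way to picture this tracking is as the same comparison repeated over short, consecutive stretches of time. The Python sketch below is a hypothetical illustration, not the team's algorithm: it assumes per-speaker envelopes and a neurally decoded envelope are already available, and the names `pearson` and `track_attention`, the correlation score, and the window length are all assumptions made for the example.

```python
import math


def pearson(x, y):
    """Pearson correlation between two equal-length sequences of numbers."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        return 0.0  # a flat window carries no pattern to match
    return cov / math.sqrt(var_x * var_y)


def track_attention(speaker_envelopes, neural_envelope, window):
    """Return, for each full window, the index of the best-matching speaker.

    Samples left over after the last full window are ignored.
    """
    choices = []
    for start in range(0, len(neural_envelope) - window + 1, window):
        stop = start + window
        neural_chunk = neural_envelope[start:stop]
        scores = [pearson(env[start:stop], neural_chunk)
                  for env in speaker_envelopes]
        choices.append(scores.index(max(scores)))
    return choices


# Toy data: the listener follows speaker A for four samples, then speaker B.
speaker_a = [0, 1, 0, 1, 1, 1, 0, 0]
speaker_b = [1, 1, 0, 0, 0, 1, 0, 1]
neural = [0, 1, 0, 1, 0, 1, 0, 1]

timeline = track_attention([speaker_a, speaker_b], neural, window=4)
```

Each entry in `timeline` names the speaker to boost during that window, so a change from one entry to the next corresponds to the volume shift described above. A practical system would likely smooth these decisions to avoid abrupt jumps; that step is left out here.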

Encouraged by their results, the researchers are now investigating how to transform this prototype into a noninvasive device that can be placed externally on the scalp or around the ear. They also hope to further improve and refine the algorithm so that it can function in a broader range of environments.

“So far, we’ve only tested it in an indoor environment,” said Dr. Mesgarani. “But we want to ensure that it can work just as well on a busy city street or a noisy restaurant, so that wherever wearers go, they can fully experience the world and people around them.”
