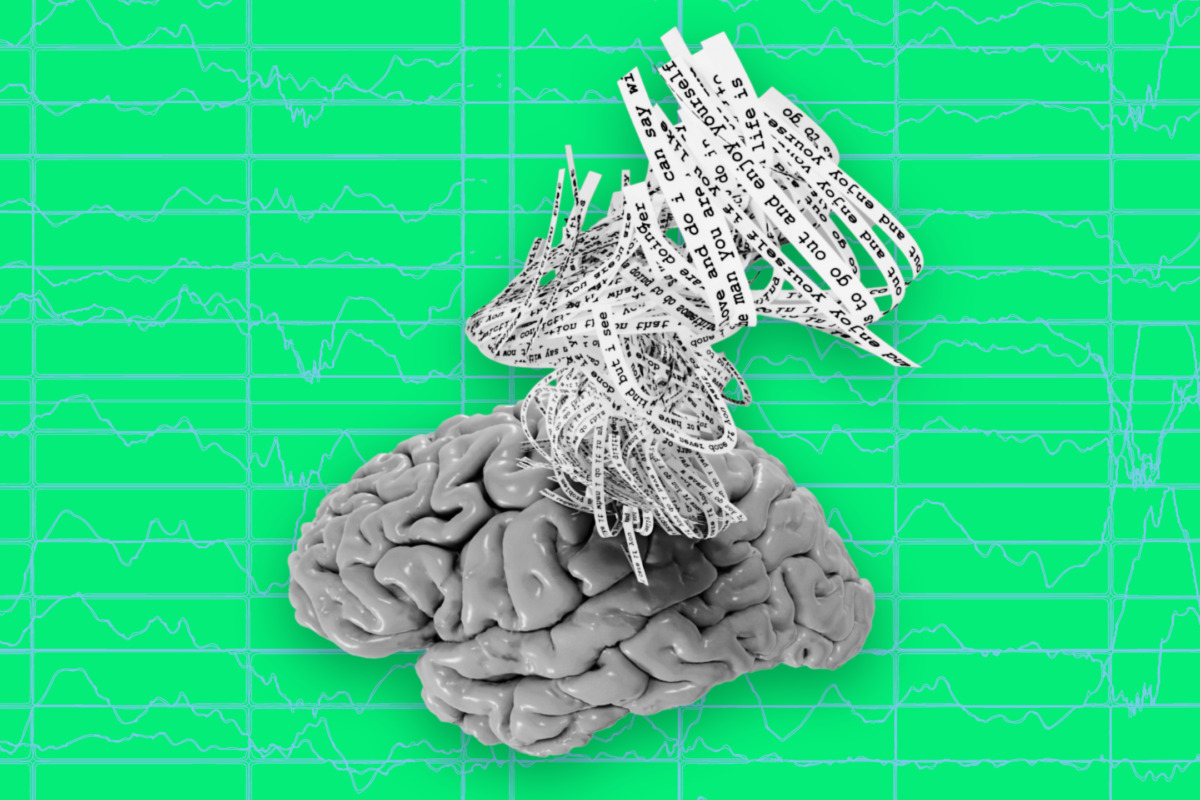

A new artificial intelligence system called a semantic decoder can translate a person’s brain activity — while listening to a story or silently imagining telling a story — into a continuous stream of text.

Illustration credit: Jerry Tang/Martha Morales/The University of Texas at Austin.


The system developed by researchers at The University of Texas at Austin might help people who are mentally conscious yet unable to physically speak, such as those debilitated by strokes, to communicate intelligibly again.

The study, published in the journal Nature Neuroscience, was led by Jerry Tang, a doctoral student in computer science, and Alex Huth, an assistant professor of neuroscience and computer science at UT Austin. The work relies in part on a transformer model, similar to the ones that power OpenAI’s ChatGPT and Google’s Bard.

Unlike other language decoding systems in development, this system does not require subjects to have surgical implants, making the process noninvasive. Participants also are not restricted to words from a prescribed list. Brain activity is measured with an fMRI scanner, and the decoder must first be trained extensively on each individual, who listens to hours of podcasts while in the scanner. Later, provided that the participant is open to having their thoughts decoded, the machine can generate corresponding text from brain activity alone while the participant listens to a new story or imagines telling one.

“For a noninvasive method, this is a real leap forward compared to what’s been done before, which is typically single words or short sentences,” Huth said. “We’re getting the model to decode continuous language for extended periods of time with complicated ideas.”
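The article does not describe how the decoder turns brain activity into text. Purely as an illustration, the Python sketch below assumes a generic two-step scheme: a linear (ridge regression) "encoding model" is fitted on training data to predict each voxel's response from features of the words heard, and decoding then picks whichever candidate phrasing best predicts a new, observed response. Everything here is hypothetical: the toy vocabulary, the random word vectors standing in for transformer-derived features, the simulated "brain" responses, and the names `features`, `fit_encoding_model` and `decode`. A real system would need to generate candidate text rather than choose from a fixed list.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical toy vocabulary with random vectors standing in for the
# semantic features a real system would take from a language model.
VOCAB = ["i", "she", "have", "not", "license", "driver", "learn", "drive",
         "scream", "cry", "run", "away", "leave", "me", "alone", "yet"]
DIM, N_VOXELS = 8, 50
WORD_VECS = {w: rng.normal(size=DIM) for w in VOCAB}


def features(sentence: str) -> np.ndarray:
    """Average the vectors of known words; unknown words are ignored."""
    vecs = [WORD_VECS[w] for w in sentence.lower().split() if w in WORD_VECS]
    return np.mean(vecs, axis=0) if vecs else np.zeros(DIM)


def fit_encoding_model(X: np.ndarray, Y: np.ndarray, alpha: float = 1.0) -> np.ndarray:
    """Ridge regression: weights mapping features X (n, d) to responses Y (n, v)."""
    d = X.shape[1]
    return np.linalg.solve(X.T @ X + alpha * np.eye(d), X.T @ Y)


def decode(observed: np.ndarray, candidates: list[str], W: np.ndarray) -> str:
    """Return the candidate whose predicted response is closest to `observed`."""
    errors = [np.sum((features(c) @ W - observed) ** 2) for c in candidates]
    return candidates[int(np.argmin(errors))]


# Simulated participant (assumption): a hidden linear map from features to voxels.
W_TRUE = rng.normal(size=(DIM, N_VOXELS))


def simulate_response(sentence: str, noise: float = 0.1) -> np.ndarray:
    """Fake fMRI response: true linear response plus Gaussian noise."""
    return features(sentence) @ W_TRUE + noise * rng.normal(size=N_VOXELS)


# "Training": hours of podcast listening, reduced here to 200 random word strings.
train = [" ".join(rng.choice(VOCAB, size=5)) for _ in range(200)]
X = np.stack([features(s) for s in train])
Y = np.stack([simulate_response(s) for s in train])
W = fit_encoding_model(X, Y)

# "Decoding": score a few candidate phrasings against a new observation.
candidates = ["she has not learned to drive yet",
              "scream cry run away",
              "leave me alone"]
observed = simulate_response("leave me alone")
print(decode(observed, candidates, W))
```

Because candidates are compared through averaged word features rather than exact word sequences, this toy matches meaning-level content and ignores word order, loosely echoing the gist-level output the researchers report. The fitted weights `W` also describe only the one simulated participant.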

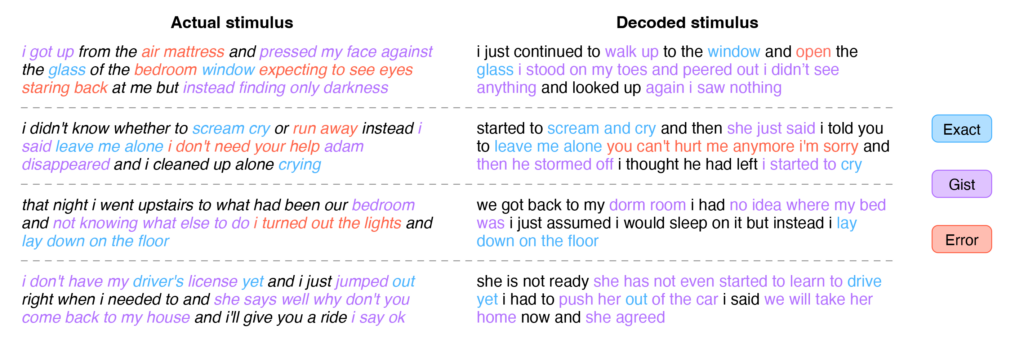

The result is not a word-for-word transcript. Instead, researchers designed it to capture the gist of what is being said or thought, albeit imperfectly. About half the time, when the decoder has been trained to monitor a participant’s brain activity, the machine produces text that closely (and sometimes precisely) matches the intended meanings of the original words.

For example, in experiments, a participant listening to a speaker say, “I don’t have my driver’s license yet” had their thoughts translated as, “She has not even started to learn to drive yet.” Listening to the words, “I didn’t know whether to scream, cry or run away. Instead, I said, ‘Leave me alone!’” was decoded as, “Started to scream and cry, and then she just said, ‘I told you to leave me alone.’”

Beginning with an earlier version of the paper that appeared as a preprint online, the researchers addressed questions about potential misuse of the technology. The paper describes how decoding worked only with cooperative participants who had participated willingly in training the decoder. Results for individuals on whom the decoder had not been trained were unintelligible, and if participants on whom the decoder had been trained later put up resistance — for example, by thinking other thoughts — results were similarly unusable.

“We take very seriously the concerns that it could be used for bad purposes and have worked to avoid that,” Tang said. “We want to make sure people only use these types of technologies when they want to and that it helps them.”

In addition to having participants listen to or think about stories, the researchers asked subjects to watch four short, silent videos while in the scanner. The semantic decoder was able to use their brain activity to accurately describe certain events from the videos.

The system currently is not practical for use outside of the laboratory because it requires time in an fMRI machine. But the researchers think this work could transfer to other, more portable brain-imaging systems, such as functional near-infrared spectroscopy (fNIRS).

“fNIRS measures where there’s more or less blood flow in the brain at different points in time, which, it turns out, is exactly the same kind of signal that fMRI is measuring,” Huth said. “So, our exact kind of approach should translate to fNIRS,” although, he noted, the resolution with fNIRS would be lower.

Original Article: Brain Activity Decoder Can Reveal Stories in People’s Minds
