Scientists like to think of science as self-correcting. To an alarming degree, it is not.

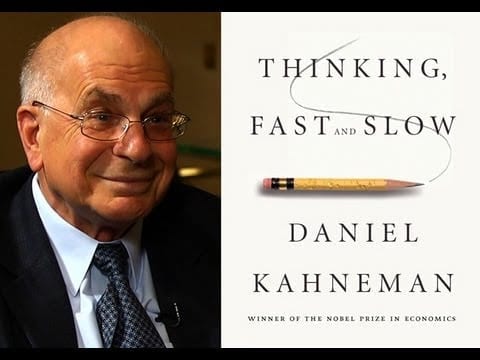

“I see a train wreck looming,” warned Daniel Kahneman, an eminent psychologist, in an open letter last year. The premonition concerned research on a phenomenon known as “priming”. Priming studies suggest that decisions can be influenced by apparently irrelevant actions or events that took place just before the cusp of choice. They have been a boom area in psychology over the past decade, and some of their insights have already made it out of the lab and into the toolkits of policy wonks keen on “nudging” the populace.

Dr Kahneman and a growing number of his colleagues fear that a lot of this priming research is poorly founded. Over the past few years various researchers have made systematic attempts to replicate some of the more widely cited priming experiments. Many of these replications have failed. In April, for instance, a paper in PLoS ONE, a journal, reported that nine separate experiments had not managed to reproduce the results of a famous study from 1998 purporting to show that thinking about a professor before taking an intelligence test leads to a higher score than imagining a football hooligan.

The idea that the same experiments always get the same results, no matter who performs them, is one of the cornerstones of science’s claim to objective truth. If a systematic campaign of replication does not lead to the same results, then either the original research is flawed (as the replicators claim) or the replications are (as many of the original researchers on priming contend). Either way, something is awry.

To err is all too common

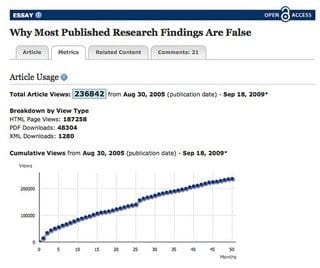

It is tempting to see the priming fracas as an isolated case in an area of science—psychology—easily marginalised as soft and wayward. But irreproducibility is much more widespread. A few years ago scientists at Amgen, an American drug company, tried to replicate 53 studies that they considered landmarks in the basic science of cancer, often co-operating closely with the original researchers to ensure that their experimental technique matched the one used first time round. According to a piece they wrote last year in Nature, a leading scientific journal, they were able to reproduce the original results in just six. Months earlier Florian Prinz and his colleagues at Bayer HealthCare, a German pharmaceutical giant, reported in Nature Reviews Drug Discovery, a sister journal, that they had successfully reproduced the published results in just a quarter of 67 seminal studies.

The governments of the OECD, a club of mostly rich countries, spent $59 billion on biomedical research in 2012, nearly double the figure in 2000. One of the justifications for this is that basic-science results provided by governments form the basis for private drug-development work. If companies cannot rely on academic research, that reasoning breaks down. When an official at America’s National Institutes of Health (NIH) reckons, despairingly, that researchers would find it hard to reproduce at least three-quarters of all published biomedical findings, the public part of the process seems to have failed.

Academic scientists readily acknowledge that they often get things wrong. But they also hold fast to the idea that these errors get corrected over time as other scientists try to take the work further. Evidence that many more dodgy results are published than are subsequently corrected or withdrawn calls that much-vaunted capacity for self-correction into question. There are errors in a lot more of the scientific papers being published, written about and acted on than anyone would normally suppose, or like to think.

Various factors contribute to the problem. Statistical mistakes are widespread. The peer reviewers who evaluate papers before journals commit to publishing them are much worse at spotting mistakes than they or others appreciate. Professional pressure, competition and ambition push scientists to publish more quickly than would be wise. A career structure which lays great stress on publishing copious papers exacerbates all these problems. “There is no cost to getting things wrong,” says Brian Nosek, a psychologist at the University of Virginia who has taken an interest in his discipline’s persistent errors. “The cost is not getting them published.”

First, the statistics, which, if perhaps off-putting, are quite crucial.
