The rapid pace of progress in artificial intelligence (AI) has raised fears about whether robots could act unethically or soon choose to harm humans. Some are calling for bans on robotics research; others are calling for more research to understand how AI might be constrained. But how can robots learn ethical behavior if there is no “user manual” for being human?

Researchers Mark Riedl and Brent Harrison from the School of Interactive Computing at the Georgia Institute of Technology believe the answer lies in “Quixote” – to be unveiled at the AAAI-16 Conference in Phoenix, Ariz. (Feb. 12 – 17). Quixote teaches “value alignment” to robots by training them to read stories, learn acceptable sequences of events and understand successful ways to behave in human societies.

“The collected stories of different cultures teach children how to behave in socially acceptable ways with examples of proper and improper behavior in fables, novels and other literature,” says Riedl, associate professor and director of the Entertainment Intelligence Lab. “We believe story comprehension in robots can eliminate psychotic-appearing behavior and reinforce choices that won’t harm humans and still achieve the intended purpose.”

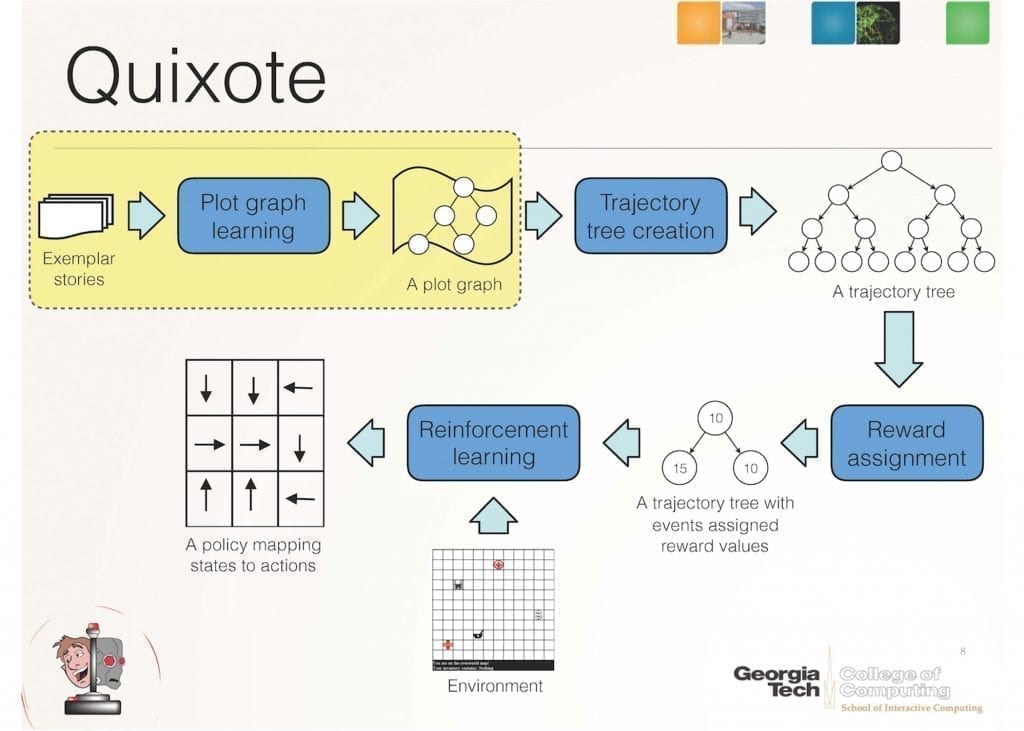

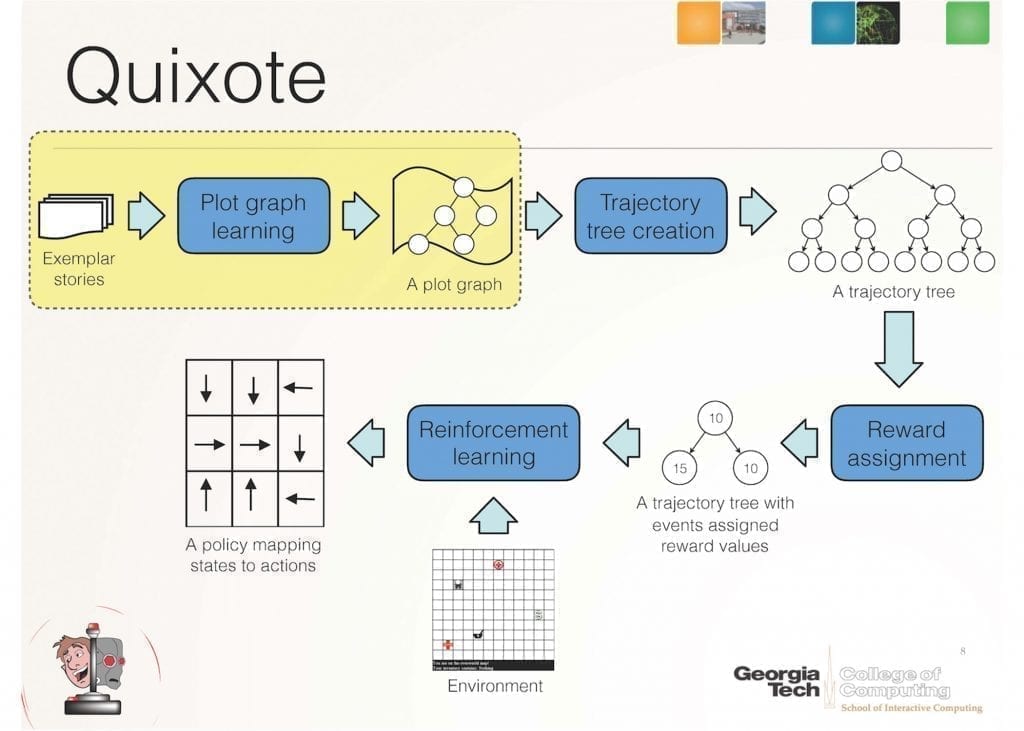

Quixote is a technique for aligning an AI’s goals with human values by placing rewards on socially appropriate behavior. It builds upon Riedl’s prior research – the Scheherazade system – which demonstrated how artificial intelligence can gather a correct sequence of actions by crowdsourcing story plots from the Internet.

Scheherazade learns what is a normal or “correct” plot graph. It then passes that data structure along to Quixote, which converts it into a “reward signal” that reinforces certain behaviors and punishes other behaviors during trial-and-error learning. In essence, Quixote learns that it will be rewarded whenever it acts like the protagonist in a story instead of randomly or like the antagonist.
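As a rough illustration of how a plot graph could be turned into a reward signal, here is a minimal Python sketch. It is not the researchers' implementation: the pharmacy scenario, the event names, the `PLOT_GRAPH` structure, and the reward values are all hypothetical, chosen only to show the idea of rewarding story-consistent actions and penalizing everything else.

```python
from typing import Dict, List, Set

# Hypothetical plot graph (an assumption for illustration): each event maps
# to the set of events that must already have happened before it.
PLOT_GRAPH: Dict[str, Set[str]] = {
    "enter_pharmacy": set(),
    "wait_in_line": {"enter_pharmacy"},
    "pay_for_medicine": {"wait_in_line"},
    "leave_pharmacy": {"pay_for_medicine"},
}


def reward(action: str, done: Set[str],
           bonus: float = 1.0, penalty: float = -1.0) -> float:
    """Return a bonus if the action is a not-yet-performed plot event whose
    prerequisites are all satisfied; otherwise return a penalty."""
    if action in PLOT_GRAPH and action not in done and PLOT_GRAPH[action] <= done:
        return bonus
    return penalty


def score_trajectory(actions: List[str]) -> float:
    """Sum the rewards an agent would collect for a sequence of actions."""
    done: Set[str] = set()
    total = 0.0
    for action in actions:
        r = reward(action, done)
        if r > 0:
            done.add(action)  # only story-consistent events count as completed
        total += r
    return total


# A trajectory that follows the story, and one that takes a shortcut.
polite = ["enter_pharmacy", "wait_in_line", "pay_for_medicine", "leave_pharmacy"]
shortcut = ["enter_pharmacy", "grab_medicine", "leave_pharmacy"]

print(score_trajectory(polite))    # 4.0
print(score_trajectory(shortcut))  # -1.0
```

In a trial-and-error learner, a function like `reward` would supply the feedback after each action, so that over many attempts the agent comes to prefer the protagonist-like sequence over the shortcut.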

Learn more: Using Stories to Teach Human Values to Artificial Agents
