via Army Research Laboratory

Army researchers developed a reinforcement learning approach that will allow swarms of unmanned aerial and ground vehicles to optimally accomplish various missions while minimizing performance uncertainty.

Swarming is a method of operations where multiple autonomous systems act as a cohesive unit by actively coordinating their actions.

Army researchers said future multi-domain battles will require swarms of dynamically coupled, coordinated heterogeneous mobile platforms to overmatch enemy capabilities and threats targeting U.S. forces.

The Army is looking to swarming technology to be able to execute time-consuming or dangerous tasks, said Dr. Jemin George of the U.S. Army Combat Capabilities Development Command’s Army Research Laboratory.

“Finding optimal guidance policies for these swarming vehicles in real-time is a key requirement for enhancing warfighters’ tactical situational awareness, allowing the U.S. Army to dominate in a contested environment,” George said.

Reinforcement learning provides a way to optimally control uncertain agents to achieve multi-objective goals when the precise model for the agent is unavailable; however, the existing reinforcement learning schemes can only be applied in a centralized manner, which requires pooling the state information of the entire swarm at a central learner. This drastically increases the computational complexity and communication requirements, resulting in unreasonable learning time, George said.

In order to solve this issue, in collaboration with Prof. Aranya Chakrabortty from North Carolina State University and Prof. He Bai from Oklahoma State University, George created a research effort to tackle the large-scale, multi-agent reinforcement learning problem. The Army funded this effort through the Director’s Research Award for External Collaborative Initiative, a laboratory program to stimulate and support new and innovative research in collaboration with external partners.

The main goal of this effort is to develop a theoretical foundation for data-driven optimal control for large-scale swarm networks, where control actions will be taken based on low-dimensional measurement data instead of dynamic models.

The current approach is called Hierarchical Reinforcement Learning, or HRL, and it decomposes the global control objective into multiple hierarchies – namely, multiple small group-level microscopic control, and a broad swarm-level macroscopic control.

“Each hierarchy has its own learning loop with respective local and global reward functions,” George said. “We were able to significantly reduce the learning time by running these learning loops in parallel.”

According to George, online reinforcement learning control of swarm boils down to solving a large-scale algebraic matrix Riccati equation using system, or swarm, input-output data.

The researchers’ initial approach for solving this large-scale matrix Riccati equation was to divide the swarm into multiple smaller groups and implement group-level local reinforcement learning in parallel while executing a global reinforcement learning on a smaller dimensional compressed state from each group.

Their current HRL scheme uses a decoupling mechanism that allows the team to hierarchically approximate a solution to the large-scale matrix equation by first solving the local reinforcement learning problem and then synthesizing the global control from local controllers (by solving a least squares problem) instead of running a global reinforcement learning on the aggregated state. This further reduces the learning time.

Experiments have shown that compared to a centralized approach, HRL was able to reduce the learning time by 80% while limiting the optimality loss to 5%.

“Our current HRL efforts will allow us to develop control policies for swarms of unmanned aerial and ground vehicles so that they can optimally accomplish different mission sets even though the individual dynamics for the swarming agents are unknown,” George said.

George stated that he is confident that this research will be impactful on the future battlefield, and has been made possible by the innovative collaboration that has taken place.

“The core purpose of the ARL science and technology community is to create and exploit scientific knowledge for transformational overmatch,” George said. “By engaging external research through ECI and other cooperative mechanisms, we hope to conduct disruptive foundational research that will lead to Army modernization while serving as Army’s primary collaborative link to the world-wide scientific community.”

The team is currently working to further improve their HRL control scheme by considering optimal grouping of agents in the swarm to minimize computation and communication complexity while limiting the optimality gap.

They are also investigating the use of deep recurrent neural networks to learn and predict the best grouping patterns and the application of developed techniques for optimal coordination of autonomous air and ground vehicles in Multi-Domain Operations in dense urban terrain.

The Latest Updates from Bing News & Google News

Go deeper with Bing News on:

Drone swarms

- Tesseract will build warfighting drones for military client

An Overland Park tech company continues its rapid growth with a military contract to develop a compact warfighting drone.

- UVify Sets New Guinness World Record With 5,293 IFO Drones in Spectacular Aerial Display

UVify, the leader in swarm drone innovation, has achieved a new Guinness World Record for the most unmanned aerial vehicles (UAVs) airborne simultaneously. This historic event took place in Songdo, Ko ...

- This video flies through the Army’s first small drone obstacle course

Soldiers are competing in the Army’s first small drone competition with obstacles modeled off the Ukraine war and threats in the Middle East.

- Insight: Sea drone warfare has arrived. The U.S. is floundering.

The U.S. Navy's efforts to build a fleet of unmanned vessels are faltering because the Pentagon remains wedded to big shipbuilding projects, according to some officials and company executives, ...

- From AI to drone swarms, here’s some of what Air Force Academy Cadets will study in new Cyber Innovation Center

The building will help cadets learn the technology of the future, ‘because our adversaries are doing the same thing.’ ...

Go deeper with Google Headlines on:

Drone swarms

[google_news title=”” keyword=”drone swarms” num_posts=”5″ blurb_length=”0″ show_thumb=”left”]

Go deeper with Bing News on:

Swarming technology

- Tesseract will build warfighting drones for military client

An Overland Park tech company continues its rapid growth with a military contract to develop a compact warfighting drone.

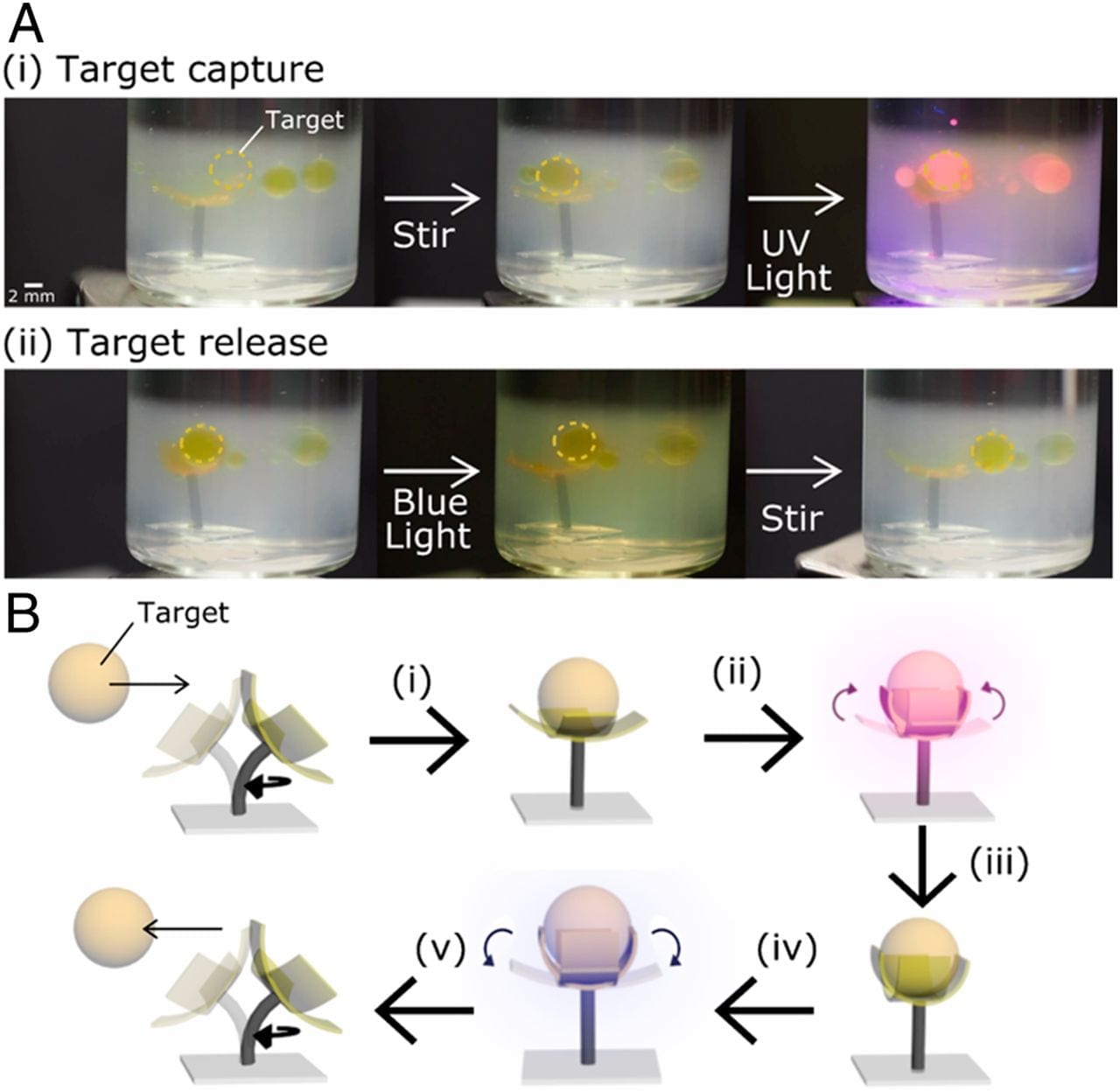

- Swarm of tiny snail robots stick together to form new structures

Researchers have built a swarm of miniature, snail-inspired robots, minus all the mucus. Instead, a retractable suction cup works in tandem with the remote-controlled machine’s tank-like treads to ...

- Microrobots Swarm the Seas, Capturing Microplastics and Bacteria [Video]

Researchers have developed microrobots capable of removing microplastics and bacteria from water, addressing the dual threat of pollution and disease spread in aquatic environments. When old food ...

- Swarm Intelligence Market Overview: Industry Landscape and Outlook by 2032

Report Ocean published the latest research report on the Swarm Intelligence market. In order to comprehend a market holistically, a variety of factors must be evaluated, including demographics, ...

- Swarm of Swiss robots could take part in future space missions

Researchers in Zurich propose using a swarm of small robots instead of a heavy rover for future space missions.

Go deeper with Google Headlines on:

Swarming technology

[google_news title=”” keyword=”swarming technology” num_posts=”5″ blurb_length=”0″ show_thumb=”left”]