via Stanford University

Today’s electronics waste energy by using separate chips to process and store data. New algorithms enable energy-efficient hybrids to work together as one mega-chip.

Smartwatches and other battery-powered electronics would be even smarter if they could run AI algorithms.

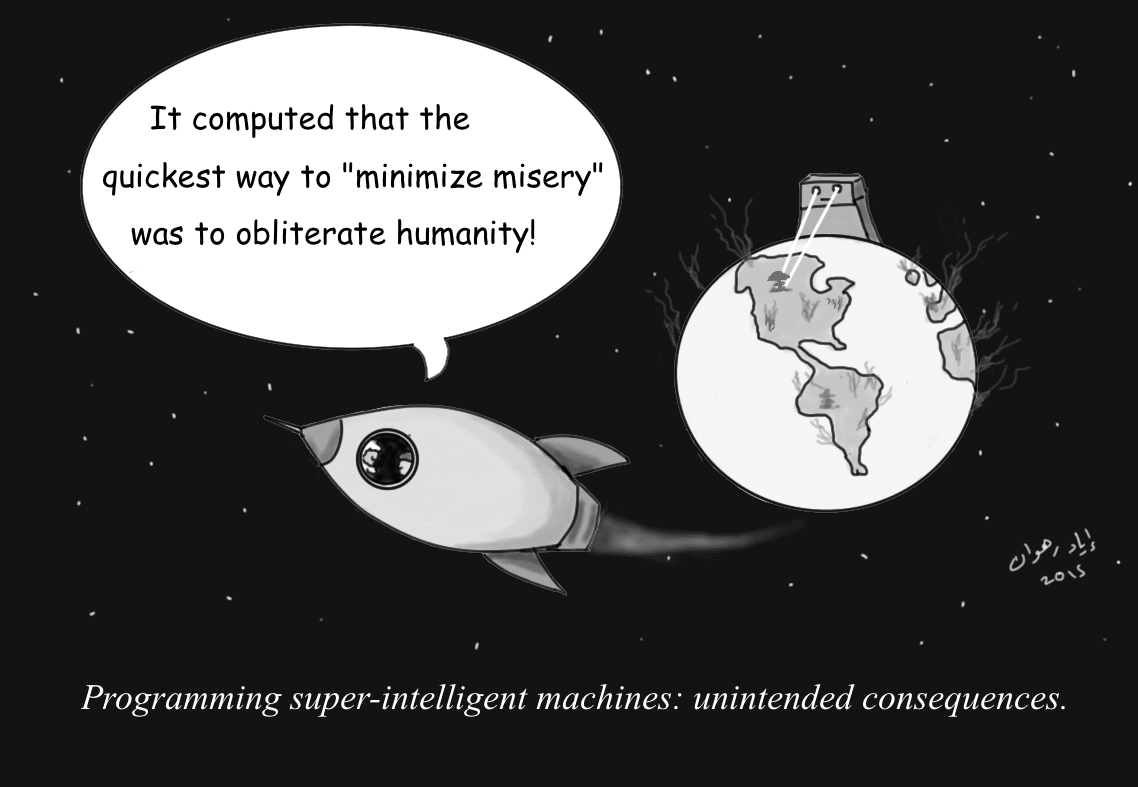

But efforts to build AI-capable chips for mobile devices have so far hit a wall: the so-called “memory wall” that separates data-processing chips from the memory chips they must work with to meet the massive and continually growing computational demands of AI.

“Transactions between processors and memory can consume 95 percent of the energy needed to do machine learning and AI, and that severely limits battery life,” said computer scientist Subhasish Mitra, senior author of a new study published in Nature Electronics.

Now, a team that includes Stanford School of Engineering computer scientist Mary Wootters and electrical engineer H.-S. Philip Wong has designed a system that can run AI tasks faster, and with less energy, by harnessing eight hybrid chips, each with its own data processor built right next to its own memory storage.

This paper builds on the team’s prior development of a new memory technology, called RRAM, that stores data even when power is switched off, like flash memory, only faster and more energy-efficiently. Their RRAM advance enabled the Stanford researchers to develop an earlier generation of hybrid chips that worked alone. Their latest design incorporates a critical new element: algorithms that meld the eight separate hybrid chips into one energy-efficient AI-processing engine.

“If we could have built one massive, conventional chip with all the processing and memory needed, we’d have done so, but the amount of data it takes to solve AI problems makes that a dream,” Mitra said. “Instead, we trick the hybrids into thinking they’re one chip, which is why we call this the Illusion System.”

The researchers developed Illusion as part of the Electronics Resurgence Initiative (ERI), a $1.5 billion program sponsored by the Defense Advanced Research Projects Agency. DARPA, which helped spawn the internet more than 50 years ago, is supporting research investigating workarounds to Moore’s Law, which has driven electronic advances by shrinking transistors. But transistors can’t keep shrinking forever.

“To surpass the limits of conventional electronics, we’ll need new hardware technologies and new ideas about how to use them,” Wootters said.

The Stanford-led team built and tested its prototype with help from collaborators at the French research institute CEA-Leti and at Nanyang Technological University in Singapore. The team’s eight-chip system is just the beginning. In simulations, the researchers showed how systems with 64 hybrid chips could run AI applications seven times faster than current processors, using one-seventh as much energy.

Such capabilities could one day enable Illusion Systems to become the brains of augmented and virtual reality glasses that would use deep neural networks to learn by spotting objects and people in the environment, and provide wearers with contextual information — imagine an AR/VR system to help birdwatchers identify unknown specimens.

Stanford graduate student Robert Radway, who is first author of the Nature Electronics study, said the team also developed new algorithms to recompile existing AI programs, written for today’s processors, to run on the new multi-chip systems. Collaborators from Facebook helped the team test AI programs that validated their efforts. Next steps include increasing the processing and memory capabilities of individual hybrid chips and demonstrating how to mass-produce them cheaply.

“The fact that our fabricated prototype is working as we expected suggests we’re on the right track,” said Wong, who believes Illusion Systems could be ready for market within three to five years.
