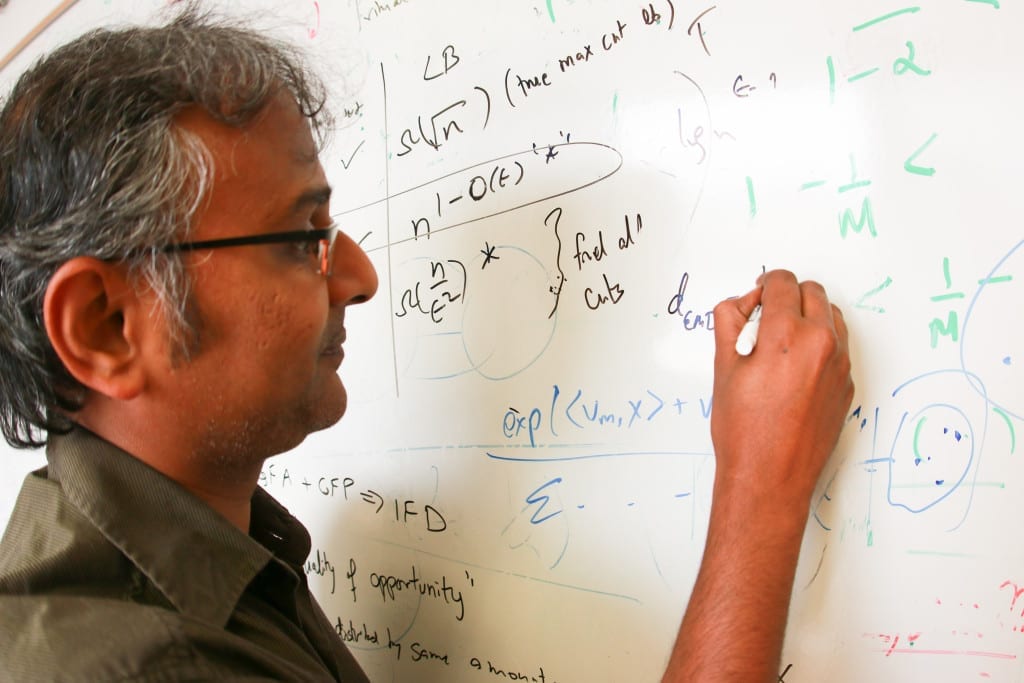

Suresh Venkatasubramanian, an associate professor in the University of Utah’s School of Computing, leads a team of researchers who have discovered a technique to determine whether algorithms used for tasks such as hiring or approving housing loans could in fact discriminate unintentionally. The team has also discovered a way to fix such errors if they exist. Their findings were recently presented at the 21st Association for Computing Machinery’s SIGKDD Conference on Knowledge Discovery and Data Mining in Sydney, Australia.

Software may appear to operate without bias because it strictly uses computer code to reach conclusions. That’s why many companies use algorithms to help weed out job applicants when hiring for a new position.

But a team of computer scientists from the University of Utah, the University of Arizona and Haverford College in Pennsylvania has discovered a way to find out whether an algorithm used for hiring decisions, loan approvals and comparably weighty tasks could be biased like a human being.

The researchers, led by Suresh Venkatasubramanian, an associate professor in the University of Utah’s School of Computing, have discovered a technique to determine whether such software programs discriminate unintentionally and violate the legal standards for fair access to employment, housing and other opportunities. The team has also devised a method to fix these potentially problematic algorithms. Venkatasubramanian presented the findings Aug. 12 at the 21st Association for Computing Machinery’s SIGKDD Conference on Knowledge Discovery and Data Mining in Sydney, Australia.

“There’s a growing industry around doing résumé filtering and résumé scanning to look for job applicants, so there is definitely interest in this,” says Venkatasubramanian. “If there are structural aspects of the testing process that would discriminate against one community just because of the nature of that community, that is unfair.”

Machine-learning algorithms

Many companies have been using algorithms in software programs to help filter out job applicants in the hiring process, typically because it can be overwhelming to sort through the applications manually when many people apply for the same job. A program can do that instead by scanning résumés, searching for keywords or numbers (such as school grade point averages) and then assigning an overall score to each applicant.

These programs can also learn as they analyze more data. Known as machine-learning algorithms, they can change and adapt like humans so they can better predict outcomes. Amazon uses similar algorithms to learn the buying habits of customers or to target ads more accurately, and Netflix uses them to learn the movie tastes of users when recommending new viewing choices.

But there has been a growing debate on whether machine-learning algorithms can introduce unintentional bias much like humans do.

“The irony is that the more we design artificial intelligence technology that successfully mimics humans, the more that A.I. is learning in a way that we do, with all of our biases and limitations,” Venkatasubramanian says.

Disparate impact

Venkatasubramanian’s research uses the legal definition of disparate impact to determine whether these software algorithms can be biased. Disparate impact is a theory in U.S. anti-discrimination law that says a policy may be considered discriminatory if it has an adverse impact on any group based on race, religion, gender, sexual orientation or other protected status.

The research showed that a test can determine whether the algorithm in question is possibly biased. If the test, which ironically uses another machine-learning algorithm, can accurately predict a person’s race or gender from the data being analyzed, even though race or gender has been removed from that data, then there is a potential for bias under the definition of disparate impact.

“I’m not saying it’s doing it, but I’m saying there is at least a potential for there to be a problem,” Venkatasubramanian says.
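The idea behind the test can be illustrated with a short sketch. This is not the researchers’ implementation. The applicant data, the single numeric feature, the group labels "A" and "B", the simple midpoint-threshold classifier and the use of balanced accuracy as the score are all assumptions made for illustration. The sketch trains a predictor on data from which the protected attribute has been removed, then checks how well that attribute can still be guessed.

```python
from statistics import mean

def fit_threshold(values, groups):
    """Learn a one-feature classifier: the midpoint between the two group means."""
    mean_a = mean(v for v, g in zip(values, groups) if g == "A")
    mean_b = mean(v for v, g in zip(values, groups) if g == "B")
    threshold = (mean_a + mean_b) / 2
    high_group = "A" if mean_a > mean_b else "B"
    low_group = "B" if high_group == "A" else "A"
    return threshold, high_group, low_group

def predict(value, model):
    """Guess the hidden group from the feature value alone."""
    threshold, high_group, low_group = model
    return high_group if value > threshold else low_group

def balanced_accuracy(values, groups, model):
    """Average of per-group accuracy, so a large group cannot dominate the score."""
    per_group = []
    for label in ("A", "B"):
        members = [v for v, g in zip(values, groups) if g == label]
        correct = sum(predict(v, model) == label for v in members)
        per_group.append(correct / len(members))
    return mean(per_group)

# Hypothetical data: one remaining feature per applicant, plus the protected
# group label that was withheld from the hiring algorithm.
train_values = [52, 48, 55, 50, 31, 35, 29, 33]
train_groups = ["A", "A", "A", "A", "B", "B", "B", "B"]
test_values = [51, 47, 40, 34, 30, 36]
test_groups = ["A", "A", "A", "B", "B", "B"]

model = fit_threshold(train_values, train_groups)
score = balanced_accuracy(test_values, test_groups, model)
print(f"Balanced accuracy predicting the hidden group: {score:.2f}")
```

A score near 0.5 would suggest the remaining data says little about group membership. A score close to 1.0 means the hidden attribute can be reconstructed from the data, which is the situation the article describes as a potential problem.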

Read more: PROGRAMMING AND PREJUDICE
