Software may appear to operate without bias because it strictly uses computer code to reach conclusions. That’s why many companies use algorithms to help weed out job applicants when hiring for a new position.

But a team of computer scientists from the University of Utah, the University of Arizona and Haverford College in Pennsylvania has discovered a way to find out if an algorithm used for hiring decisions, loan approvals and comparably weighty tasks could be biased like a human being.

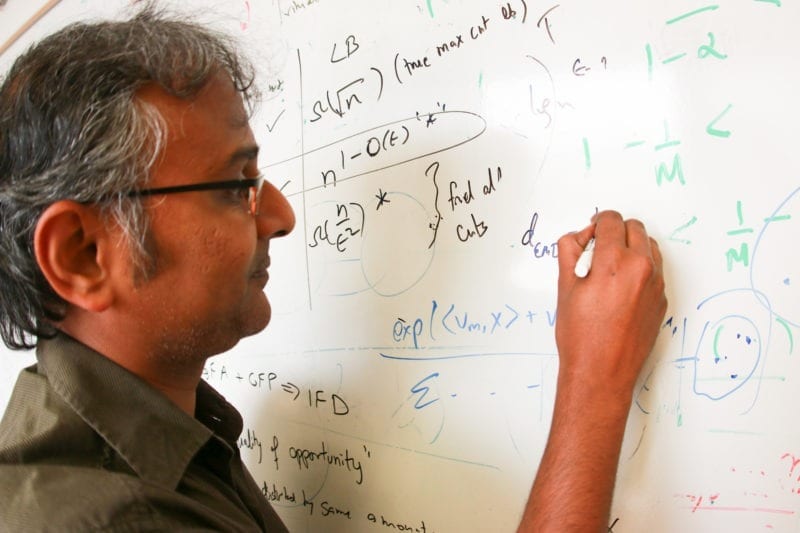

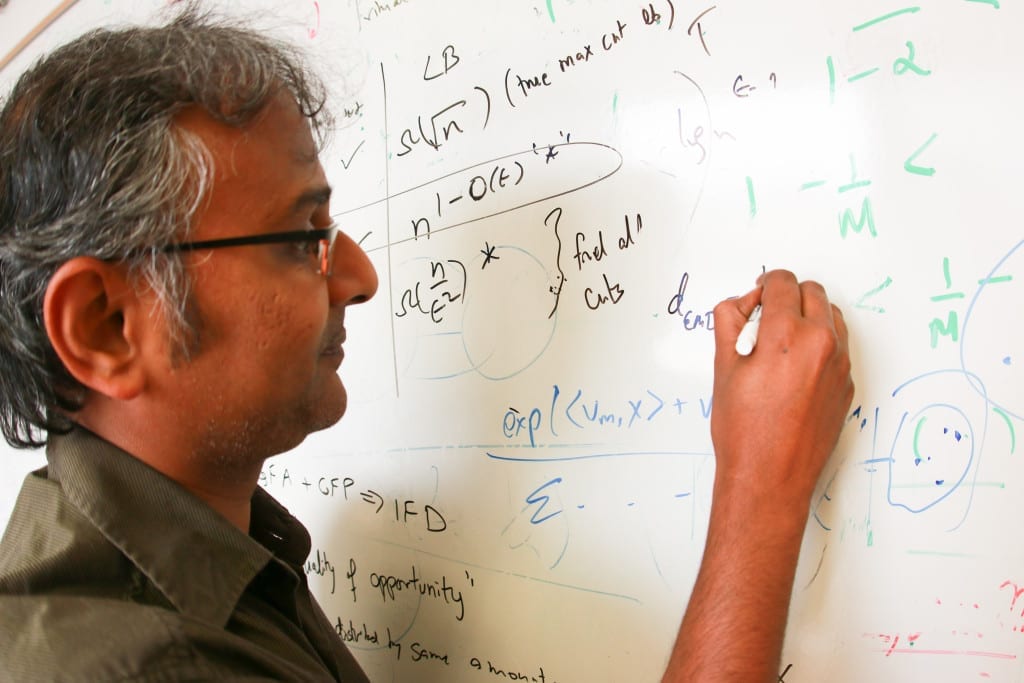

The researchers, led by Suresh Venkatasubramanian, an associate professor in the University of Utah’s School of Computing, have discovered a technique to determine if such software programs discriminate unintentionally and violate the legal standards for fair access to employment, housing and other opportunities. The team has also developed a method to fix these potentially troubled algorithms. Venkatasubramanian presented his findings Aug. 12 at the 21st Association for Computing Machinery’s SIGKDD Conference on Knowledge Discovery and Data Mining in Sydney, Australia.

“There’s a growing industry around doing résumé filtering and résumé scanning to look for job applicants, so there is definitely interest in this,” says Venkatasubramanian. “If there are structural aspects of the testing process that would discriminate against one community just because of the nature of that community, that is unfair.”

Machine-learning algorithms

Many companies have been using algorithms in software programs to help filter out job applicants in the hiring process, typically because it can be overwhelming to sort through the applications manually if many people apply for the same job. A program can do that instead by scanning résumés, searching for keywords or numbers (such as school grade point averages) and then assigning an overall score to each applicant.
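A keyword-and-number scoring pass of the kind described above might look roughly like the following sketch. It is only an illustration: the keyword list, the weights, the `GPA:` pattern and the function name `score_resume` are all assumptions made for this example, not details of any real screening product.

```python
import re

# Hypothetical keywords and weights, chosen only for illustration.
KEYWORD_WEIGHTS = {"python": 2.0, "sql": 1.5, "leadership": 1.0}


def score_resume(text, keyword_weights=KEYWORD_WEIGHTS, gpa_weight=1.0):
    """Return an overall score: matched keyword weights plus a weighted GPA."""
    lowered = text.lower()
    # Collect the distinct alphabetic words that appear in the resume.
    words = set(re.findall(r"[a-z]+", lowered))
    keyword_score = sum(
        weight for keyword, weight in keyword_weights.items() if keyword in words
    )
    # Look for a number written like "GPA: 3.5"; treat a missing GPA as 0.
    match = re.search(r"gpa[:\s]+(\d\.\d+)", lowered)
    gpa = float(match.group(1)) if match else 0.0
    return keyword_score + gpa_weight * gpa


strong = score_resume("Python developer with SQL experience. GPA: 3.5")
weak = score_resume("Retail experience")
print(strong, weak)
```

Even a scorer this simple shows where unintended bias can enter: whichever keywords and numbers it rewards may be more common in some communities than in others.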

These programs can also learn as they analyze more data. Known as machine-learning algorithms, they can change and adapt like humans so they can better predict outcomes. Amazon uses similar algorithms to learn the buying habits of customers and to target ads more accurately, and Netflix uses them to learn the movie tastes of users when recommending new viewing choices.

But there has been a growing debate on whether machine-learning algorithms can introduce unintentional bias much like humans do.

“The irony is that the more we design artificial intelligence technology that successfully mimics humans, the more that A.I. is learning in a way that we do, with all of our biases and limitations,” Venkatasubramanian says.

Disparate impact

Venkatasubramanian’s research tests whether these software algorithms can be biased under the legal definition of disparate impact, a theory in U.S. anti-discrimination law that says a policy may be considered discriminatory if it has an adverse impact on any group based on race, religion, gender, sexual orientation or other protected status.

Venkatasubramanian’s research revealed that a test can determine if the algorithm in question is possibly biased. If the test (which, ironically, uses another machine-learning algorithm) can accurately predict a person’s race or gender from the data being analyzed, even though race or gender is hidden from the data, then there is a potential for bias under the definition of disparate impact.

“I’m not saying it’s doing it, but I’m saying there is at least a potential for there to be a problem,” Venkatasubramanian says.
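The idea behind the test described above can be illustrated with a minimal sketch. This is not the researchers' implementation. It assumes a toy nearest-centroid classifier, two made-up features (years of experience and a hypothetical neighborhood index), invented group labels "A" and "B", and balanced accuracy as the measure of how well the hidden attribute can be recovered.

```python
from statistics import mean


def train_centroids(rows, labels):
    """Compute the mean feature vector of each group (nearest-centroid model)."""
    groups = {}
    for row, label in zip(rows, labels):
        groups.setdefault(label, []).append(row)
    return {
        label: [mean(column) for column in zip(*members)]
        for label, members in groups.items()
    }


def predict(centroids, row):
    """Return the group whose centroid is closest to the row.

    Ties go to the group that appeared first in the training data.
    """
    def squared_distance(centroid):
        return sum((a - b) ** 2 for a, b in zip(row, centroid))

    return min(centroids, key=lambda label: squared_distance(centroids[label]))


def balanced_accuracy(centroids, rows, labels):
    """Average the per-group accuracy so every group counts equally."""
    per_group = []
    for label in sorted(set(labels)):
        indices = [i for i, current in enumerate(labels) if current == label]
        correct = sum(predict(centroids, rows[i]) == label for i in indices)
        per_group.append(correct / len(indices))
    return mean(per_group)


# Toy data, invented for illustration: (years_experience, neighborhood_index).
# The group label is never given to the model as a feature.
train_rows = [(3, 1.0), (5, 1.2), (4, 0.9), (6, 1.1),
              (3, 4.0), (5, 4.2), (4, 3.9), (6, 4.1)]
train_labels = ["A", "A", "A", "A", "B", "B", "B", "B"]
test_rows = [(4, 1.05), (5, 0.95), (4, 4.05), (5, 3.95)]
test_labels = ["A", "A", "B", "B"]

# With the neighborhood index present, the hidden group can be recovered.
leaky_score = balanced_accuracy(
    train_centroids(train_rows, train_labels), test_rows, test_labels
)

# With only years of experience, the groups look identical to the model.
blind_train = [(row[0],) for row in train_rows]
blind_test = [(row[0],) for row in test_rows]
blind_score = balanced_accuracy(
    train_centroids(blind_train, train_labels), blind_test, test_labels
)

print(leaky_score, blind_score)
```

A balanced accuracy near 1.0 means the remaining data still reveals the hidden attribute, which signals a potential for disparate impact. A score near 0.5, the level of a coin flip for two groups, means the attribute cannot be recovered from those features.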

