A team of researchers from the Department of Energy’s Oak Ridge National Laboratory has married artificial intelligence and high-performance computing to achieve a peak speed of 20 petaflops in the generation and training of deep learning networks on the laboratory’s Titan supercomputer.

Deep learning is a burgeoning field of artificial intelligence that uses networks modeled after the human brain to “learn” how to distinguish features and patterns in vast datasets. Such networks hold great promise in the realization of numerous technologies, from self-driving cars to intelligent robots.

Because deep learning can make sense of massive amounts of data, researchers across the scientific spectrum are eager to refine it and apply it to some of today’s most challenging science problems. One such effort is ORNL’s Advances in Machine Learning to Improve Scientific Discovery at Exascale and Beyond (ASCEND) project, which aims to use deep learning to make sense of the massive datasets produced by the world’s most sophisticated scientific experiments, such as those located at ORNL.

Analyzing such datasets generally requires modifying existing neural networks or designing novel ones, then “training” them so that they know precisely what to look for and can produce valid results.

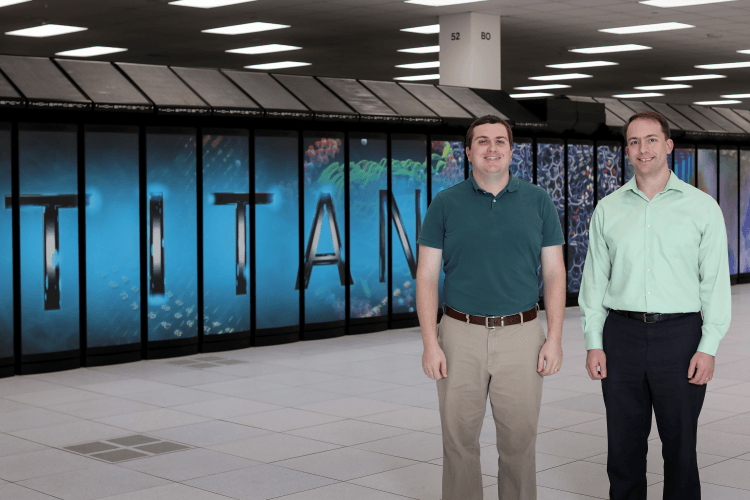

This is a time-consuming and difficult task, but one that an ORNL team led by Robert Patton and including Steven Young and Travis Johnston recently demonstrated can be dramatically expedited with a capable computing system such as ORNL’s Titan, the nation’s fastest supercomputer for science.

To efficiently design neural networks capable of tackling scientific datasets and expediting breakthroughs, Patton’s team developed two codes for evolving (MENNDL) and fine-tuning (RAvENNA) deep neural network architectures.
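For readers unfamiliar with what "evolving" a network architecture means, the following is a minimal, generic sketch of an evolutionary search in Python. It is not MENNDL's actual algorithm, which the article does not describe: the search space, the `toy_fitness` function, and all parameter values are hypothetical, and a real system would replace `toy_fitness` with training each candidate network and measuring its accuracy.

```python
import random

# Hypothetical search space; the article does not list MENNDL's real hyperparameters.
SEARCH_SPACE = {
    "num_layers": [2, 4, 6, 8],
    "kernel_size": [1, 3, 5, 7],
    "num_filters": [16, 32, 64, 128],
}

def random_genome(rng):
    """Pick one value per hyperparameter to describe a candidate network."""
    return {name: rng.choice(options) for name, options in SEARCH_SPACE.items()}

def mutate(genome, rng):
    """Copy a genome and re-draw one randomly chosen hyperparameter."""
    child = dict(genome)
    name = rng.choice(list(SEARCH_SPACE))
    child[name] = rng.choice(SEARCH_SPACE[name])
    return child

def toy_fitness(genome):
    """Stand-in for 'train the network and score it'; higher is better."""
    target = {"num_layers": 6, "kernel_size": 3, "num_filters": 64}
    return -sum(abs(genome[k] - target[k]) for k in target)

def evolve(generations=30, population_size=20, seed=0):
    """Keep the top quarter each generation and refill with mutated copies."""
    rng = random.Random(seed)
    population = [random_genome(rng) for _ in range(population_size)]
    for _ in range(generations):
        ranked = sorted(population, key=toy_fitness, reverse=True)
        parents = ranked[: population_size // 4]
        children = [
            mutate(rng.choice(parents), rng)
            for _ in range(population_size - len(parents))
        ]
        population = parents + children
    return max(population, key=toy_fitness)

if __name__ == "__main__":
    print(evolve())
```

In a sketch like this, each candidate in a generation can be scored independently of the others, which is the property that lets a machine with many nodes evaluate many networks at once.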

Both codes can generate and train as many as 18,600 neural networks simultaneously. Peak performance can be estimated by randomly sampling, and then carefully profiling, several hundred of these independently trained networks.
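The sampling idea can be illustrated with a short Python sketch. It assumes, as a simplification, that the aggregate rate is the mean profiled rate of the sampled networks multiplied by the number of networks running at once; the function name and the synthetic rates below are hypothetical and are not the team's measurements.

```python
import random
import statistics

def estimate_aggregate_flops(sampled_rates, total_networks):
    """Extrapolate a machine-wide rate from a profiled random sample.

    sampled_rates: floating-point operations per second measured for each
        sampled network (hypothetical input format).
    total_networks: number of networks training at the same time.
    Returns (estimate, standard_error), both in operations per second.
    The standard error ignores the finite-population correction.
    """
    mean_rate = statistics.mean(sampled_rates)
    stderr = statistics.stdev(sampled_rates) / len(sampled_rates) ** 0.5
    return mean_rate * total_networks, stderr * total_networks

if __name__ == "__main__":
    rng = random.Random(0)
    # Synthetic stand-in data: 300 "profiled" networks, not real measurements.
    synthetic_rates = [rng.gauss(1.0e12, 0.1e12) for _ in range(300)]
    estimate, stderr = estimate_aggregate_flops(synthetic_rates, 18_600)
    print(f"estimated aggregate rate: {estimate / 1e15:.2f} petaflops")
    print(f"standard error:           {stderr / 1e15:.3f} petaflops")
```

Profiling a few hundred networks instead of all of them keeps the measurement overhead small, at the cost of a sampling error that shrinks as the sample grows.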

Both codes achieved a peak performance of 20 petaflops, or 20 thousand trillion calculations per second, on Titan (just under half of Titan’s total single-precision peak performance). In practical terms, that translates to training 40,000 to 50,000 networks per hour.
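A quick back-of-the-envelope check in Python shows what these figures imply per network. It uses only the numbers quoted above and assumes, for illustration, that all 18,600 training slots stay busy and each trains one network at a time; the variable names are ours.

```python
PEAK_FLOPS = 20e15                   # 20 petaflops, as reported
CONCURRENT_NETWORKS = 18_600         # networks trained simultaneously
NETWORKS_PER_HOUR = (40_000, 50_000) # reported throughput range

# Average rate available to each concurrently trained network.
flops_per_network = PEAK_FLOPS / CONCURRENT_NETWORKS

# "Just under half" of single-precision peak implies a peak a little above this.
implied_peak_lower_bound = 2 * PEAK_FLOPS

# Average wall-clock minutes per network if every slot is always busy.
minutes_per_network = [60 * CONCURRENT_NETWORKS / n for n in NETWORKS_PER_HOUR]

if __name__ == "__main__":
    print(f"per-network rate: {flops_per_network / 1e12:.2f} teraflops")
    print(f"implied machine peak: above {implied_peak_lower_bound / 1e15:.0f} petaflops")
    print("minutes per network: "
          + " to ".join(f"{m:.1f}" for m in sorted(minutes_per_network)))
```

Under these assumptions, each network runs at roughly 1.08 teraflops and trains in about 22 to 28 minutes on average.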

“The real measure of success in the deep learning community is time-to-solution,” said Johnston. “And with a machine like Titan we are able to train an unparalleled number of highly accurate networks.”

Titan is a Cray hybrid system, meaning that it uses both traditional CPUs and graphics processing units (GPUs) to tackle complex calculations for big science problems efficiently; the GPUs also happen to be the processor of choice for training deep learning networks.

The team’s work demonstrates that with the right high-performance computing system researchers can efficiently train large numbers of networks, which can then be used to help them tackle today’s increasingly data-heavy experiments and simulations.

This efficient design of deep neural networks will enable researchers to deploy highly accurate, custom-designed models, saving both time and money by freeing the scientist from the task of designing a network from the ground up.

And because Summit, the next leadership computing system at the Oak Ridge Leadership Computing Facility (OLCF), features a deep-learning-friendly architecture with enhanced GPUs and complementary Tensor cores, the team is confident both codes will only get faster.

“Out of the box, without tuning to Summit’s unique architecture, we are expecting an increase in performance up to 50 times,” said Johnston.

With that sort of network training capability, Summit could be indispensable to researchers across the scientific spectrum looking to deep learning to help them tackle some of science’s most immense challenges.

Patton’s team is not waiting for the improved hardware to start tackling current scientific data challenges; they have already deployed their codes to assist domain scientists at the Department of Energy’s Fermilab in Batavia, Illinois.

Researchers at Fermilab used MENNDL to better understand how neutrinos interact with ordinary matter by producing a classification network to support their Main Injector Experiment for ν-A (MINERvA), a neutrino scattering experiment. The task, known as vertex reconstruction, required a network to analyze images and precisely identify the location where neutrinos interact with one of many targets, a task akin to finding the aerial source of a starburst of fireworks.

In only 24 hours, MENNDL produced optimized networks that outperformed any previously handcrafted network—an achievement that could easily have taken scientists months to accomplish. To identify the high-performing network, MENNDL evaluated approximately 500,000 neural networks, training them on a data set consisting of 800,000 images of neutrino events, steadily using 18,000 of Titan’s nodes.
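The scale of that run can be put in per-node terms with some simple arithmetic, sketched here in Python. The inputs are the approximate figures quoted above; the assumption that each node trains one network at a time is ours, made only to get an average.

```python
NETWORKS_EVALUATED = 500_000   # approximate, as reported
RUN_HOURS = 24
NODES_USED = 18_000

# Sustained throughput across the whole run.
networks_per_hour = NETWORKS_EVALUATED / RUN_HOURS

# Average number of networks each node handled over the run.
networks_per_node = NETWORKS_EVALUATED / NODES_USED

# Average minutes per network, assuming one network per node at a time.
minutes_per_network = RUN_HOURS * 60 / networks_per_node

if __name__ == "__main__":
    print(f"networks per hour:   {networks_per_hour:,.0f}")
    print(f"networks per node:   {networks_per_node:.1f}")
    print(f"minutes per network: {minutes_per_network:.1f}")
```

On those assumptions, the run sustained about 20,800 networks per hour, with each node working through roughly 28 networks at an average of about 52 minutes apiece.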

“You need something like MENNDL to explore this effectively infinite space of possible networks, but you want to do it efficiently,” Young said. “What Titan does is bring the time to solution down to something practical.”

And with Summit to come online this year, the future of deep learning in big science looks bright indeed.

Learn more: ORNL researchers use Titan to accelerate design, training of deep learning networks
