via University of Texas at Austin

The rapid progression of technology has led to a huge increase in energy usage to process the massive troves of data generated by devices. But researchers in the Cockrell School of Engineering at The University of Texas at Austin have found a way to make the new generation of smart computers more energy efficient.

Traditionally, silicon chips have formed the building blocks of the infrastructure that powers computers. This research uses magnetic components instead of silicon, and it reveals how the physics of those components can cut the energy cost of training neural networks, algorithms that can think like humans and do things like recognize images and patterns.

“Right now, the methods for training your neural networks are very energy-intensive,” said Jean Anne Incorvia, an assistant professor in the Cockrell School’s Department of Electrical and Computer Engineering. “What our work can do is help reduce the training effort and energy costs.”

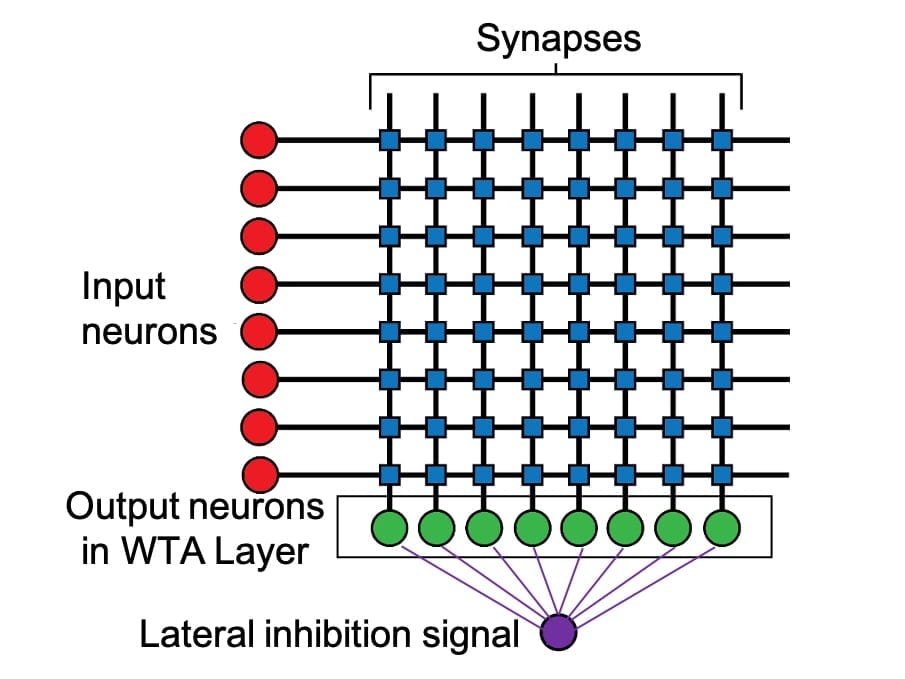

The researchers’ findings were published this week in IOP Nanotechnology. Incorvia led the study with first author and second-year graduate student Can Cui. Incorvia and Cui discovered that spacing magnetic nanowires, which act as artificial neurons, in certain ways naturally increases the ability of the artificial neurons to compete against each other, with the most activated ones winning out. Achieving this effect, known as “lateral inhibition,” traditionally requires extra circuitry within computers, which increases costs and takes more energy and space.

Incorvia said their method uses 20 to 30 times less energy than a standard back-propagation algorithm performing the same learning tasks.

Just as human brains contain neurons, new-era computers have artificial versions of these integral nerve cells. Lateral inhibition occurs when the neurons firing the fastest are able to prevent slower neurons from firing. In computing, this cuts down on energy use in processing data.
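The winner-take-all behavior that lateral inhibition produces can be illustrated with a toy software model. The sketch below is only an illustration, not the researchers' method: it does not model magnetic nanowires, and the function names, the `inhibition` strength and the update rule are all assumptions chosen for clarity. Each artificial neuron is repeatedly suppressed in proportion to the combined activity of the others, so the most activated one ends up as the only neuron still firing.

```python
def lateral_inhibition(inputs, inhibition=0.2, steps=50):
    """Toy model (assumed update rule): each unit is suppressed by the
    summed activity of all the other units, and cannot drop below zero."""
    activity = [float(x) for x in inputs]
    for _ in range(steps):
        total = sum(activity)
        # Subtract a fraction of everyone else's activity from each unit.
        activity = [max(0.0, a - inhibition * (total - a)) for a in activity]
    return activity


def winner(inputs, **kwargs):
    """Index of the unit with the highest activity after inhibition."""
    final = lateral_inhibition(inputs, **kwargs)
    return max(range(len(final)), key=final.__getitem__)


if __name__ == "__main__":
    drives = [0.5, 0.9, 0.7]  # hypothetical initial activation levels
    print(lateral_inhibition(drives))
    print("winning neuron:", winner(drives))
```

With these example values, the neuron that starts with the strongest activation silences the other two within a few iterations. In conventional hardware this competition needs dedicated circuitry; the article's point is that suitably spaced magnetic nanowires produce it through their own physics.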

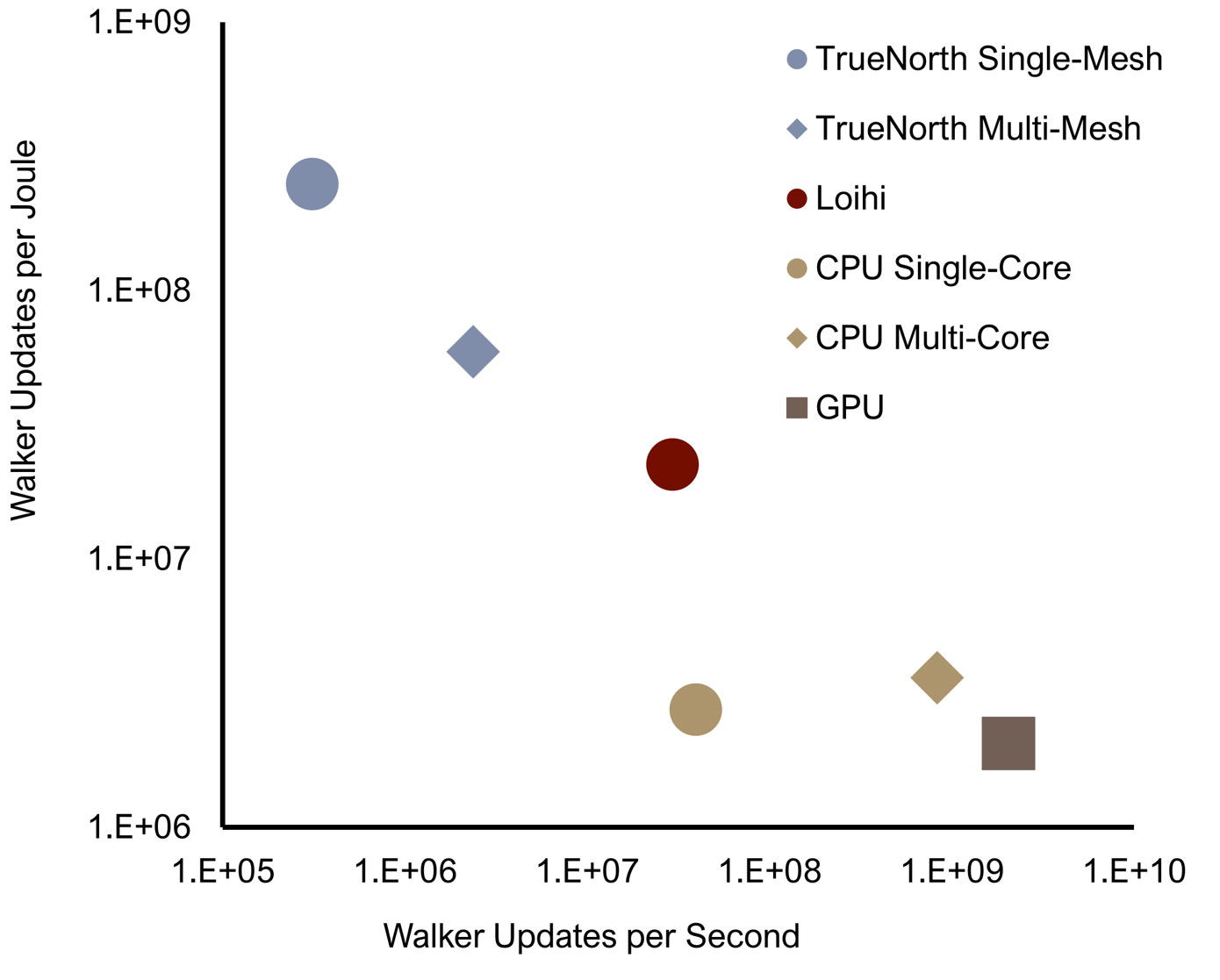

Incorvia explains that the way computers operate is fundamentally changing. A major trend is the concept of neuromorphic computing, which is essentially designing computers to think like human brains. Instead of processing tasks one at a time, these smarter devices are meant to analyze huge amounts of data simultaneously. These innovations have powered the revolution in machine learning and artificial intelligence that has dominated the technology landscape in recent years.

This research focused on interactions between two magnetic neurons, with initial results on interactions among multiple neurons. The next steps are to apply the findings to larger sets of neurons and to verify them experimentally.
