In debates over the future of artificial intelligence, many experts think of the new systems as coldly logical and objectively rational. But in a new study, researchers have demonstrated how machines can be reflections of us, their creators, in potentially problematic ways.

Common machine learning programs, when trained with ordinary human language available online, can acquire cultural biases embedded in the patterns of wording, the researchers found. These biases range from the morally neutral, like a preference for flowers over insects, to objectionable views of race and gender.

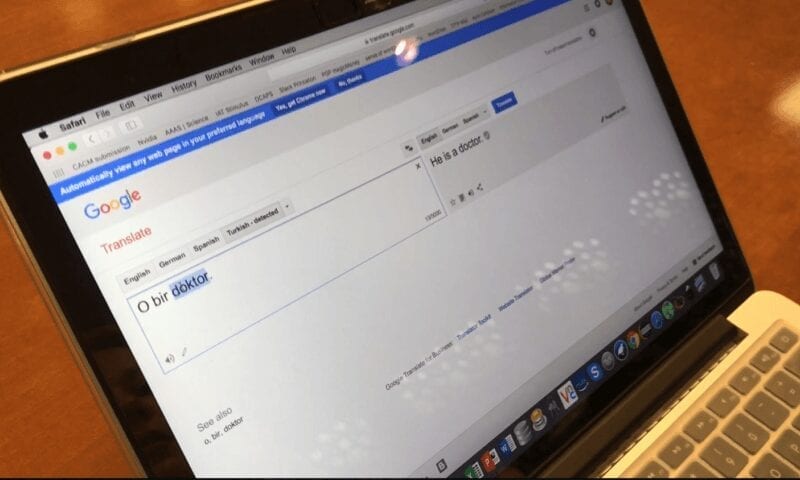

Identifying and addressing possible bias in machine learning will be critically important as we increasingly turn to computers for processing the natural language humans use to communicate, for instance in doing online text searches, image categorization and automated translations.

“Questions about fairness and bias in machine learning are tremendously important for our society,” said researcher Arvind Narayanan, an assistant professor of computer science and an affiliated faculty member at the Center for Information Technology Policy (CITP) at Princeton University, as well as an affiliate scholar at Stanford Law School’s Center for Internet and Society. “We have a situation where these artificial intelligence systems may be perpetuating historical patterns of bias that we might find socially unacceptable and which we might be trying to move away from.”

The paper, “Semantics derived automatically from language corpora contain human-like biases,” was published April 14 in Science. Its lead author is Aylin Caliskan, a postdoctoral research associate and a CITP fellow at Princeton; Joanna Bryson, a reader at the University of Bath and a CITP affiliate, is a coauthor.

As a touchstone for documented human biases, the study turned to the Implicit Association Test, used in numerous social psychology studies since its development at the University of Washington in the late 1990s. The test measures response times (in milliseconds) by human subjects asked to pair word concepts displayed on a computer screen. Response times are far shorter, the Implicit Association Test has repeatedly shown, when subjects are asked to pair two concepts they find similar, versus two concepts they find dissimilar.

Take flower types, like “rose” and “daisy,” and insects like “ant” and “moth.” These words can be paired with pleasant concepts, like “caress” and “love,” or unpleasant notions, like “filth” and “ugly.” People more quickly associate the flower words with pleasant concepts, and the insect terms with unpleasant ideas.

The Princeton team devised an experiment with a program that essentially functioned like a machine learning version of the Implicit Association Test. Called GloVe, and developed by Stanford University researchers, the popular, open-source program is of the sort that a startup machine learning company might use at the heart of its product. The GloVe algorithm can represent the co-occurrence statistics of words in, say, a 10-word window of text. Words that often appear near one another have a stronger association than words that seldom do.
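To make the idea of windowed co-occurrence concrete, here is a minimal Python sketch that counts how often two words appear within a fixed number of tokens of each other. It is not GloVe itself, which goes on to fit word vectors to statistics of this kind; the function name `cooccurrence_counts`, the sample sentence and the 3-word window are all assumptions chosen for illustration.

```python
from collections import Counter


def cooccurrence_counts(tokens, window=10):
    """Count unordered word pairs that occur within `window` tokens of each other.

    Hypothetical helper for illustration; raw counts only, no weighting.
    """
    counts = Counter()
    for i, word in enumerate(tokens):
        # Look only ahead, so each pair of positions is counted once.
        for other in tokens[i + 1 : i + 1 + window]:
            if other != word:
                # Sort the pair so (a, b) and (b, a) share one key.
                counts[tuple(sorted((word, other)))] += 1
    return counts


# Made-up sample text, with a small window so the effect is visible.
text = "the rose is a lovely flower and the ant is an ugly insect"
counts = cooccurrence_counts(text.split(), window=3)

print(counts[("lovely", "rose")])  # pair appears inside one window
print(counts[("ant", "ugly")])     # pair appears inside one window
print(counts[("rose", "ugly")])    # pair never shares a window
```

In this toy sentence, "rose" shares a window with "lovely" but never with "ugly," which is the kind of signal a model trained on much larger text can pick up.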

The Stanford researchers turned GloVe loose on a huge trawl of contents from the World Wide Web, containing 840 billion words. Within this large sample of written human culture, Narayanan and colleagues then examined sets of so-called target words, like “programmer, engineer, scientist” and “nurse, teacher, librarian” alongside two sets of attribute words, such as “man, male” and “woman, female,” looking for evidence of the kinds of biases humans can unwittingly possess.
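The comparison of target words against two sets of attribute words can be sketched in a few lines of Python, here using the morally neutral flower and insect example. The sketch scores a word by how much closer its vector lies, on average, to one attribute set than to the other, using cosine similarity. The two-dimensional vectors below are invented for illustration and are not real GloVe embeddings, and the helper names `cosine` and `association` are assumptions rather than the study's code.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def association(word_vec, attrs_a, attrs_b):
    """Mean similarity to attribute set A minus mean similarity to set B."""
    mean_a = sum(cosine(word_vec, a) for a in attrs_a) / len(attrs_a)
    mean_b = sum(cosine(word_vec, b) for b in attrs_b) / len(attrs_b)
    return mean_a - mean_b


# Invented 2-D toy vectors (assumption): NOT taken from any trained model.
toy = {
    "rose": [0.9, 0.1],
    "ant": [0.1, 0.9],
    "love": [1.0, 0.0],
    "caress": [0.8, 0.2],
    "filth": [0.0, 1.0],
    "ugly": [0.2, 0.8],
}

pleasant = [toy["love"], toy["caress"]]
unpleasant = [toy["filth"], toy["ugly"]]

rose_score = association(toy["rose"], pleasant, unpleasant)
ant_score = association(toy["ant"], pleasant, unpleasant)

print(f"rose: {rose_score:+.3f}")  # positive: leans toward the pleasant set
print(f"ant:  {ant_score:+.3f}")   # negative: leans toward the unpleasant set
```

A positive score means the word sits closer to the first attribute set and a negative score means it sits closer to the second. With real embeddings, the same calculation would be run over the full word lists rather than a handful of hand-picked vectors.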

Learn more: Biased bots: Human prejudices sneak into artificial intelligence systems
