Credit: Florida Atlantic University

Can machines think? That’s what renowned mathematician Alan Turing sought to understand in 1950, when he devised an imitation game to find out whether a human interrogator could tell a human from a machine based solely on a conversation stripped of physical cues.

The Turing test was designed to assess a machine’s ability to exhibit intelligent behavior equivalent to, or even indistinguishable from, that of a human. Turing was chiefly concerned with whether machines could match humans’ intellectual capacities.

But there is more to being human than intellectual prowess, so researchers from the Center for Complex Systems and Brain Sciences (CCSBS) in the Charles E. Schmidt College of Science at Florida Atlantic University set out to answer the question: “How does it ‘feel’ to interact behaviorally with a machine?”

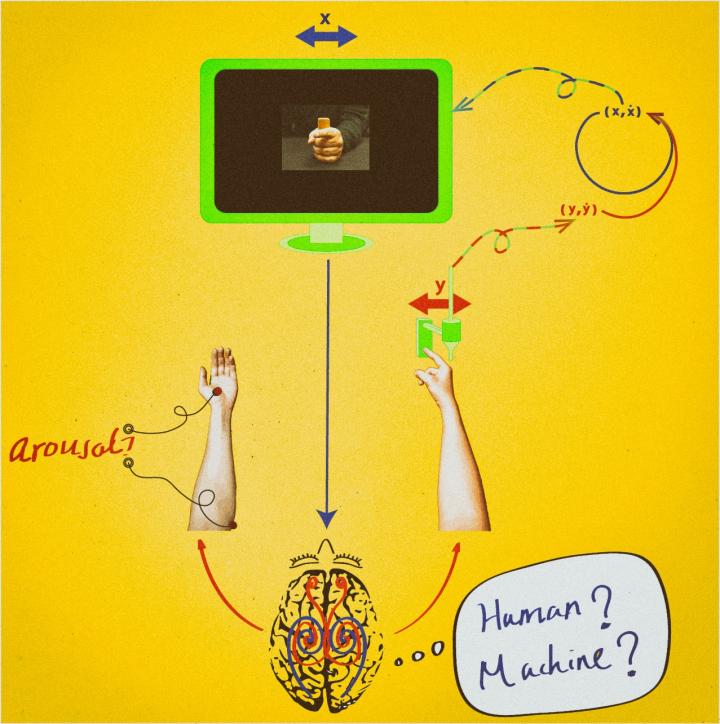

They created the equivalent of an “emotional” Turing test by developing a virtual partner that can elicit emotional responses from its human partner while the pair coordinates their behavior in real time.

Results of the study, titled “Enhanced Emotional Responses during Social Coordination with a Virtual Partner,” were recently published in the International Journal of Psychophysiology. The researchers designed the virtual partner so that its behavior is governed by mathematical models of human-to-human interaction, enabling humans to interact with a mathematical description of their own social selves.

“Our study shows that humans exhibited greater emotional arousal when they thought the virtual partner was a human and not a machine, even though in all cases, it was a machine that they were interacting with,” said Mengsen Zhang, lead author and a Ph.D. student in FAU’s CCSBS. “Maybe we can think of intelligence in terms of coordinated motion within and between brains.”

The virtual partner is a key part of a paradigm developed at FAU called the Human Dynamic Clamp, a state-of-the-art human-machine interface technology that allows humans to interact with a computational model that behaves very much like humans themselves. In simple experiments, the model, on receiving input from human movement, drives an image of a moving hand which is displayed on a video screen. To complete the reciprocal coupling, the subject sees and coordinates with the moving image as if it were a real person observed through a video circuit. This social “surrogate” can be precisely tuned and controlled, both by the experimenter and by the input from the human subject.
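The release does not spell out the virtual partner’s equations. Kelso’s coordination dynamics is usually introduced through the Haken-Kelso-Bunz (HKB) model, in which the relative phase φ between two rhythmically moving limbs obeys dφ/dt = Δω − a sin φ − 2b sin 2φ. The sketch below assumes a heavily simplified version of the reciprocal loop described above: the partner is reduced to a single phase oscillator that adapts to a human finger tapping at a steady 1 Hz, which stands in, hypothetically, for live sensor input. The function name `simulate_virtual_partner` and all parameter values are illustrative assumptions, not the FAU system’s actual implementation.

```python
import math


def simulate_virtual_partner(initial_phi: float, a: float = 1.0, b: float = 1.0,
                             omega_human: float = 2.0 * math.pi,
                             omega_vp: float = 2.0 * math.pi,
                             dt: float = 0.001, seconds: float = 20.0) -> float:
    """Couple a virtual-partner phase oscillator to a simulated human finger.

    Illustrative sketch only (assumed HKB-style coupling, not the FAU model).
    The relative phase phi = theta_vp - theta_human then follows
    dphi/dt = (omega_vp - omega_human) - a*sin(phi) - 2b*sin(2*phi).
    Returns the final relative phase wrapped to [-pi, pi].
    """
    theta_human = 0.0
    theta_vp = initial_phi
    for _ in range(int(seconds / dt)):
        phi = theta_vp - theta_human
        # Only the virtual partner adapts; the human's phase is treated as input.
        coupling = -a * math.sin(phi) - 2.0 * b * math.sin(2.0 * phi)
        # Euler step for both phases, computed from the same current state.
        theta_vp += (omega_vp + coupling) * dt
        # Hypothetical stand-in for sensor input: steady 1 Hz human tapping.
        theta_human += omega_human * dt
    # In a display loop, math.cos(theta_vp) would set the on-screen hand position.
    phi = theta_vp - theta_human
    return math.atan2(math.sin(phi), math.cos(phi))


# Usage: compare where the pair settles from different starting phases.
print(simulate_virtual_partner(1.0))         # settles near 0 (in-phase)
print(simulate_virtual_partner(2.5))         # settles near +/-pi (anti-phase)
print(simulate_virtual_partner(2.5, b=0.1))  # anti-phase unstable, settles near 0
```

With equal frequencies, anti-phase coordination is stable only while b > a/4; when b is small the partner falls back to in-phase, which mirrors the HKB prediction that anti-phase coordination loses stability as the ratio b/a drops.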

“The behaviors that gave rise to that distinctive emotional arousal were simple finger movements, not events like facial expressions for example, known to convey emotion,” said Emmanuelle Tognoli, Ph.D., co-author and associate research professor in FAU’s CCSBS. “So the findings are rather startling at first.”

Tognoli is quick to point out that it is not so much about how fanciful the partner appears or how emotionally expressive it is, since fingers have little in the way of tears or laughter. Instead, it is a matter of how well the virtual partner relates its behavior to the human’s: its competence for social coordination, written into its mathematical equations.

The mathematical models that govern the virtual partner’s behavior are grounded in four decades of empirical and theoretical research at FAU led by co-author J.A. Scott Kelso, Ph.D., the Glenwood and Martha Creech Eminent Chair in Science, and founder of FAU’s CCSBS.

Kelso stresses that the key idea behind the Human Dynamic Clamp is the symmetry between human and machine: both are governed by the same laws of coordination dynamics.

“In reality, humans’ interactions with their milieu, including other human beings, are continuous and reciprocal,” said Kelso. “By putting time and reciprocity back in the investigation of emotion and social interaction, the Human Dynamic Clamp affords the opportunity to explore parameter ranges and perturbations that are out of reach of traditional experimental designs. It is a step forward for investigations aimed at understanding complex social behavior.”

The study shows that behavioral interaction and emotion continuously feed into each other, which suggests that movement coordination could make a useful contribution to the rehabilitation of certain disorders. Movement coordination problems are often found in patients with schizophrenia and autism spectrum disorders, who also experience social and emotional dysfunction.

“Artificial Intelligence has been grounded in an algorithmic approach of human cognition. We are now bringing the social and emotional dimensions to the table as well,” said Guillaume Dumas, Ph.D., co-author, and a former post-doctoral member of FAU’s CCSBS who is currently with the Institut Pasteur in Paris.

The researchers anticipate that the virtual partner will soon be developed into the prototype of a cooperative machine that can be used for therapeutic purposes. This type of application might benefit many patients afflicted with social and emotional disorders.

“FAU has nurtured the Center for Complex Systems and Brain Sciences for 30 years, and this work is led by one of our outstanding doctoral students who is advancing our understanding of the orders and disorders that take place in our society and in our brains,” said Daniel C. Flynn, Ph.D., FAU’s vice president for research.

