NERSC Flips Switch on New Flagship Supercomputer

The National Energy Research Scientific Computing (NERSC) Center recently accepted “Edison,” a new flagship supercomputer designed for scientific productivity.

Named in honor of American inventor Thomas Alva Edison, the Cray XC30 will be dedicated in a ceremony at the Department of Energy’s Lawrence Berkeley National Laboratory (Berkeley Lab) on Feb. 5, and scientists are already reporting results. (See related story: “Early Edison Users Deliver Results.”)

About 5,000 researchers, working on 700 projects and running 600 different codes, compute at NERSC, which is operated by Berkeley Lab. They produce an average of 1,700 peer-reviewed publications every year, making NERSC the most productive scientific computing center serving the Department of Energy’s Office of Science.

“We support a very broad range of science, from basic energy research to climate science, from biosciences to discovering new materials, exploring high energy physics and even uncovering the very origins of the universe,” said NERSC Director Sudip Dosanjh.

Edison has a theoretical peak speed of nearly 2.4 petaflop/s (quadrillion floating-point operations per second). While theoretical speeds are impressive, “NERSC’s longstanding approach is to evaluate proposed systems by how well they meet the needs of our diverse community of researchers, so we focus on sustained performance on real applications,” said NERSC Division Deputy for Operations Jeff Broughton, who led the Edison procurement team.

“For us, what’s really important is the scientific productivity of our users,” Dosanjh said. That’s why Edison was configured to handle two kinds of computing equally well: simulation and modeling, and data analysis.

Data Analysis Joins Simulation and Modeling

Traditionally, scientific supercomputers are configured to simulate and model complex phenomena, such as nanomaterials converting electricity into photons of light, climate changing over decades or centuries, or interstellar gases forming into stars and galaxies. Such simulations require many processors running in unison, but not necessarily a lot of memory for each processor.

Data analysis, such as genome sequencing or molecular screening programs that search for promising new materials or drugs, often involves high-throughput computing: running large numbers of loosely coupled simulations simultaneously. Such “ensemble computing” requires more memory per node and has typically been relegated to separate computer clusters. As instruments and experiments deliver ever more data, however, scientists need more computing power to process it, so smaller clusters no longer suffice.

“Facilities throughout the Department of Energy are being inundated with data that researchers don’t have the ability to understand, process or analyze sufficiently,” said Dosanjh. Historically, NERSC was an exporter of data, as scientists ran large-scale simulations and then moved that data to other sites. But with the growth of experimental data coming from other sites, NERSC is now a net importer, taking in a petabyte of data each month in fields such as biosciences, climate and high-energy physics.

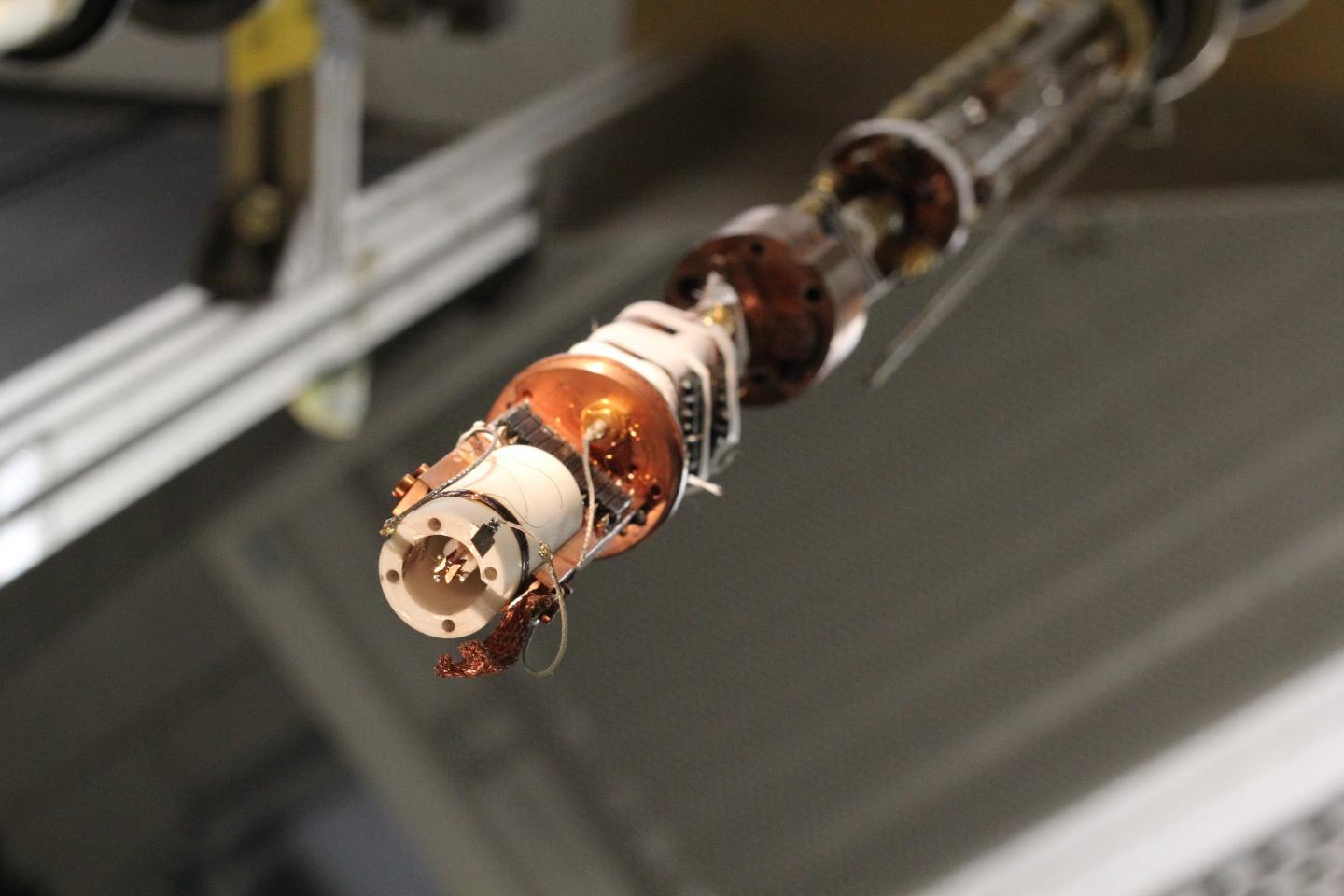

Both types of computing rely heavily on moving data, said Dosanjh. “So Edison has been optimized for that: It has a really high-speed interconnect, it has lots of memory bandwidth, lots of memory per node, and it has very high input/output speeds to the file system and disk system.”

“If you have a computing resource like Edison, one with the flexibility to run different classes of problems, then you can apply the full capacity of your system to the problem at hand, whether that be high-throughput genome sequencing or highly parallel climate simulations,” said Broughton.

Less Time Tweaking Codes, More Time Doing Science

Because Edison does not employ accelerators such as graphics processing units (GPUs), scientists have been able to move their codes from NERSC’s previous flagship system (a Cray XE6 named for computer scientist Grace Hopper) to Edison with little or no change. This was another consideration meant to keep scientists doing science instead of rewriting code.

“We were able to open Edison to all our users shortly after installation for testing, and the system was immediately full,” said Broughton. By the time Edison was accepted and placed into production, scientists had logged millions of processor hours of research into areas as varied as carbon sequestration, nanomaterials, cosmology, and combustion.

And while researchers may not see or appreciate Edison’s advances in energy efficiency, those advances will affect their ability to do science. “In coming years, performance will be more limited by power than anything else, so energy efficiency is critical,” said Dosanjh.
