Research Image

CREDIT: POSTECH

Breast cancer has the highest incidence rate of any cancer among women. Moreover, of the six major cancers, it is the only one whose incidence has increased over the past 20 years.

The chance of survival is higher when breast cancer is detected and treated early. However, the survival rate drops sharply to less than 75% after stage 3, which means early detection through regular medical check-ups is critical for reducing patient mortality. Recently, a research team at POSTECH developed an AI network system for ultrasonography to accurately detect and diagnose breast cancer.

A team of researchers from POSTECH led by Professor Chulhong Kim (Department of Convergence IT Engineering, the Department of Electrical Engineering, and the Department of Mechanical Engineering), and Sampa Misra and Chiho Yoon (Department of Electrical Engineering) has developed a deep learning-based multimodal fusion network for segmentation and classification of breast cancers using B-mode and strain elastography ultrasound images. The findings from the study were published in Bioengineering & Translational Medicine.

Ultrasonography is one of the key medical imaging modalities for evaluating breast lesions. To distinguish benign from malignant lesions, computer-aided diagnosis (CAD) systems have offered radiologists a great deal of help by automatically segmenting and identifying features of lesions.

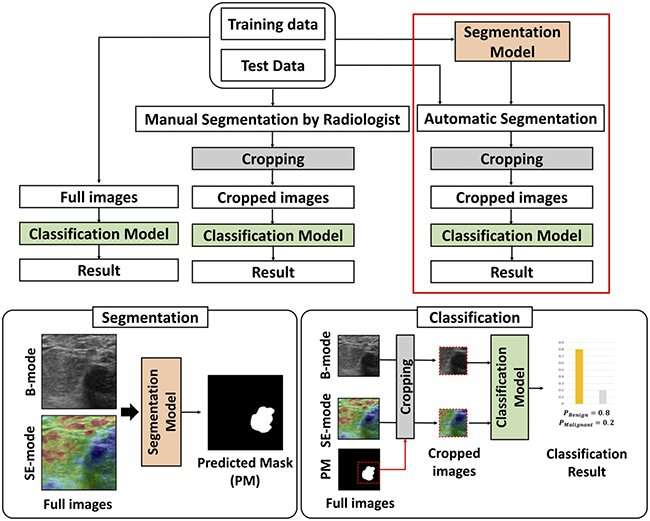

Here, the team presented deep learning (DL)-based methods that segment the lesions and then classify them as benign or malignant, using both B-mode and strain elastography (SE-mode) images. First, the team constructed a ‘weighted multimodal U-Net (W-MM-U-Net)’ model, which uses a weighted skip connection method to assign an optimal weight to each imaging modality when segmenting lesions. They also proposed a ‘multimodal fusion framework (MFF)’ that classifies lesions as benign or malignant from cropped B-mode and SE-mode ultrasound (US) lesion images.
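To make the idea of weighting each modality concrete, here is a minimal sketch in plain Python. It is not the team's W-MM-U-Net: the article does not say how the weights are computed, so the sketch assumes one raw score per modality, normalized with a softmax and used to blend two same-shaped feature maps. The names `softmax`, `weighted_skip`, and `modality_scores` are hypothetical, and the feature maps are toy values.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def weighted_skip(b_mode_map, se_mode_map, modality_scores):
    """Blend two same-shaped 2D feature maps element by element.

    modality_scores holds one raw score per modality (hypothetical
    stand-ins for parameters a network would learn during training).
    """
    w_b, w_se = softmax(modality_scores)
    return [
        [w_b * b + w_se * s for b, s in zip(row_b, row_se)]
        for row_b, row_se in zip(b_mode_map, se_mode_map)
    ]


# Toy 2x2 feature maps; equal scores give each modality a weight of 0.5.
b_mode = [[1.0, 2.0], [3.0, 4.0]]
se_mode = [[3.0, 4.0], [5.0, 6.0]]
blended = weighted_skip(b_mode, se_mode, [0.0, 0.0])
```

With equal scores the blend is a plain average of the two maps. Raising one score shifts the result toward that modality, which is the behavior a learned weight would be expected to provide.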

The MFF consists of an integrated feature network (IFN) and a decision network (DN). Unlike other recent fusion methods, the proposed MFF method can simultaneously learn complementary information from convolutional neural networks (CNNs) trained on B-mode and SE-mode US images. The CNN features are combined using the multimodal EmbraceNet model, and the DN classifies the images using those combined features.
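The fusion step described above can be illustrated with a simplified, EmbraceNet-style sketch. In the published EmbraceNet design, each position of the fused vector takes its value from one modality chosen at random according to per-modality probabilities. The code below shows only that selection step plus a stand-in decision step. It is an assumption-laden illustration, not the team's IFN or DN: the function names, the feature values, and the one-layer logistic classifier are all hypothetical.

```python
import math
import random


def embrace(features, probs, rng):
    """For each feature index, keep the value from one sampled modality.

    features: equal-length vectors, one per modality (assumed to be
    already projected to a common size).
    probs: selection weight for each modality.
    """
    modalities = range(len(features))
    fused = []
    for i in range(len(features[0])):
        k = rng.choices(modalities, weights=probs, k=1)[0]
        fused.append(features[k][i])
    return fused


def decide(fused, weights, bias):
    """Hypothetical one-layer decision step: logistic score, then a label."""
    z = sum(w * x for w, x in zip(weights, fused)) + bias
    p_malignant = 1.0 / (1.0 + math.exp(-z))
    return "malignant" if p_malignant >= 0.5 else "benign"


rng = random.Random(0)  # fixed seed so the sketch is repeatable
b_mode_feat = [0.2, 0.9, 0.4, 0.7]   # made-up B-mode CNN features
se_mode_feat = [0.8, 0.1, 0.6, 0.3]  # made-up SE-mode CNN features
fused = embrace([b_mode_feat, se_mode_feat], [0.5, 0.5], rng)
label = decide(fused, weights=[1.0, 1.0, 1.0, 1.0], bias=-2.0)
```

Because every fused position comes from exactly one modality, a downstream classifier trained this way sees mixtures of both inputs and cannot rely on a single modality alone.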

According to experimental results on clinical data, the method correctly classified seven benign patients as benign in three of the five trials and six malignant patients as malignant in all five trials. These results indicate that the proposed method outperforms conventional single-modal and multimodal methods and could enhance radiologists' classification accuracy for breast cancer detection in US images.

Professor Chulhong Kim explained, “We were able to increase the accuracy of lesion segmentation by determining the importance of each input modal and automatically giving the proper weight.” He added, “We trained each deep learning model and the ensemble model at the same time to have a much better classification performance than the conventional single modal or other multimodal methods.”

Original Article: AI-powered ultrasound imaging that detects breast cancer
