In a world-first, researchers from the GrapheneX-UTS Human-centric Artificial Intelligence Centre at the University of Technology Sydney (UTS) have developed a portable, non-invasive system that can decode silent thoughts and turn them into text.

The technology could aid communication for people who are unable to speak due to illness or injury, including stroke or paralysis. It could also enable seamless communication between humans and machines, such as the operation of a bionic arm or robot.

The study has been selected as the spotlight paper at the NeurIPS conference, a top-tier annual meeting that showcases world-leading research on artificial intelligence and machine learning, to be held in New Orleans on 12 December 2023.

The research was led by Distinguished Professor CT Lin, Director of the GrapheneX-UTS HAI Centre, together with first author Yiqun Duan and fellow PhD candidate Jinzhou Zhou from the UTS Faculty of Engineering and IT.

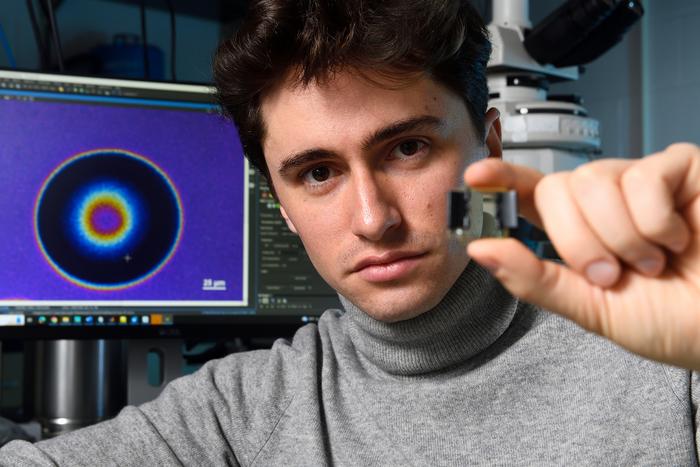

In the study, participants silently read passages of text while wearing a cap that recorded electrical brain activity through their scalp using an electroencephalogram (EEG). A demonstration of the technology can be seen in this video.

An AI model called DeWave, developed by the researchers, segments the EEG wave into distinct units that capture specific characteristics and patterns from the human brain. DeWave translates EEG signals into words and sentences by learning from large quantities of EEG data.

“This research represents a pioneering effort in translating raw EEG waves directly into language, marking a significant breakthrough in the field,” said Distinguished Professor Lin.

“It is the first to incorporate discrete encoding techniques in the brain-to-text translation process, introducing an innovative approach to neural decoding. The integration with large language models is also opening new frontiers in neuroscience and AI,” he said.
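The article does not describe DeWave's internals. As a rough illustration of what "discrete encoding" generally means, the Python sketch below uses plain vector quantization: it cuts a multichannel signal into overlapping windows and replaces each window with the index of its nearest entry in a codebook, turning a continuous wave into a sequence of discrete tokens that a language model could consume. The channel count, window size, step and codebook are all hypothetical, and the codebook here is random rather than learned, so this is not the researchers' method.

```python
import numpy as np

def window_features(eeg: np.ndarray, size: int, step: int) -> np.ndarray:
    """Cut a (channels, samples) array into overlapping windows, one flattened row per window."""
    starts = range(0, eeg.shape[1] - size + 1, step)
    return np.stack([eeg[:, s:s + size].ravel() for s in starts])

def quantize(features: np.ndarray, codebook: np.ndarray) -> np.ndarray:
    """Return, for each feature row, the index of the nearest codebook vector."""
    # Broadcast to (n_windows, n_codes, dim), then sum squared differences over dim.
    dists = ((features[:, None, :] - codebook[None, :, :]) ** 2).sum(axis=-1)
    return dists.argmin(axis=1)

# Toy usage on synthetic noise; a real system would learn the encoder and codebook.
rng = np.random.default_rng(0)
eeg = rng.standard_normal((4, 100))                  # 4 hypothetical channels, 100 samples
feats = window_features(eeg, size=20, step=10)       # 9 windows of 4 * 20 = 80 values
codebook = rng.standard_normal((8, feats.shape[1]))  # hypothetical 8-entry codebook
tokens = quantize(feats, codebook)                   # 9 discrete token ids in [0, 8)
print(tokens)
```

In a trained system the codebook entries would be fitted so that windows with similar brain activity share a token, which is one way semantically similar words could end up with similar codes.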

Previous technology to translate brain signals to language has either required surgery to implant electrodes in the brain, such as Elon Musk’s Neuralink, or scanning in an MRI machine, which is large, expensive, and difficult to use in daily life.

These methods also struggle to transform brain signals into word-level segments without additional aids such as eye-tracking, which restricts the practical application of these systems. The new technology can be used either with or without eye-tracking.

The UTS research was carried out with 29 participants. Because EEG waves differ between individuals, this means the system is likely to be more robust and adaptable than previous decoding technology that has only been tested on one or two individuals.

The use of EEG signals received through a cap, rather than from electrodes implanted in the brain, means that the signal is noisier. In terms of EEG translation, however, the study reported state-of-the-art performance, surpassing previous benchmarks.

“The model is more adept at matching verbs than nouns. However, when it comes to nouns, we saw a tendency towards synonymous pairs rather than precise translations, such as ‘the man’ instead of ‘the author’,” said Duan.

“We think this is because when the brain processes these words, semantically similar words might produce similar brain wave patterns. Despite the challenges, our model yields meaningful results, aligning keywords and forming similar sentence structures,” he said.

The translation accuracy score is currently around 40% on BLEU-1, that is, a BLEU-1 score of about 0.4. The BLEU score is a number between zero and one that measures the similarity of machine-translated text to a set of high-quality reference translations; BLEU-1 counts matches of single words. The researchers hope to see this improve to a level comparable to traditional language translation or speech recognition programs, which is closer to 90%.
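To make the 0.4 figure more concrete, here is a minimal sentence-level BLEU-1 sketch in Python: clipped unigram precision multiplied by a brevity penalty. It assumes a single reference and simple whitespace tokenization. Published BLEU implementations aggregate counts over a whole test set and may use several references and their own tokenizers, so this is not the evaluation code used in the study.

```python
import math
from collections import Counter

def bleu1(candidate: str, reference: str) -> float:
    """Sentence-level BLEU-1 against one reference (whitespace tokens, an assumption)."""
    cand = candidate.split()
    ref = reference.split()
    if not cand:
        return 0.0
    ref_counts = Counter(ref)
    # Clip each candidate word's count at how often it occurs in the reference.
    matches = sum(min(n, ref_counts[w]) for w, n in Counter(cand).items())
    precision = matches / len(cand)
    # Brevity penalty: 1 if the candidate is longer than the reference, else exp(1 - r/c).
    bp = 1.0 if len(cand) > len(ref) else math.exp(1 - len(ref) / len(cand))
    return bp * precision

print(bleu1("the man wrote the book", "the author wrote the book"))  # → 0.8
```

The example pair mirrors the 'the man' versus 'the author' substitution Duan describes: a single near-synonym swap costs one of five word matches, so a translation can preserve the sentence's meaning and still lose BLEU-1 points.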

The research follows on from previous brain-computer interface technology developed by UTS in association with the Australian Defence Force that uses brainwaves to command a quadruped robot, which is demonstrated in this ADF video.

Original Article: Portable, non-invasive, mind-reading AI turns thoughts into text
