via Caltech

Brain–machine interfaces (BMIs) are devices that read brain activity and translate it into commands for an electronic device such as a prosthetic arm or computer cursor. They promise to enable people with paralysis to move prosthetic devices with their thoughts.

Many BMIs require invasive surgeries to implant electrodes into the brain in order to read neural activity. However, in 2021, Caltech researchers developed a way to read brain activity using functional ultrasound (fUS), a much less invasive technique.

Now, a new study provides proof of concept that fUS technology can be the basis for an “online” BMI: one that reads brain activity, deciphers its meaning with decoders trained using machine learning, and uses the result to control a computer that accurately predicts movement with minimal delay.

The study was conducted in the Caltech laboratories of Richard Andersen, James G. Boswell Professor of Neuroscience and director and leadership chair of the T&C Chen Brain–Machine Interface Center; and Mikhail Shapiro, Max Delbrück Professor of Chemical Engineering and Medical Engineering and Howard Hughes Medical Institute Investigator. The work was a collaboration with the laboratory of Mickael Tanter, director of physics for medicine at INSERM in Paris, France.

“Functional ultrasound is a completely new modality to add to the toolbox of brain–machine interfaces that can assist people with paralysis,” says Andersen. “It offers attractive options of being less invasive than brain implants and does not require constant recalibration. This technology was developed as a truly collaborative effort that could not be accomplished by one lab alone.”

“In general, all tools for measuring brain activity have benefits and drawbacks,” says Sumner Norman, former senior postdoctoral scholar research associate at Caltech and a co-first author on the study. “While electrodes can very precisely measure the activity of single neurons, they require implantation into the brain itself and are difficult to scale to more than a few small brain regions. Non-invasive techniques also come with tradeoffs. Functional magnetic resonance imaging [fMRI] provides whole-brain access but is restricted by limited sensitivity and resolution. Portable methods, like electroencephalography [EEG], are hampered by poor signal quality and an inability to localize deep brain function.”

Ultrasound imaging works by emitting pulses of high-frequency sound and measuring how those sound vibrations echo through a substance, such as the various tissues of the human body. Sound waves travel at different speeds through these tissue types and reflect at the boundaries between them. This technique is commonly used to take images of a fetus in utero, and for other diagnostic imaging.

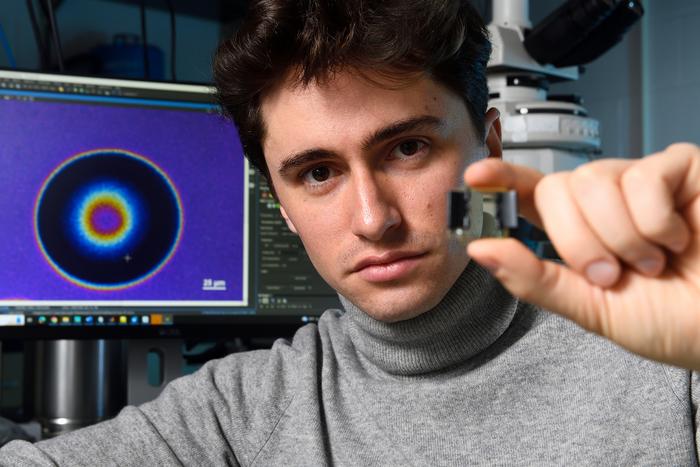

Because the skull itself is not permeable to sound waves, using ultrasound for brain imaging requires a transparent “window” to be installed into the skull. “Importantly, ultrasound technology does not need to be implanted into the brain itself,” says Whitney Griggs (PhD ’23), a co-first author on the study. “This significantly reduces the chance for infection and leaves the brain tissue and its protective dura perfectly intact.”

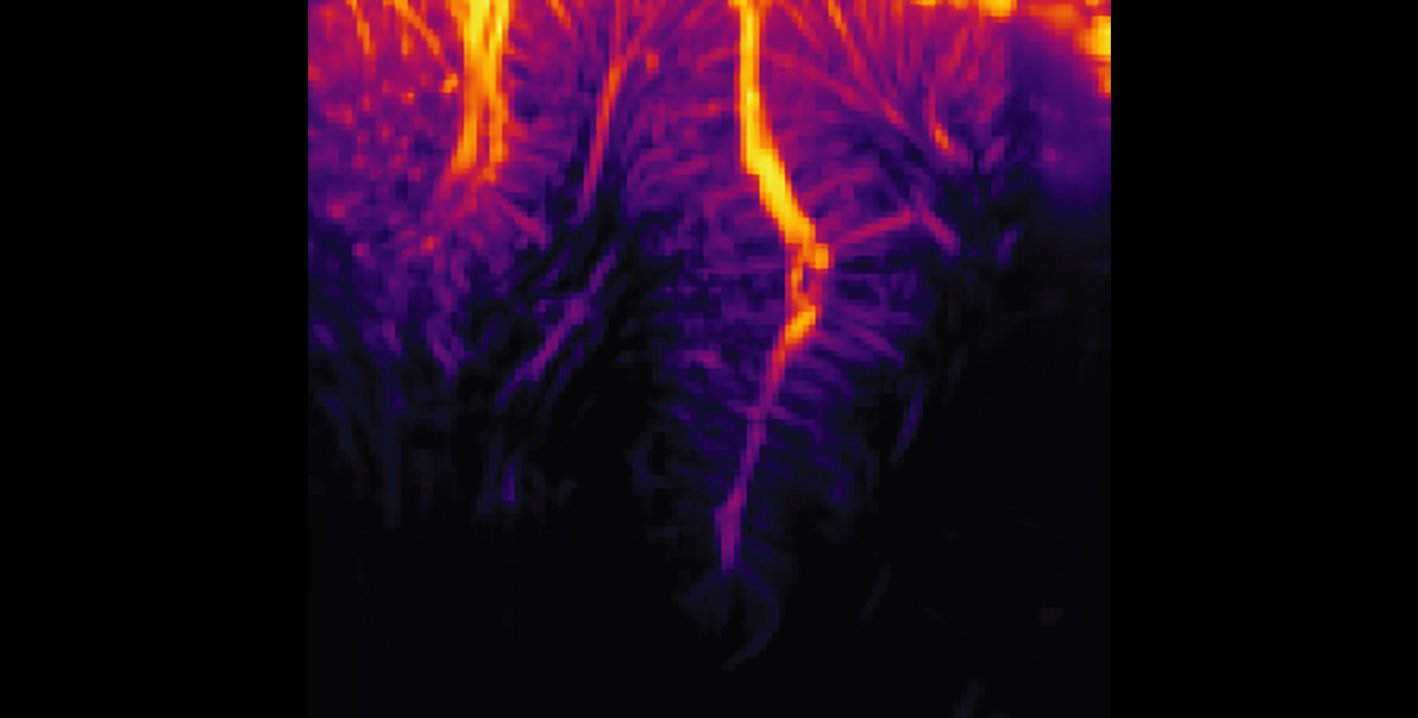

“As neurons’ activity changes, so does their use of metabolic resources like oxygen,” says Norman. “Those resources are resupplied through the blood stream, which is the key to functional ultrasound.” In this study, the researchers used ultrasound to measure changes in blood flow to specific brain regions. In the same way that the sound of an ambulance siren changes in pitch as it moves closer and then farther away from you, red blood cells will increase the pitch of the reflected ultrasound waves as they approach the source and decrease the pitch as they flow away. Measuring this Doppler-effect phenomenon allowed the researchers to record tiny changes in the brain’s blood flow down to spatial regions just 100 micrometers wide, about the width of a human hair. This enabled them to simultaneously measure the activity of tiny neural populations, some as small as just 60 neurons, widely throughout the brain.
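The pitch change described above can be made concrete with the standard pulse-echo Doppler relation, in which the frequency shift is proportional to the speed of the blood cells along the beam. The following is a minimal sketch, not the study's processing pipeline: the function name, the 15 MHz transmit frequency, the 10 mm/s blood speed, and the 1540 m/s speed of sound in soft tissue are illustrative assumptions that do not come from the article.

```python
import math

# Conventional textbook value for soft tissue; an assumption, not from the article.
SPEED_OF_SOUND_TISSUE_M_S = 1540.0


def doppler_shift_hz(transmit_hz, velocity_m_s, angle_deg=0.0,
                     c=SPEED_OF_SOUND_TISSUE_M_S):
    """Return the pulse-echo Doppler shift in Hz.

    velocity_m_s is positive when the scatterers (red blood cells) move
    toward the probe and negative when they move away. angle_deg is the
    angle between the flow direction and the ultrasound beam.
    """
    along_beam = velocity_m_s * math.cos(math.radians(angle_deg))
    # The factor of 2 accounts for the round trip: the moving cell first
    # receives a shifted wave, then re-emits it as a moving source.
    return 2.0 * transmit_hz * along_beam / c


# Hypothetical numbers: a 15 MHz probe and blood moving at 10 mm/s.
approaching = doppler_shift_hz(15e6, 0.010)   # positive: pitch rises
receding = doppler_shift_hz(15e6, -0.010)     # negative: pitch falls
print(f"approaching: {approaching:+.1f} Hz, receding: {receding:+.1f} Hz")
```

With these assumed values the shift is a few hundred hertz on a carrier of millions of hertz, which illustrates why detecting small changes in blood flow calls for sensitive measurement.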

The researchers used functional ultrasound to measure brain activity from the posterior parietal cortex (PPC) of non-human primates, a region that governs the planning of movements and contributes to their execution. The region has been studied by the Andersen lab for decades using other techniques. The animals were taught two tasks, requiring them to either plan to move their hand to direct a cursor on a screen, or plan to move their eyes to look at a specific part of the screen. They only needed to think about performing the task, not actually move their eyes or hands, as the BMI read the planning activity in their PPC.

“I remember how impressive it was when this kind of predictive decoding worked with electrodes two decades ago, and it’s amazing now to see it work with a much less invasive method like ultrasound,” says Shapiro.

The ultrasound data were sent in real time to a decoder (previously trained with machine learning to interpret those data), which then generated control signals to move a cursor to where the animal intended it to go. The BMI did this successfully for eight radial targets, with mean errors of less than 40 degrees.
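To give a feel for what a mean error in degrees means for eight radial targets, here is a small sketch of one common way to compute it. The article does not say how the researchers calculated their error, so the definition below (the smallest angle between decoded and intended directions, averaged over trials) is an assumption, and the decoded directions are made-up illustrative values, not data from the study.

```python
def angular_error_deg(decoded_deg, intended_deg):
    """Smallest absolute angle between two directions, in [0, 180] degrees."""
    diff = abs(decoded_deg - intended_deg) % 360.0
    return min(diff, 360.0 - diff)


def mean_angular_error_deg(decoded, intended):
    """Average angular error over paired trials."""
    errors = [angular_error_deg(d, t) for d, t in zip(decoded, intended)]
    return sum(errors) / len(errors)


# Eight radial targets, assumed here to be evenly spaced every 45 degrees.
TARGETS_DEG = [i * 45.0 for i in range(8)]

# Hypothetical session: one trial per target, two of them decoded as a neighbor.
intended = TARGETS_DEG
decoded = [0.0, 45.0, 135.0, 135.0, 180.0, 270.0, 270.0, 315.0]
print(mean_angular_error_deg(decoded, intended))

# Reference point: a decoder that guesses uniformly among the eight targets.
chance = sum(angular_error_deg(t, 0.0) for t in TARGETS_DEG) / len(TARGETS_DEG)
print(chance)
```

Under this definition, uniform guessing among eight evenly spaced targets averages 90 degrees, so a mean error below 40 degrees is well under that chance level.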

“It’s significant that the technique does not require the BMI to be recalibrated each day, unlike other BMIs,” says Griggs. “As an analogy, imagine needing to recalibrate your computer mouse for up to 15 minutes each day before use.”

Next, the team plans to study how BMIs based on ultrasound technology perform in humans, and to further develop the fUS technology to enable three-dimensional imaging for improved accuracy.

Original Article: Ultrasound Enables Less-Invasive Brain–Machine Interfaces

More from: California Institute of Technology | Howard Hughes Medical Institute | INSERM
