From left are Professor Jiyun Kim and Jin Pyo Lee in the Department of Material Science and Engineering at UNIST.

CREDIT: UNIST

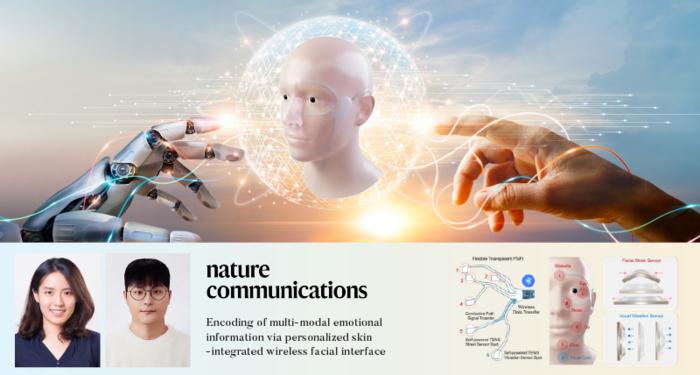

A technology that can recognize human emotions in real time has been developed by a research team led by Professor Jiyun Kim in the Department of Material Science and Engineering at UNIST. The technology could support a range of applications, including next-generation wearable systems that provide services based on emotions.

Understanding and accurately extracting emotional information has long been a challenge because human affects such as emotions, moods, and feelings are abstract and ambiguous. To address this, the research team has developed a multi-modal human emotion recognition system that combines verbal and non-verbal expression data to make fuller use of the available emotional information.

At the core of this system is the personalized skin-integrated facial interface (PSiFI) system, which is self-powered, facile, stretchable, and transparent. It features a first-of-its-kind bidirectional triboelectric strain and vibration sensor that enables the simultaneous sensing and integration of verbal and non-verbal expression data. The system is fully integrated with a data processing circuit for wireless data transfer, enabling real-time emotion recognition.

Using machine learning algorithms, the technology performs accurate, real-time human emotion recognition, even when individuals are wearing masks. The system has also been successfully applied in a digital concierge application within a virtual reality (VR) environment.
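To make the idea of combining the two data streams concrete, here is a minimal Python sketch. It is not the team's method: the two summary features per channel, the nearest-centroid classifier, every function name, and all sample values are assumptions chosen only to illustrate feature-level fusion of a strain signal (facial muscle deformation) and a vibration signal (voice).

```python
import math
from statistics import fmean


def features(signal):
    """Two simple summary features for one sensor channel (illustrative choice)."""
    mean_abs = fmean(abs(x) for x in signal)
    # Count sign changes as a crude proxy for how quickly the signal oscillates.
    crossings = sum(1 for a, b in zip(signal, signal[1:]) if a * b < 0)
    return [mean_abs, crossings / (len(signal) - 1)]


def fuse(strain, vibration):
    """Feature-level fusion: concatenate the features of both modalities."""
    return features(strain) + features(vibration)


def train_centroids(samples):
    """samples: list of (fused_vector, label). Returns label -> mean vector."""
    grouped = {}
    for vec, label in samples:
        grouped.setdefault(label, []).append(vec)
    return {
        label: [fmean(column) for column in zip(*vecs)]
        for label, vecs in grouped.items()
    }


def classify(centroids, vec):
    """Return the label whose centroid is nearest in Euclidean distance."""
    return min(centroids, key=lambda label: math.dist(centroids[label], vec))


# Hypothetical, hand-made signals; real sensor data would look different.
training = [
    (fuse([0.8, 0.9, 1.0, 0.9, 0.8], [0.9, -0.8, 0.9, -0.9, 0.8]), "happy"),
    (fuse([0.1, 0.1, 0.2, 0.1, 0.1], [0.2, 0.1, -0.1, -0.2, 0.1]), "neutral"),
]
centroids = train_centroids(training)

query = fuse([0.7, 0.9, 0.9, 0.8, 0.7], [0.8, -0.7, 0.9, -0.8, 0.7])
print(classify(centroids, query))
```

A nearest-centroid classifier is used here only because it can be fitted from one example per label; the article does not say which learning algorithm the team used.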

The technology is based on the phenomenon of “friction charging” (triboelectric charging), in which two materials acquire opposite charges, one positive and one negative, when they rub against each other and separate. Notably, the system is self-powered, requiring no external power source or complex measuring devices for data recognition.

Professor Kim commented, “Based on these technologies, we have developed a skin-integrated face interface (PSiFI) system that can be customized for individuals.” The team used a semi-curing technique to manufacture a transparent conductor for the friction charging electrodes. Additionally, a personalized mask that combines flexibility, elasticity, and transparency was created using a multi-angle imaging technique.

The research team successfully integrated the detection of facial muscle deformation and vocal cord vibrations, enabling real-time emotion recognition. The system’s capabilities were demonstrated in a virtual reality “digital concierge” application, where customized services based on users’ emotions were provided.

Jin Pyo Lee, the first author of the study, stated, “With this developed system, it is possible to implement real-time emotion recognition with just a few learning steps and without complex measurement equipment. This opens up possibilities for portable emotion recognition devices and next-generation emotion-based digital platform services in the future.”

The research team conducted real-time emotion recognition experiments, collecting multimodal data such as facial muscle deformation and voice. The system achieved high emotion recognition accuracy with minimal training. Because it is wireless and customizable, it is also convenient to wear.

Furthermore, the team applied the system to VR environments, using it as a “digital concierge” in various settings, including smart homes, private movie theaters, and smart offices. Because the system can identify an individual's emotions in different situations, it can provide personalized recommendations for music, movies, and books.
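As a simple illustration of how a recognized emotion might drive such a service, the Python sketch below maps a setting and an emotion label to a suggestion. The table entries, labels, and the `recommend` function are hypothetical and do not come from the study.

```python
# Hypothetical lookup table: (setting, emotion) -> suggestion.
RECOMMENDATIONS = {
    ("smart home", "sad"): "suggest an upbeat music playlist",
    ("private movie theater", "happy"): "suggest a comedy film",
    ("smart office", "stressed"): "suggest a short break and a book",
}

# Fallback used when no entry matches the recognized emotion.
DEFAULT_SUGGESTION = "no suggestion"


def recommend(setting, emotion):
    """Return a suggestion for the given setting and recognized emotion."""
    return RECOMMENDATIONS.get((setting, emotion), DEFAULT_SUGGESTION)


print(recommend("smart home", "sad"))
print(recommend("smart office", "happy"))
```

A real concierge would presumably draw on user history and richer context; a fixed table is used here only to keep the example self-contained.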

Professor Kim emphasized, “For effective interaction between humans and machines, human-machine interface (HMI) devices must be capable of collecting diverse data types and handling complex integrated information. This study exemplifies the potential of using emotions, which are complex forms of human information, in next-generation wearable systems.”

Original Article: World’s first real-time wearable human emotion recognition technology developed!

