AI-generated (DALL-E 3) conceptual image depicting light waves passing through a physical system.

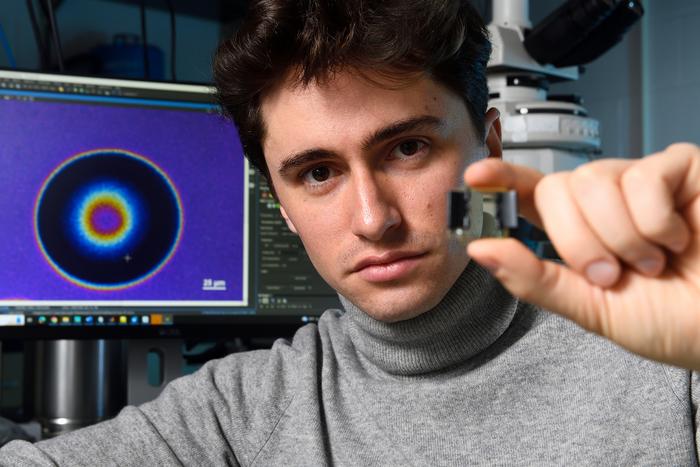

Credit: LWE/EPFL

EPFL researchers have developed an algorithm to train an analog neural network just as accurately as a digital one, enabling the development of more efficient alternatives to power-hungry deep learning hardware.

With their ability to process vast amounts of data through algorithmic ‘learning’ rather than traditional programming, deep neural networks like ChatGPT often seem to have limitless potential. But as the scope and impact of these systems have grown, so have their size, complexity, and energy consumption, the last of which is significant enough to raise concerns about contributions to global carbon emissions.

And while we often think of technological advancement in terms of shifting from analog to digital, researchers are now looking for answers to this problem in physical alternatives to digital deep neural networks. One such researcher is Romain Fleury of EPFL’s Laboratory of Wave Engineering in the School of Engineering. In a paper published in Science, he and his colleagues describe an algorithm for training physical systems that shows improved speed, enhanced robustness, and reduced power consumption compared to other methods.

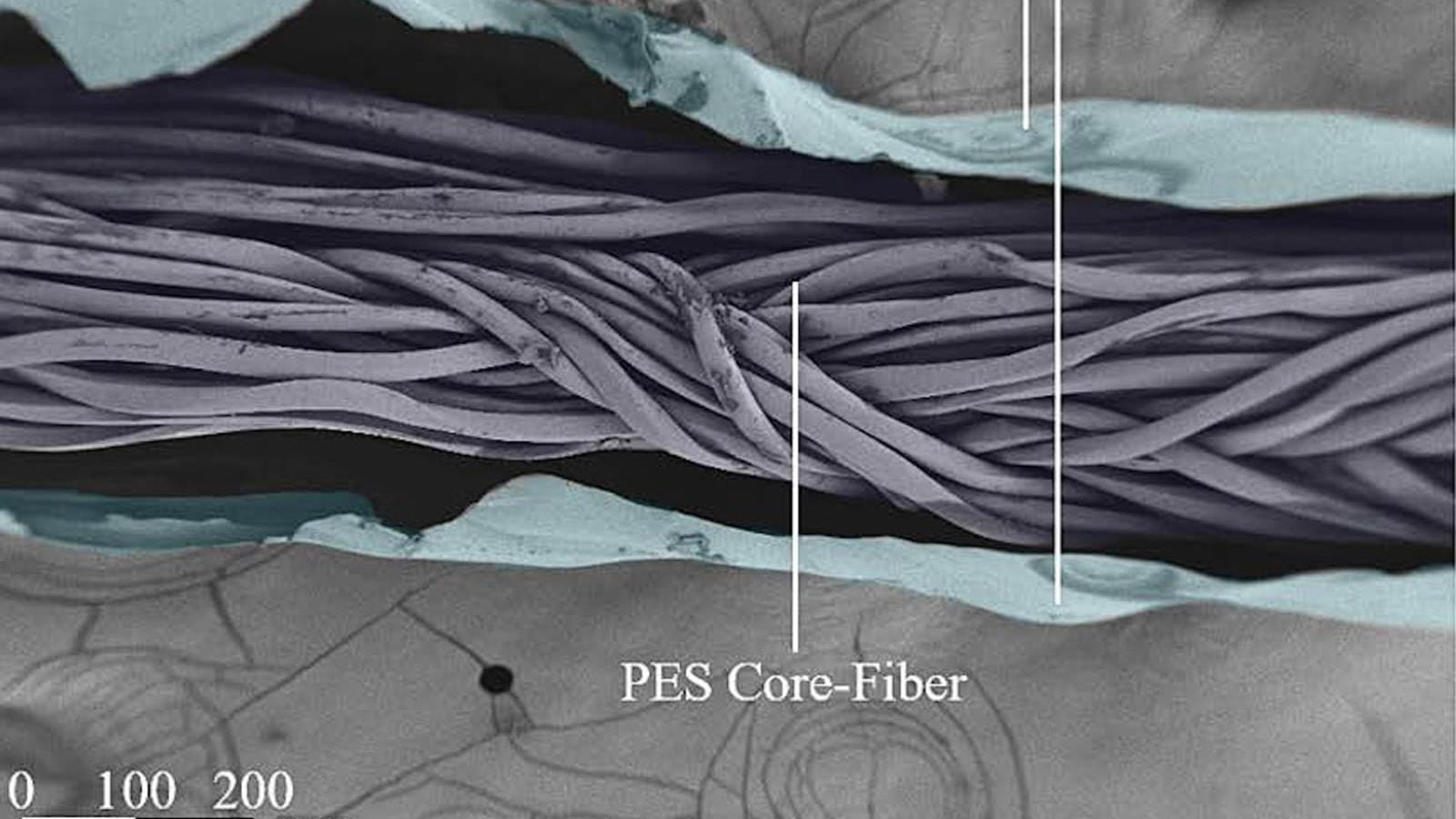

“We successfully tested our training algorithm on three wave-based physical systems that use sound waves, light waves, and microwaves to carry information, rather than electrons. But our versatile approach can be used to train any physical system,” says first author and LWE researcher Ali Momeni.

A “more biologically plausible” approach

Neural network training refers to helping systems learn to generate optimal values of parameters for a task like image or speech recognition. It traditionally involves two steps: a forward pass, where data is sent through the network and an error function is calculated based on the output; and a backward pass (also known as backpropagation, or BP), where a gradient of the error function with respect to all network parameters is calculated.

Over repeated iterations, the system updates itself based on these two calculations to return increasingly accurate values. The problem? In addition to being very energy-intensive, BP is poorly suited to physical systems. In fact, training physical systems usually requires a digital twin for the BP step, which is inefficient and carries the risk of a reality-simulation mismatch.
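To make the two steps concrete, here is a minimal sketch of conventional BP-based training on a tiny digital network. It is only an illustration: the network shape, the `tanh` activation, the squared-error function, the learning rate, and all function names are assumptions chosen for brevity, not details from the paper.

```python
import math
import random

def init_params(n_in=2, n_hidden=3, seed=0):
    """Random weights for a toy network (sizes are arbitrary assumptions)."""
    rng = random.Random(seed)
    w1 = [[rng.uniform(-1, 1) for _ in range(n_in)] for _ in range(n_hidden)]
    w2 = [rng.uniform(-1, 1) for _ in range(n_hidden)]
    return w1, w2

def forward(params, x):
    """Forward pass: input -> tanh hidden layer -> linear output."""
    w1, w2 = params
    hidden = [math.tanh(sum(w * xi for w, xi in zip(row, x))) for row in w1]
    output = sum(w * h for w, h in zip(w2, hidden))
    return hidden, output

def loss_fn(params, x, target):
    """Error function: half the squared difference between output and target."""
    _, output = forward(params, x)
    return 0.5 * (output - target) ** 2

def backward(params, x, target):
    """Backward pass: gradient of the error with respect to every weight."""
    w1, w2 = params
    hidden, output = forward(params, x)
    d_output = output - target  # derivative of the error w.r.t. the output
    grad_w2 = [d_output * h for h in hidden]
    # Send the error back through the output weights and the tanh derivative.
    d_hidden = [d_output * w * (1.0 - h * h) for w, h in zip(w2, hidden)]
    grad_w1 = [[dh * xi for xi in x] for dh in d_hidden]
    return grad_w1, grad_w2

def train_step(params, x, target, lr=0.05):
    """One iteration: forward pass, backward pass, gradient-descent update."""
    w1, w2 = params
    grad_w1, grad_w2 = backward(params, x, target)
    new_w1 = [[w - lr * g for w, g in zip(row, g_row)]
              for row, g_row in zip(w1, grad_w1)]
    new_w2 = [w - lr * g for w, g in zip(w2, grad_w2)]
    return new_w1, new_w2

if __name__ == "__main__":
    params = init_params()
    x, target = [1.0, -1.0], 0.5
    for step in range(5):
        print(step, loss_fn(params, x, target))
        params = train_step(params, x, target)
```

Note that `backward` needs exact knowledge of every weight and activation function in the network, which is what a physical system usually cannot provide without a digital model of itself.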

The scientists’ idea was to replace the BP step with a second forward pass through the physical system to update each network layer locally. In addition to decreasing power use and eliminating the need for a digital twin, this method is closer to how the brain is thought to learn.

“The structure of neural networks is inspired by the brain, but it is unlikely that the brain learns via BP,” explains Momeni. “The idea here is that if we train each physical layer locally, we can use our actual physical system instead of first building a digital model of it. We have therefore developed an approach that is more biologically plausible.”
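The idea of training each layer locally with two forward passes can be illustrated with a small software sketch. This is not the PhyLL algorithm itself, whose details are not given here. It is a generic local-learning rule of the forward-forward type, and everything in it is an assumption made for illustration: the "goodness" measure (sum of squared activations), the threshold, the positive/negative example pairs, and the function `layer_forward`, which stands in for whatever transformation a physical layer would perform.

```python
import math
import random

def init_layers(sizes, seed=0):
    """Positive random weights for each layer (toy initialisation, an assumption)."""
    rng = random.Random(seed)
    return [[[rng.uniform(0.1, 1.0) for _ in range(n_in)] for _ in range(n_out)]
            for n_in, n_out in zip(sizes, sizes[1:])]

def layer_forward(weights, x):
    """Stand-in for one layer: linear map followed by ReLU."""
    return [max(0.0, sum(w * xi for w, xi in zip(row, x))) for row in weights]

def goodness(activations):
    """Local objective: sum of squared activations of a single layer."""
    return sum(a * a for a in activations)

def normalize(v):
    """Rescale to unit length so the next layer cannot reuse this layer's goodness."""
    norm = math.sqrt(sum(a * a for a in v)) or 1.0
    return [a / norm for a in v]

def local_update(weights, x, is_positive, threshold=1.0, lr=0.05):
    """Update one layer using only its own input and output (no backward pass)."""
    acts = layer_forward(weights, x)
    # Probability this layer assigns to "the input is a positive example".
    p = 1.0 / (1.0 + math.exp(-(goodness(acts) - threshold)))
    # Derivative of the logistic loss with respect to the goodness.
    d_g = p - 1.0 if is_positive else p
    # d(goodness)/d(w_ij) = 2 * a_i * x_j; inactive ReLU units have a_i = 0.
    return [[w - lr * d_g * 2.0 * a * xi for w, xi in zip(row, x)]
            for row, a in zip(weights, acts)]

def train(layers, positives, negatives, epochs=100):
    """Two forward passes per example pair; each layer learns on its own."""
    for _ in range(epochs):
        for x_pos, x_neg in zip(positives, negatives):
            h_pos, h_neg = x_pos, x_neg
            for i in range(len(layers)):
                layers[i] = local_update(layers[i], h_pos, True)   # first pass
                layers[i] = local_update(layers[i], h_neg, False)  # second pass
                h_pos = normalize(layer_forward(layers[i], h_pos))
                h_neg = normalize(layer_forward(layers[i], h_neg))
    return layers

if __name__ == "__main__":
    layers = train(init_layers([2, 4, 4]), [[1.0, 0.0]], [[0.0, 1.0]])
    for name, x in (("positive", [1.0, 0.0]), ("negative", [0.0, 1.0])):
        print(name, goodness(layer_forward(layers[0], x)))
```

No error signal travels backward between layers in this sketch: each update depends only on what a layer receives and what it emits, both of which could in principle be measured on real hardware.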

The EPFL researchers, with Philipp del Hougne of CNRS IETR and Babak Rahmani of Microsoft Research, used their physical local learning algorithm (PhyLL) to train experimental acoustic and microwave systems and a modeled optical system to classify data like vowel sounds and images. As well as achieving accuracy comparable to BP-based training, the method proved more robust and adaptable than the state of the art, even in systems exposed to unpredictable external perturbations.

An analog future?

While the LWE’s approach is the first BP-free training of deep physical neural networks, some digital updates of the parameters are still required. “It’s a hybrid training approach, but our aim is to decrease digital computation as much as possible,” Momeni says.

The researchers now hope to implement their algorithm on a small-scale optical system, with the ultimate goal of increasing network scalability.

“In our experiments, we used neural networks with up to 10 layers, but would it still work with 100 layers with billions of parameters? This is the next step, and will require overcoming technical limitations of physical systems.”

Original Article: Training algorithm breaks barriers to deep physical neural networks
