via Penn State

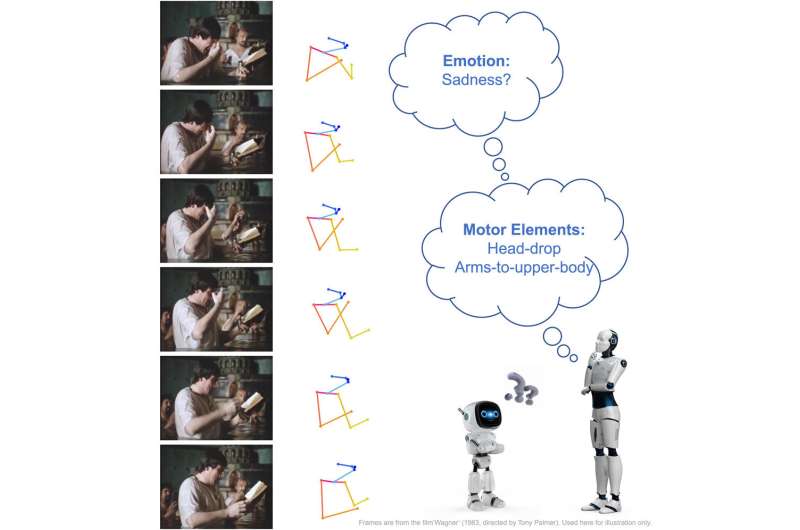

An individual may bring their hands to their face when feeling sad or jump into the air when feeling happy. According to a team led by Penn State researchers, human body movements convey emotions, and this plays a crucial role in everyday communication. Combining computing, psychology and performing arts, the researchers developed an annotated human movement dataset that may improve the ability of artificial intelligence to recognize the emotions expressed through body language.

The work was led by James Wang, distinguished professor in the College of Information Sciences and Technology (IST), and carried out primarily by Chenyan Wu, a graduating doctoral student in Wang’s group. It was published today (Oct. 13) in the print edition of Patterns and featured on the journal’s cover.

“People often move using specific motor patterns to convey emotions, and those body movements carry important information about a person’s emotions or mental state,” Wang said. “By describing specific movements common to humans using their foundational patterns, known as motor elements, we can establish the relationship between these motor elements and bodily expressed emotion.”

According to Wang, augmenting machines’ understanding of bodily expressed emotion may help enhance communication between assistive robots and children or elderly users; provide psychiatric professionals with quantitative diagnostic and prognostic assistance; and bolster safety by preventing mishaps in human-machine interactions.

“In this work, we introduced a novel paradigm for bodily expressed emotion understanding that incorporates motor element analysis,” Wang said. “Our approach leverages deep neural networks — a type of artificial intelligence — to recognize motor elements, which are subsequently used as intermediate features for emotion recognition.”
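Wang describes a two-stage flow: a network first recognizes motor elements, then uses them as intermediate features to recognize emotion. The pure-Python sketch below illustrates only that data flow, under stated assumptions: the motor-element and emotion names, the feature size, and the single dense layer per stage are hypothetical placeholders, and the weights are random rather than trained, so the numbers it prints carry no meaning. It is not the authors’ deep neural network.

```python
import math
import random

# HYPOTHETICAL label sets for illustration only; not the published LMA or emotion categories.
MOTOR_ELEMENTS = ["sudden", "sustained", "rising", "sinking"]
EMOTIONS = ["happy", "sad", "angry"]


def sigmoid(x):
    # Numerically stable logistic function.
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)


def softmax(logits):
    # Subtract the max before exponentiating for numerical stability.
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


class Linear:
    """A dense layer with random (untrained) weights; stands in for a learned layer."""

    def __init__(self, n_in, n_out, rng):
        self.weights = [[rng.uniform(-0.5, 0.5) for _ in range(n_in)] for _ in range(n_out)]
        self.bias = [0.0] * n_out

    def __call__(self, x):
        return [sum(w * xi for w, xi in zip(row, x)) + b
                for row, b in zip(self.weights, self.bias)]


class TwoStageEmotionModel:
    """Stage 1 maps movement features to motor-element probabilities;
    stage 2 maps those probabilities (the intermediate features) to emotions."""

    def __init__(self, n_features, seed=0):
        rng = random.Random(seed)
        self.element_head = Linear(n_features, len(MOTOR_ELEMENTS), rng)
        self.emotion_head = Linear(len(MOTOR_ELEMENTS), len(EMOTIONS), rng)

    def predict(self, movement_features):
        # Independent sigmoids: a clip may show several motor elements at once (assumption).
        element_probs = [sigmoid(z) for z in self.element_head(movement_features)]
        # Softmax: a single distribution over emotion classes (assumption).
        emotion_probs = softmax(self.emotion_head(element_probs))
        return dict(zip(MOTOR_ELEMENTS, element_probs)), dict(zip(EMOTIONS, emotion_probs))


# Hypothetical 5-dimensional movement feature vector for one clip.
model = TwoStageEmotionModel(n_features=5, seed=42)
elements, emotions = model.predict([0.2, -1.0, 0.7, 0.1, 0.5])
print(elements)
print(emotions)
```

Routing the emotion prediction through named motor-element scores is what makes them “intermediate features” in this sketch; in practice both stages would be learned from annotated clips rather than initialized at random.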

The team created a dataset of body motor elements, the movement patterns through which the body indicates emotion, using 1,600 human video clips. Each video clip was annotated using Laban Movement Analysis (LMA), a method and language for describing, visualizing, interpreting and documenting human movement.

Wu then designed a dual-branch, dual-task movement analysis network capable of using the labeled dataset to produce predictions for both bodily expressed emotion and LMA labels for new images or videos.

“Emotion and LMA element labels are related, and the LMA labels are easier for deep neural networks to learn,” Wu said.
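Wu’s point that the two label types are related suggests training both branches jointly. A common multi-task recipe, sketched here as an assumption rather than the published objective, sums one loss per task: cross-entropy for a single-label emotion prediction plus a weight `lmbda` times binary cross-entropy over independent LMA element labels. The function names, the label encoding, and `lmbda` are all hypothetical.

```python
import math

EPS = 1e-12  # guards against log(0)


def cross_entropy(probs, target_index):
    """Single-label emotion loss: negative log-probability of the true class."""
    return -math.log(max(probs[target_index], EPS))


def binary_cross_entropy(probs, targets):
    """Multi-label LMA loss (assumed encoding): mean of per-element BCE terms."""
    total = 0.0
    for p, t in zip(probs, targets):
        p = min(max(p, EPS), 1.0 - EPS)  # clamp away from 0 and 1
        total += -(t * math.log(p) + (1 - t) * math.log(1.0 - p))
    return total / len(probs)


def dual_task_loss(emotion_probs, emotion_target, lma_probs, lma_targets, lmbda=1.0):
    """Hypothetical joint objective: emotion loss + lmbda * LMA loss."""
    return (cross_entropy(emotion_probs, emotion_target)
            + lmbda * binary_cross_entropy(lma_probs, lma_targets))


# One clip: true emotion is class 0; LMA element 0 present, element 1 absent.
good = dual_task_loss([0.7, 0.2, 0.1], 0, [0.9, 0.1], [1, 0], lmbda=0.5)
bad = dual_task_loss([0.1, 0.2, 0.7], 0, [0.1, 0.9], [1, 0], lmbda=0.5)
print(good, bad)
```

In this sketch, `lmbda` is a tuning knob: raising it makes the LMA branch, whose labels Wu describes as easier to learn, contribute more to the training signal.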

According to Wang, using LMA lets the team study motor elements and emotions together while producing a “high-precision” dataset that supports effective learning of human movement and emotional expression.

“Incorporating LMA features has effectively enhanced body-expressed emotion understanding,” Wang said. “Extensive experiments using real-world video data revealed that our approach significantly outperformed baselines that considered only rudimentary body movement, showing promise for further advancements in the future.”

Original Article: Human body movements may enable automated emotion recognition, researchers say
