via Georgia Institute of Technology

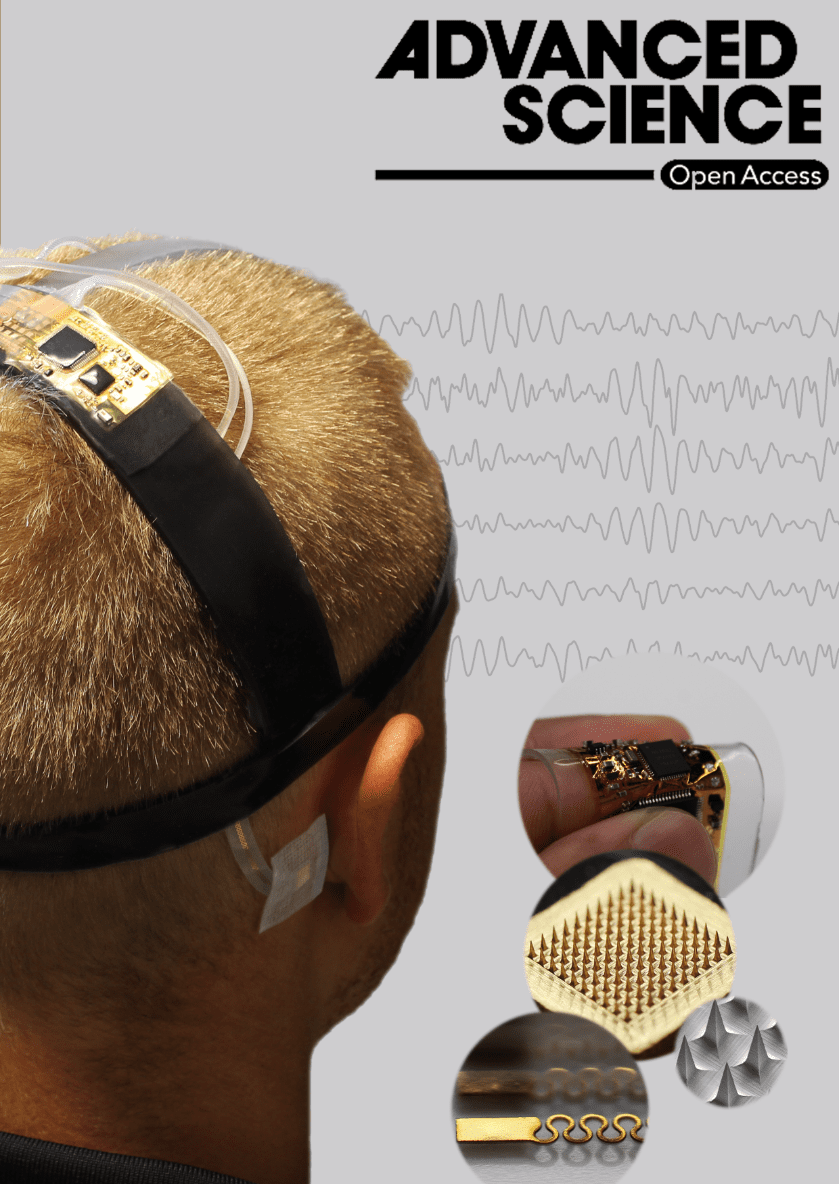

A new wearable brain-machine interface (BMI) system could improve the quality of life for people with motor dysfunction or paralysis, even those struggling with locked-in syndrome, a condition in which a person is fully conscious but unable to move or communicate.

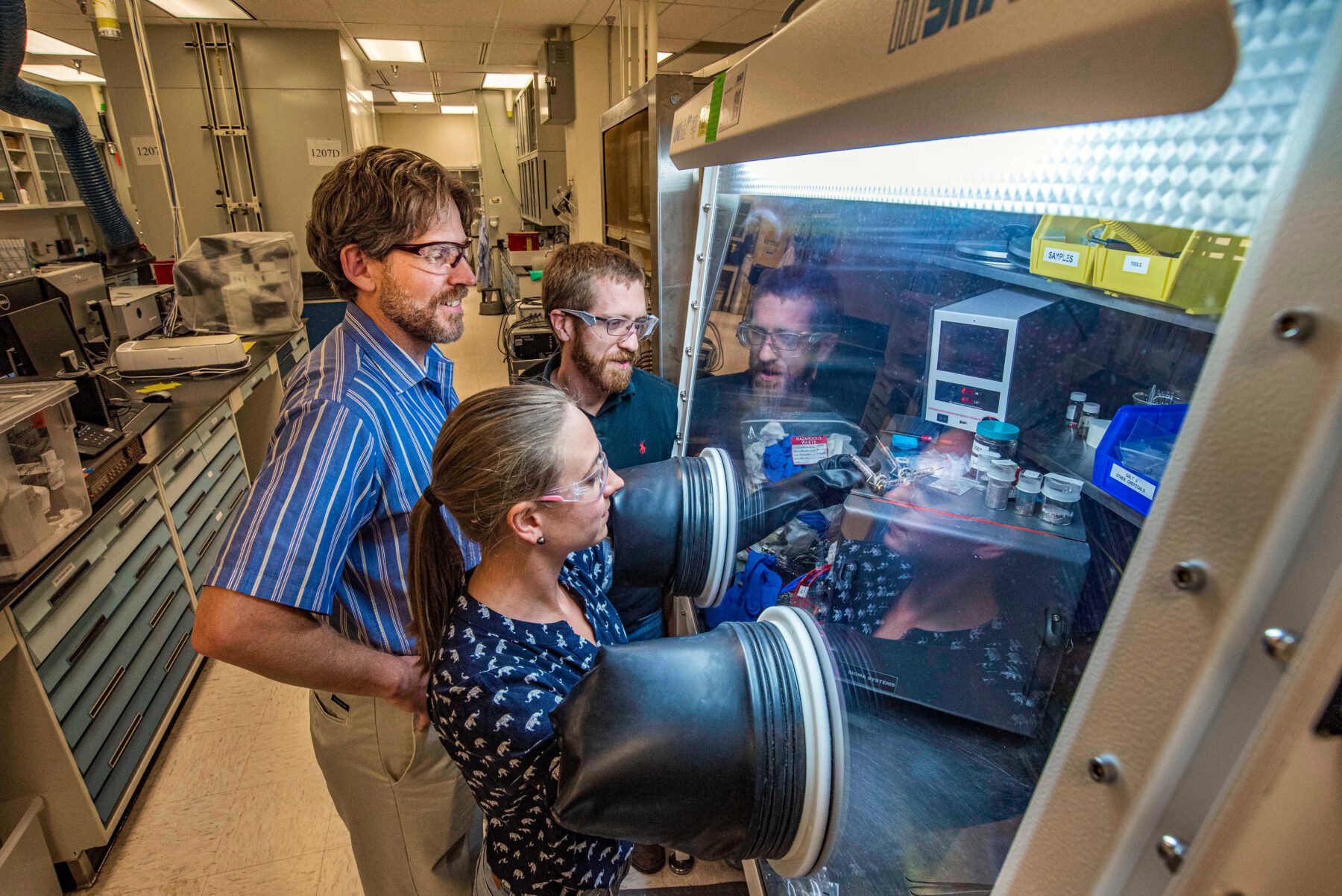

A multi-institutional, international team of researchers led by the lab of Woon-Hong Yeo at the Georgia Institute of Technology combined wireless soft scalp electronics and virtual reality in a BMI system that allows the user to imagine an action and wirelessly control a wheelchair or robotic arm.

The team, which included researchers from the University of Kent (United Kingdom) and Yonsei University (Republic of Korea), describes the new motor imagery-based BMI system this month in the journal Advanced Science.

“The major advantage of this system to the user, compared to what currently exists, is that it is soft and comfortable to wear, and doesn’t have any wires,” said Yeo, associate professor in the George W. Woodruff School of Mechanical Engineering.

BMI systems are a rehabilitation technology that analyzes a person’s brain signals and translates that neural activity into commands, turning intentions into actions. The most common non-invasive method for acquiring those signals is electroencephalography (EEG), which typically requires a cumbersome electrode skull cap and a tangled web of wires.

These devices generally rely heavily on gels and pastes to help maintain skin contact, require extensive set-up times, and are inconvenient and uncomfortable to use. They also often suffer from poor signal acquisition due to material degradation or motion artifacts, the ancillary “noise” that can be caused by something like teeth grinding or eye blinking. This noise shows up in the brain data and must be filtered out.
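As a rough illustration of what filtering out such noise can involve, one simple and widely used approach is to discard any short window of EEG samples (an "epoch") whose peak-to-peak amplitude is implausibly large, since blinks and jaw movements tend to produce much bigger deflections than ordinary brain activity. The sketch below is not the method used in the study; the function names, the 100-microvolt threshold, and the sample values are all assumptions chosen for demonstration.

```python
# Illustrative sketch only: not the method used in the study.
# Each epoch is a short window of EEG samples (in microvolts) from one channel.

def peak_to_peak(epoch):
    """Return the difference between the largest and smallest sample."""
    return max(epoch) - min(epoch)


def reject_artifacts(epochs, threshold_uv=100.0):
    """Keep only epochs whose peak-to-peak amplitude is within the threshold.

    threshold_uv is a hypothetical cutoff; a real system would tune it.
    """
    return [epoch for epoch in epochs if peak_to_peak(epoch) <= threshold_uv]


# Made-up sample values for demonstration.
epochs = [
    [4.0, -6.0, 9.0, -3.0],      # ordinary background activity
    [5.0, 180.0, -40.0, 2.0],    # large deflection, such as an eye blink
    [-8.0, 7.0, -2.0, 11.0],     # ordinary background activity
]
clean = reject_artifacts(epochs)
print(len(clean))  # number of epochs that survived rejection
```

Dropping contaminated windows is the bluntest option. Practical pipelines often combine it with frequency filtering or learned models, which keep more of the recording.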

The portable EEG system Yeo designed, integrating imperceptible microneedle electrodes with soft wireless circuits, offers improved signal acquisition. Accurately measuring those brain signals is critical to determining what actions a user wants to perform, so the team integrated a powerful machine learning algorithm and virtual reality component to address that challenge.

The new system was tested with four human subjects, but hasn’t been studied with disabled individuals yet.

“This is just a first demonstration, but we’re thrilled with what we have seen,” noted Yeo, Director of Georgia Tech’s Center for Human-Centric Interfaces and Engineering under the Institute for Electronics and Nanotechnology, and a member of the Petit Institute for Bioengineering and Bioscience.

New Paradigm

Yeo’s team originally introduced a soft, wearable EEG brain-machine interface in a 2019 study published in Nature Machine Intelligence. The lead author of that work, Musa Mahmood, was also the lead author of the team’s new research paper.

“This new brain-machine interface uses an entirely different paradigm, involving imagined motor actions, such as grasping with either hand, which frees the subject from having to look at too much stimuli,” said Mahmood, a Ph.D. student in Yeo’s lab.

In the 2021 study, users demonstrated accurate control of virtual reality exercises using their thoughts – their motor imagery. The visual cues enhance the process for both the user and the researchers gathering information.

“The virtual prompts have proven to be very helpful,” Yeo said. “They speed up and improve user engagement and accuracy. And we were able to record continuous, high-quality motor imagery activity.”

According to Mahmood, future work on the system will focus on optimizing electrode placement and more advanced integration of stimulus-based EEG, using what they’ve learned from the last two studies.

Original Article: Wearable Brain-Machine Interface Turns Intentions into Actions

