Image: Chelsea Turner

Autonomous control system “learns” to use simple maps and image data to navigate new, complex routes.

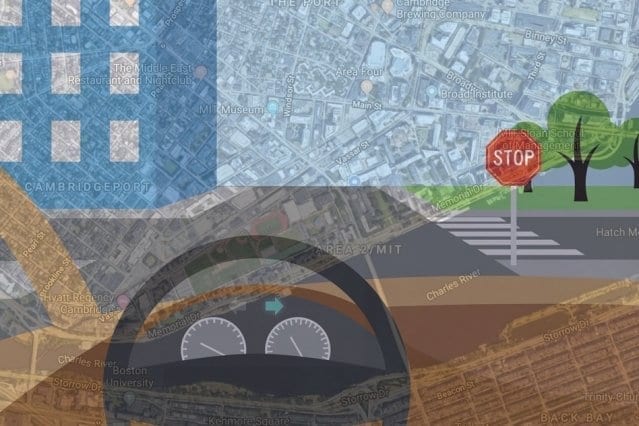

With aims of bringing more human-like reasoning to autonomous vehicles, MIT researchers have created a system that uses only simple maps and visual data to enable driverless cars to navigate routes in new, complex environments.

Human drivers are exceptionally good at navigating roads they haven’t driven on before, using observation and simple tools. We simply match what we see around us to what we see on our GPS devices to determine where we are and where we need to go. Driverless cars, however, struggle with this basic reasoning. In every new area, the cars must first map and analyze all the new roads, which is very time-consuming. The systems also rely on complex maps, usually generated by 3-D scans, which are computationally intensive to generate and process on the fly.

In a paper being presented at this week’s International Conference on Robotics and Automation, MIT researchers describe an autonomous control system that “learns” the steering patterns of human drivers as they navigate roads in a small area, using only data from video camera feeds and a simple GPS-like map. Then, the trained system can control a driverless car along a planned route in a brand-new area, by imitating the human driver.

Like human drivers, the system also detects mismatches between its map and the features of the road it observes. This helps it determine whether its position, sensors, or map are incorrect, so that it can correct the car’s course.

To train the system initially, a human operator drove an automated Toyota Prius, equipped with several cameras and a basic GPS navigation system, to collect data on local suburban streets with a variety of road structures and obstacles. When deployed autonomously, the system successfully navigated the car along a preplanned path in a different, forested area designated for autonomous vehicle tests.

“With our system, you don’t need to train on every road beforehand,” says first author Alexander Amini, an MIT graduate student. “You can download a new map for the car to navigate through roads it has never seen before.”

“Our objective is to achieve autonomous navigation that is robust for driving in new environments,” adds co-author Daniela Rus, director of the Computer Science and Artificial Intelligence Laboratory (CSAIL) and the Andrew and Erna Viterbi Professor of Electrical Engineering and Computer Science. “For example, if we train an autonomous vehicle to drive in an urban setting such as the streets of Cambridge, the system should also be able to drive smoothly in the woods, even if that is an environment it has never seen before.”

Joining Rus and Amini on the paper are Guy Rosman, a researcher at the Toyota Research Institute, and Sertac Karaman, an associate professor of aeronautics and astronautics at MIT.

Point-to-point navigation

Traditional navigation systems process sensor data through multiple modules customized for tasks such as localization, mapping, object detection, motion planning, and steering control. For years, Rus’s group has been developing “end-to-end” navigation systems, which take in sensory data and output steering commands directly, without any specialized modules.

Until now, however, these models were strictly designed to safely follow the road, without any real destination in mind. In the new paper, the researchers advanced their end-to-end system to drive from a starting point to a destination in a previously unseen environment. To do so, they trained the system to predict a full probability distribution over all possible steering commands at any given instant while driving.
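Why predict a whole distribution rather than a single steering angle? At an intersection, several very different commands can all be correct, and averaging them can produce an answer that is wrong. The sketch below illustrates this under stated assumptions: the steering command is discretized into a handful of hypothetical bins, and a softmax turns made-up network scores into probabilities. The article does not say how the researchers parameterize their distribution, so the bins, the softmax, and the scores are illustrative stand-ins only.

```python
import math

# Hypothetical discretization of the steering command (degrees, negative = left).
STEERING_BINS = [-30.0, -15.0, 0.0, 15.0, 30.0]


def softmax(logits):
    """Turn raw model scores into probabilities that sum to 1."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def steering_distribution(logits):
    """Pair each hypothetical steering bin with its predicted probability."""
    return dict(zip(STEERING_BINS, softmax(logits)))


# Made-up scores a network might emit at a T-shaped intersection:
# high for hard left and hard right, very low for straight ahead.
logits = [3.0, 0.5, -4.0, 0.5, 3.0]
dist = steering_distribution(logits)

# The expected (mean) steering angle collapses the two modes onto "straight",
# the one option the distribution itself says is almost never taken.
expected = sum(angle * p for angle, p in dist.items())

print("P(straight) =", round(dist[0.0], 4))
print("mean angle  =", round(expected, 6))
```

The design point is that a single regressed value behaves like the mean above, while keeping the full distribution preserves both plausible turns so a later step can pick one of them.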

The system uses a machine learning model called a convolutional neural network (CNN), commonly used for image recognition. During training, the system watches a human driver and learns how to steer. The CNN correlates steering wheel rotations with the road curvatures it observes through the cameras and an input map. Eventually, it learns the most likely steering command for various driving situations, such as straight roads, four-way or T-shaped intersections, forks, and rotaries.

“Initially, at a T-shaped intersection, there are many different directions the car could turn,” Rus says. “The model starts by thinking about all those directions, but as it sees more and more data about what people do, it will see that some people turn left and some turn right, but nobody goes straight. Straight ahead is ruled out as a possible direction, and the model learns that, at T-shaped intersections, it can only move left or right.”
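Rus’s description can be mimicked with a much simpler estimator than a CNN. In the sketch below, which is only an analogy and not the researchers’ training procedure, the estimate for a single T-shaped intersection starts uniform over left, straight, and right (a pseudo-count `alpha` given to every option), then shifts as demonstrations arrive. Because no demonstrated driver ever goes straight, the probability of “straight” shrinks toward zero as the data grows. The function name, labels, and `alpha` value are hypothetical.

```python
from collections import Counter

OPTIONS = ("left", "straight", "right")


def turn_distribution(observed_turns, alpha=1.0):
    """Additively smoothed estimate of P(turn) at a T-shaped intersection.

    alpha is a pseudo-count given to every option before any data is seen,
    so with no observations the estimate is uniform over all directions.
    Assumes every entry of observed_turns is one of OPTIONS.
    """
    counts = Counter(observed_turns)
    n = len(observed_turns)
    k = len(OPTIONS)
    return {o: (counts[o] + alpha) / (n + alpha * k) for o in OPTIONS}


# Before any data: every direction, including straight, is considered.
print(turn_distribution([]))

# Human demonstrations: some turn left, some turn right, nobody goes straight.
few = ["left", "right", "left"]
many = ["left", "right"] * 50
for demos in (few, many):
    print(len(demos), "demos -> P(straight) =", round(turn_distribution(demos)["straight"], 3))
```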

What does the map say?

In testing, the researchers gave the system a map with a randomly chosen route. While driving, the system extracts visual features from the camera feed, which enables it to predict road structures. For instance, it identifies a distant stop sign or line breaks on the side of the road as signs of an upcoming intersection. At each moment, it uses its predicted probability distribution over steering commands to choose the most likely command that follows its route.
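The last step, picking the most likely steering command that still follows the route, can be sketched as a filter followed by an argmax. This is a simplified, hypothetical post-processing step: in the researchers’ system the map is itself an input to the network, and the article does not describe the selection rule in this detail. The steering bins, the sign convention (negative = left), and the `min_prob` threshold are all assumptions made for illustration.

```python
def choose_steering(dist, route_direction, min_prob=0.05):
    """Pick the most probable steering bin that agrees with the route.

    dist: dict mapping a steering angle (degrees, negative = left) to its
        predicted probability (hypothetical output format).
    route_direction: "left", "right", or "straight", as read off the map.
    min_prob: hypothetical cut-off below which a bin is treated as implausible.
    Returns None when no plausible bin agrees with the route.
    """
    def agrees(angle):
        if route_direction == "left":
            return angle < 0
        if route_direction == "right":
            return angle > 0
        return angle == 0.0  # "straight" maps to the zero-angle bin

    candidates = {a: p for a, p in dist.items() if agrees(a) and p >= min_prob}
    if not candidates:
        return None
    return max(candidates, key=candidates.get)


# A made-up prediction at a T-shaped intersection.
dist = {-30.0: 0.45, -15.0: 0.04, 0.0: 0.01, 15.0: 0.05, 30.0: 0.45}
print(choose_steering(dist, "right"))
print(choose_steering(dist, "left"))
print(choose_steering(dist, "straight"))  # the route asks for something the model rules out
```

Returning `None` rather than forcing a choice leaves room for a separate rule that decides what to do when the route and the model disagree.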

Importantly, the researchers say, the system uses maps that are easy to store and process. Autonomous control systems typically use LIDAR scans to create massive, complex maps; storing just the city of San Francisco this way takes roughly 4,000 gigabytes (4 terabytes) of data. For every new destination, the car must create new maps, which requires a great deal of data processing. The maps used by the researchers’ system, however, capture the entire world in just 40 gigabytes of data.
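Setting the two storage figures side by side (using decimal units, 1 TB = 1,000 GB, as the article does) shows the scale of the gap: the LIDAR map of a single city is about a hundred times the size of the simple map covering the whole world. This compares storage size only, not the content or resolution of the two map types.

```latex
\[
4\ \text{TB} = 4{,}000\ \text{GB}, \qquad
\frac{4{,}000\ \text{GB}\ (\text{LIDAR map, San Francisco only})}
     {40\ \text{GB}\ (\text{simple map, entire world})} = 100
\]
```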

During autonomous driving, the system also continuously matches its visual data to the map data and notes any mismatches. Doing so helps the autonomous vehicle better determine where it is located on the road. It also keeps the car on the safest path when it is fed contradictory inputs: if, say, the car is cruising on a straight road with no turns and the GPS indicates that the car must turn right, the car will know to keep driving straight or to stop.
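The fallback behavior in the example above (keep driving straight or stop when the GPS asks for a turn that the road does not offer) can be written as a small decision rule. This is a hypothetical sketch, not the researchers’ implementation: it assumes some camera-based model reports which directions the road physically allows right now (`visible_options`), and it returns both an action and a flag recording that a mismatch was noted.

```python
def resolve_command(map_instruction, visible_options):
    """Decide what to do when the map and the camera may disagree.

    map_instruction: direction the route asks for ("left", "right", "straight").
    visible_options: set of directions the vision model believes the road
        allows at this moment (a hypothetical perception output).
    Returns (action, mismatch), where mismatch records that the map and the
    camera disagreed.
    """
    mismatch = map_instruction not in visible_options
    if not mismatch:
        return map_instruction, False  # map and vision agree: follow the route
    if "straight" in visible_options:
        return "straight", True        # conflicting input: keep following the road
    return "stop", True                # no option that vision supports matches the route


# The scenario from the text: a straight road with no turns, GPS says turn right.
print(resolve_command("right", {"straight"}))
# Map and camera agree at a T-shaped intersection.
print(resolve_command("right", {"left", "right"}))
# The map asks for a direction the road does not offer, and straight is not possible either.
print(resolve_command("straight", {"left", "right"}))
```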

“In the real world, sensors do fail,” Amini says. “We want to make sure that the system is robust to different failures of different sensors by building a system that can accept these noisy inputs and still navigate and localize itself correctly on the road.”
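One standard way to “accept noisy inputs and still localize” is a discrete Bayes (histogram) filter: start from a belief built around a noisy GPS fix, then reweight every candidate position by how well the map explains what the camera sees. The sketch below uses that textbook technique purely for illustration; the article does not say the researchers use it. The one-dimensional road map, the GPS noise level, and the detector’s hit and false-alarm rates are all made-up assumptions.

```python
import math

# Hypothetical 1-D map: positions along a road (metres) and whether the
# map shows an intersection at that position.
MAP = {0: False, 10: False, 20: True, 30: False, 40: False, 50: True, 60: False}


def gps_prior(gps_reading, sigma):
    """Gaussian-shaped belief over map positions around a noisy GPS fix."""
    weights = {x: math.exp(-0.5 * ((x - gps_reading) / sigma) ** 2) for x in MAP}
    total = sum(weights.values())
    return {x: w / total for x, w in weights.items()}


def update_with_camera(belief, sees_intersection, hit_rate=0.9, false_alarm=0.1):
    """Bayes update: reweight positions by how well the map explains the camera."""
    posterior = {}
    for x, p in belief.items():
        if MAP[x]:
            likelihood = hit_rate if sees_intersection else 1 - hit_rate
        else:
            likelihood = false_alarm if sees_intersection else 1 - false_alarm
        posterior[x] = p * likelihood
    total = sum(posterior.values())
    return {x: p / total for x, p in posterior.items()}


prior = gps_prior(gps_reading=42.0, sigma=15.0)       # noisy GPS alone favours 40 m
belief = update_with_camera(prior, sees_intersection=True)
best = max(belief, key=belief.get)                    # the camera pulls it to the mapped intersection

print("GPS-only guess:", max(prior, key=prior.get))
print("after camera:  ", best, round(belief[best], 2))
```

Neither input is trusted outright: a wrong GPS fix is corrected by visual evidence, and a single false detection only shifts the belief rather than overriding it.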

Learn more: Bringing human-like reasoning to driverless car navigation
