Computers operate self-driving cars, pick friends’ faces out of photos on Facebook, and are learning to take on jobs typically entrusted only to human experts.

Researchers from the University of Wisconsin–Madison and Oak Ridge National Laboratory have trained computers to quickly and consistently detect and analyze microscopic radiation damage to materials under consideration for nuclear reactors. And the computers bested humans in this arduous task.

“Machine learning has great potential to transform the current, human-involved approach of image analysis in microscopy,” says Wei Li, who earned his master’s degree in materials science and engineering this year from UW–Madison.

Many problems in materials science are image-based, yet few researchers have expertise in machine vision — making image recognition and analysis a major research bottleneck. As a student, Li realized that he could leverage training in the latest computational techniques to help bridge the gap between artificial intelligence and materials science research.

Li, with Oak Ridge staff scientist Kevin Field and UW–Madison materials science and engineering professor Dane Morgan, used machine learning to make artificial intelligence better than experienced humans at analyzing damage to potential nuclear reactor materials. The collaborators described their approach in a paper published July 18 in the journal npj Computational Materials.

Machine learning uses statistical methods to let computers improve their performance on a task by learning from data, rather than by following rules a human has spelled out explicitly. Essentially, machine learning teaches computers to teach themselves.

“In the future, I believe images from many instruments will pass through a machine learning algorithm for initial analysis before being considered by humans,” says Morgan, who was Li’s graduate school advisor.

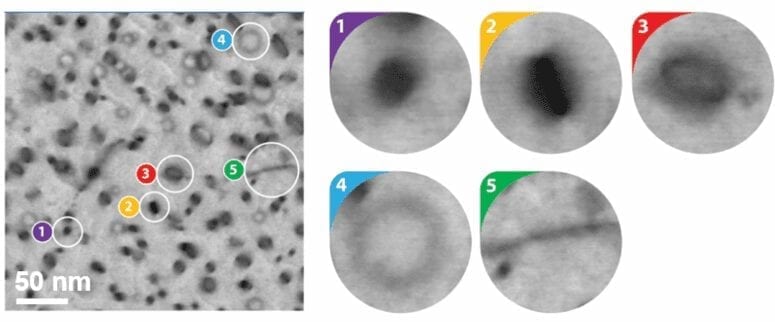

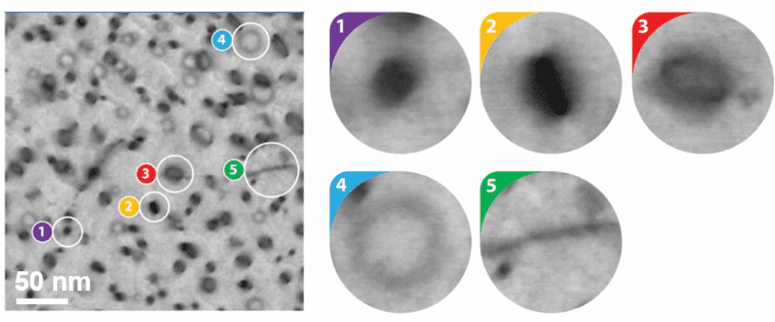

The researchers targeted machine learning as a means to rapidly sift through electron microscopy images of materials that had been exposed to radiation, and identify a specific type of damage — a challenging task because the photographs can resemble a cratered lunar surface or a splatter-painted canvas.

That job is critical to developing safe nuclear materials, and automating it could make a time-consuming process much more efficient and effective.

“Human detection and identification is error-prone, inconsistent and inefficient. Perhaps most importantly, it’s not scalable,” says Morgan. “Newer imaging technologies are outstripping human capabilities to analyze the data we can produce.”

Previously, image-processing algorithms depended on human programmers to provide explicit descriptions of an object’s identifying features. Teaching a computer to recognize something simple like a stop sign might involve lines of code describing a red octagonal object.
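To make the contrast concrete, here is a minimal Python sketch of the hand-written style of rule the paragraph describes. It assumes the hard part (finding a shape in the image and measuring its average color and corner count) has already been done. The `Shape` class, its fields and the color thresholds are hypothetical choices for illustration, not code from the study.

```python
from dataclasses import dataclass


@dataclass
class Shape:
    # Hypothetical pre-extracted features; a real system would first have to
    # segment the image and estimate these values from raw pixels.
    mean_rgb: tuple[int, int, int]
    corner_count: int


def looks_like_stop_sign(shape: Shape) -> bool:
    """Hand-written rule: the shape is mostly red and has eight corners."""
    r, g, b = shape.mean_rgb
    # Thresholds picked by a human programmer, not learned from data.
    is_red = r > 150 and g < 100 and b < 100
    is_octagon = shape.corner_count == 8
    return is_red and is_octagon


print(looks_like_stop_sign(Shape((200, 30, 40), 8)))  # red octagon
print(looks_like_stop_sign(Shape((200, 30, 40), 4)))  # red square
```

Every threshold here had to be chosen by a person, which is exactly what becomes impractical for fuzzier categories such as "cat" or a particular kind of radiation defect.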

More complex, however, is articulating all of the visual cues that signal something is, for example, a cat. Fuzzy ears? Sharp teeth? Whiskers? A variety of critters have those same characteristics.

Machine learning now takes a completely different approach.

“It’s a real change of thinking. You don’t make rules. You let the computer figure out what the rules should be,” says Morgan.

Today’s machine learning approaches to image analysis often use programs called neural networks that seem to mimic the remarkable layered pattern-recognition powers of the human brain. To teach a neural network to recognize a cat, scientists simply “train” the program by providing a collection of accurately labeled pictures of various cat breeds. The neural network takes over from there, building and refining its own set of guidelines for the most important features.
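A heavily simplified Python sketch of learning from labeled examples follows. It uses a single-layer perceptron, which is far simpler than the layered neural networks described here, and two hypothetical numeric features per example. The point is only that the weights, which play the role of the "rules," are adjusted from labeled data rather than written by a programmer.

```python
def train_perceptron(examples, epochs=20, lr=0.1):
    """Learn weights from (features, label) pairs, where label is 1 or 0."""
    n_features = len(examples[0][0])
    weights = [0.0] * n_features
    bias = 0.0
    for _ in range(epochs):
        for features, label in examples:
            activation = sum(w * x for w, x in zip(weights, features)) + bias
            prediction = 1 if activation > 0 else 0
            error = label - prediction
            # Weights change only when the current prediction is wrong.
            weights = [w + lr * error * x for w, x in zip(weights, features)]
            bias += lr * error
    return weights, bias


def predict(weights, bias, features):
    """Apply the learned weights to a new, unlabeled example."""
    return 1 if sum(w * x for w, x in zip(weights, features)) + bias > 0 else 0


# Toy labeled data with two hypothetical feature scores per example.
labeled = [([0.9, 0.8], 1), ([0.8, 0.9], 1), ([0.2, 0.1], 0), ([0.1, 0.3], 0)]
weights, bias = train_perceptron(labeled)
print(predict(weights, bias, [0.85, 0.7]), predict(weights, bias, [0.15, 0.2]))
```

Nobody tells the program which feature matters or by how much; those numbers emerge from the labeled examples, which is the "change of thinking" Morgan describes.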

Similarly, Morgan and colleagues taught a neural network to recognize a very specific type of radiation damage, called dislocation loops, which are some of the most common, yet challenging, defects to identify and quantify even for a human with decades of experience.

After training with 270 images, the neural network, combined with another machine learning algorithm called a cascade object detector, correctly identified and classified roughly 86 percent of the dislocation loops in a set of test pictures. For comparison, human experts found 80 percent of the defects.
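The article does not say exactly how a loop counted as "identified." One common convention in object detection, assumed here purely for illustration, is to match each expert-labeled bounding box to at most one detected box and count it as found when their intersection-over-union (IoU) reaches 0.5. The function names, the greedy matching and the 0.5 threshold below are hypothetical and are not taken from the paper.

```python
Box = tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max)


def iou(a: Box, b: Box) -> float:
    """Intersection-over-union of two axis-aligned boxes."""
    ix = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    iy = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = ix * iy
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def detection_rate(ground_truth, detections, threshold=0.5):
    """Fraction of labeled loops greedily matched one-to-one by a detection."""
    unused = list(detections)
    found = 0
    for gt in ground_truth:
        best = max(unused, key=lambda d: iou(gt, d), default=None)
        if best is not None and iou(gt, best) >= threshold:
            found += 1
            unused.remove(best)  # each detection may match only one loop
    return found / len(ground_truth) if ground_truth else 0.0


labels = [(0, 0, 10, 10), (20, 20, 30, 30), (40, 40, 50, 50),
          (60, 60, 70, 70), (80, 80, 90, 90)]
found_boxes = [(1, 1, 11, 11), (20, 20, 30, 30), (41, 40, 51, 50),
               (60, 61, 70, 71), (200, 200, 210, 210)]
print(detection_rate(labels, found_boxes))
```

Here four of the five labeled loops overlap a detection closely enough, so the rate is 0.8. Greedy matching is a simplification; some evaluations use an optimal assignment instead.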

“When we got the final result, everyone was surprised,” says Field, “not only by the accuracy of the approach, but the speed. We can now detect these loops like humans while doing it in a fraction of the time on a standard home computer.”

After he graduated, Li took a job with Google, but the research is ongoing. Morgan and Field are working to expand their training data set and teach a new neural network to recognize different kinds of radiation defects. Eventually, they envision creating a massive cloud-based resource for materials scientists around the world to upload images for near-instantaneous analysis.

“This is just the beginning,” says Morgan. “Machine learning tools will help create a cyber infrastructure that scientists can utilize in ways we are just beginning to understand.”

Learn more: Eagle-eyed machine learning algorithm outdoes human experts