Researchers have created a control system that makes robots more intelligent.

Using arm sensors that can “read” a person’s muscle movements, Georgia Institute of Technology researchers have created a control system that makes robots more intelligent. The sensors send information to the robot, allowing it to anticipate a human’s movements and correct its own. The system is intended to save time and improve safety and efficiency in manufacturing plants.

It’s not uncommon to see large, fast-moving robots on manufacturing floors. Humans seldom work next to them for safety reasons. Some jobs, however, require people and robots to work together. For example, a person hanging a car door on a hinge uses a lever to guide a robot carrying the door. The power-assisting device sounds practical but isn’t easy to use.

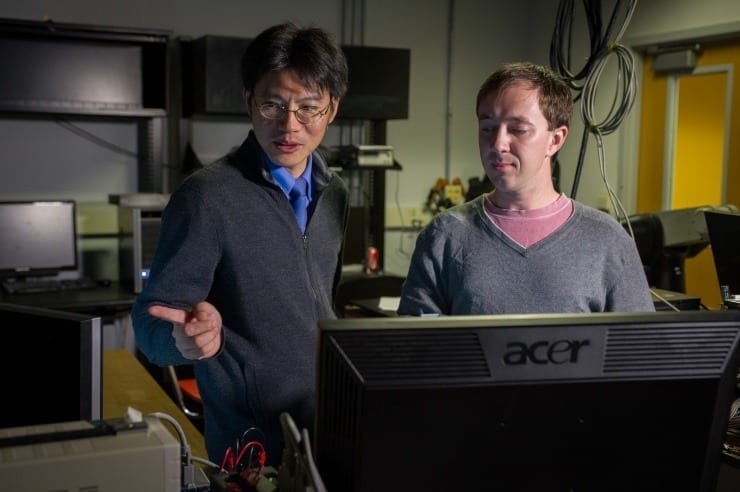

“It turns into a constant tug of war between the person and the robot,” explains Billy Gallagher, a recent Georgia Tech Ph.D. graduate in robotics who led the project. “Both react to each other’s forces when working together. The problem is that a person’s muscle stiffness is never constant, and a robot doesn’t always know how to correctly react.”

For example, as human operators shift the lever forward or backward, the robot recognizes the command and moves appropriately. But when they want to stop the movement and hold the lever in place, people tend to stiffen and contract muscles on both sides of their arms. This creates a high level of co-contraction.

“The robot becomes confused. It doesn’t know whether the force is purely another command that should be amplified or ‘bounced’ force due to muscle co-contraction,” said Jun Ueda, Gallagher’s advisor and a professor in the Woodruff School of Mechanical Engineering. “The robot reacts regardless.”

The robot responds to that bounced force, creating vibration. The human operators also react, creating more force by stiffening their arms. Each reaction feeds the other, and the vibrations grow worse.

“You don’t want instability when a robot is carrying a heavy door,” said Ueda.

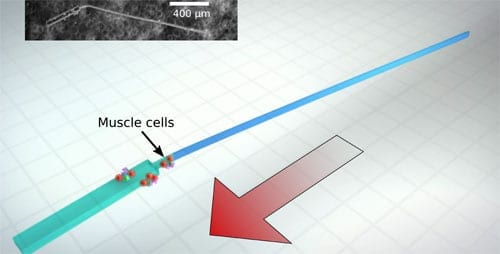

The Georgia Tech system eliminates the vibrations by using sensors worn on the operator’s forearm. The devices send muscle-activity readings to a computer, which provides the robot with the operator’s level of muscle contraction. The system judges the operator’s physical status and intelligently adjusts how it should interact with the human. The result is a robot that moves easily and safely.

“Instead of having the robot react to a human, we give it more information,” said Gallagher. “Modeling the operator in this way allows the robot to actively adjust to changes in the way the operator moves.”
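The idea can be illustrated with a minimal sketch. It is not the Georgia Tech controller, whose details the article does not give. It assumes two muscle-activity readings normalized to the range 0 to 1, one for each side of the forearm, and a power-assist gain that drops as co-contraction rises. Every function name, parameter and number here is hypothetical.

```python
def clamp01(x: float) -> float:
    """Limit a value to the range [0, 1]."""
    return min(max(x, 0.0), 1.0)


def cocontraction_level(flexor: float, extensor: float) -> float:
    """Estimate co-contraction as the activation shared by both muscle groups.

    Inputs are assumed to be normalized muscle-activity readings in [0, 1].
    Taking the minimum is one simple convention, chosen here for illustration.
    """
    return min(clamp01(flexor), clamp01(extensor))


def assist_gain(cocontraction: float,
                max_gain: float = 5.0,
                min_gain: float = 1.0) -> float:
    """Lower the force amplification linearly as co-contraction rises."""
    c = clamp01(cocontraction)
    return max_gain - (max_gain - min_gain) * c


def robot_force(lever_force: float, flexor: float, extensor: float) -> float:
    """Amplify the operator's lever force, less so when the arm is stiff."""
    gain = assist_gain(cocontraction_level(flexor, extensor))
    return gain * lever_force


if __name__ == "__main__":
    # Operator pushing the lever: one muscle group active, the other relaxed.
    print(robot_force(10.0, flexor=0.8, extensor=0.1))
    # Operator holding the lever still: both muscle groups stiffened.
    print(robot_force(10.0, flexor=0.9, extensor=0.9))
```

In this sketch, the same force on the lever is amplified strongly when only one muscle group is active and only weakly when both are tensed, so a stiffened arm no longer reads as a fresh command.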

Ueda will continue to improve the system using a $1.2 million National Robotics Initiative grant, supported by the National Science Foundation (grant 1317718), to better understand the mechanisms of neuromotor adaptation in human-robot physical interaction. The research is intended to benefit communities interested in the adaptive shared control approach for advanced manufacturing and process design, including the automobile, aerospace and military sectors.

“Future robots must be able to understand people better,” Ueda said. “By making robots smarter, we can make them safer and more efficient.”
