Study of Deep Neural Networks Suggests Knowledge Comes Via Sensory Experience

As artificial intelligence becomes more sophisticated, much public attention has focused on how successfully these technologies can compete against humans at chess and other strategy games. A philosopher from the University of Houston has taken a different approach, deconstructing the complex neural networks used in machine learning to shed light on how humans acquire abstract knowledge.

“As we rely more and more on these systems, it is important to know how they work and why,” said Cameron Buckner, assistant professor of philosophy and author of a paper exploring the topic published in the journal Synthese. Better understanding how the systems work, in turn, led him to insights into the nature of human learning.

Philosophers have debated the origins of human knowledge since the days of Plato – is it innate, based on logic, or does knowledge come from sensory experience in the world?

Deep Convolutional Neural Networks, or DCNNs, suggest human knowledge stems from experience, a school of thought known as empiricism, Buckner concluded. These neural networks – multi-layered artificial neural networks, with nodes replicating how neurons process and pass along information in the brain – demonstrate how abstract knowledge is acquired, he said, making the networks a useful tool for fields including neuroscience and psychology.

In the paper, Buckner notes that the success of these networks at complex tasks involving perception and discrimination has at times outpaced the ability of scientists to understand how they work.

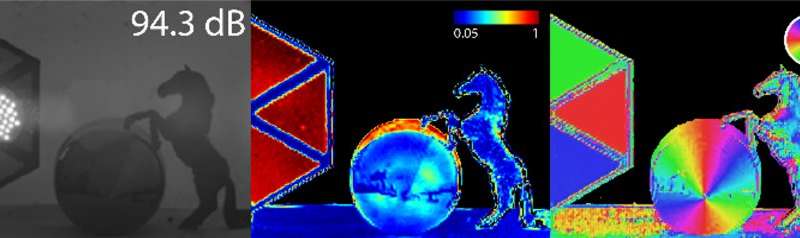

While some scientists who build neural network systems have referenced the thinking of British philosopher John Locke and other influential theorists, their focus has been on results rather than understanding how the networks intersect with traditional philosophical accounts of human cognition. Buckner set out to fill that void, considering the use of AI for abstract reasoning, ranging from strategy games to visual recognition of chairs, artwork and animals, tasks that are surprisingly complex considering the many potential variations in vantage point, color, style and other detail.

“Computer vision and machine learning researchers have recently noted that triangle, chair, cat, and other everyday categories are so difficult to recognize because they can be encountered in a variety of different poses or orientations that are not mutually similar in terms of their low-level perceptual properties,” Buckner wrote. “… a chair seen from the front does not look much like the same chair seen from behind or above; we must somehow unify all these diverse perspectives to build a reliable chair-detector.”

To overcome these challenges, the systems have to control for so-called nuisance variation, the range of differences that commonly affect a system’s ability to identify objects and sounds or to perform other recognition tasks – size and position, for example, or pitch and tone. The ability to account for and digest that diversity of possibilities is a hallmark of abstract reasoning.
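For readers who want a concrete picture of controlling for one nuisance variable, position, the toy sketch below may help. It is an illustration under stated assumptions, not the architecture analyzed in Buckner's paper: the 2x2 filter, the diagonal pattern and all function names are hypothetical, and a real DCNN learns its filters from data and stacks many such layers. The sketch slides one filter across an image and keeps only the strongest response, so the same pattern produces the same score wherever it appears.

```python
# Toy illustration only: one hand-written filter followed by global max
# pooling. All names and values here are hypothetical, chosen for clarity.

def cross_correlate(image, kernel):
    """Slide the kernel over every valid position and return the response map."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[r + i][c + j] * kernel[i][j]
                for i in range(kh) for j in range(kw))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]

def global_max_pool(feature_map):
    """Keep only the strongest response, discarding where it occurred."""
    return max(max(row) for row in feature_map)

def place_pattern(size, pattern, top, left):
    """Return a size x size image of zeros with the pattern pasted at (top, left)."""
    image = [[0] * size for _ in range(size)]
    for i, row in enumerate(pattern):
        for j, value in enumerate(row):
            image[top + i][left + j] = value
    return image

PATTERN = [[1, 0], [0, 1]]    # a small diagonal "feature"
KERNEL = [[1, -1], [-1, 1]]   # a filter that responds most strongly to that diagonal

# The same feature at two different positions: the raw pixels differ...
image_a = place_pattern(6, PATTERN, top=0, left=0)
image_b = place_pattern(6, PATTERN, top=3, left=2)

# ...but the pooled filter response is identical.
score_a = global_max_pool(cross_correlate(image_a, KERNEL))
score_b = global_max_pool(cross_correlate(image_b, KERNEL))
print(image_a == image_b, score_a, score_b)
```

The two images differ pixel by pixel, yet the pooled score is the same for both, which is the sense in which position has been factored out. Handling size, pose or lighting takes more than a single filter and a single pooling step.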

The DCNNs have also answered another lingering question about abstract reasoning, Buckner said. Empiricists from Aristotle to Locke have appealed to a faculty of abstraction to complete their explanations of how the mind works, but until now, there hasn’t been a good explanation for how that works. “For the first time, DCNNs help us to understand how this faculty actually works,” Buckner said.

He began his academic career in computer science, studying logic-based approaches to artificial intelligence. The stark differences between early AI and the ways in which animals and humans actually solve problems prompted his shift to philosophy.

Less than a decade ago, he said, scientists believed advances in machine learning would stop short of the ability to produce abstract knowledge. Now that machines are beating humans at strategic games, driverless cars are being tested around the world and facial recognition systems are deployed everywhere from cell phones to airports, finding answers has become more urgent.

“These systems succeed where others failed,” he said, “because they can acquire the kind of subtle, abstract, intuitive knowledge of the world that comes automatically to humans but has until now proven impossible to program into computers.”

Learn more: Artificial Intelligence Helps Reveal How People Process Abstract Thought
