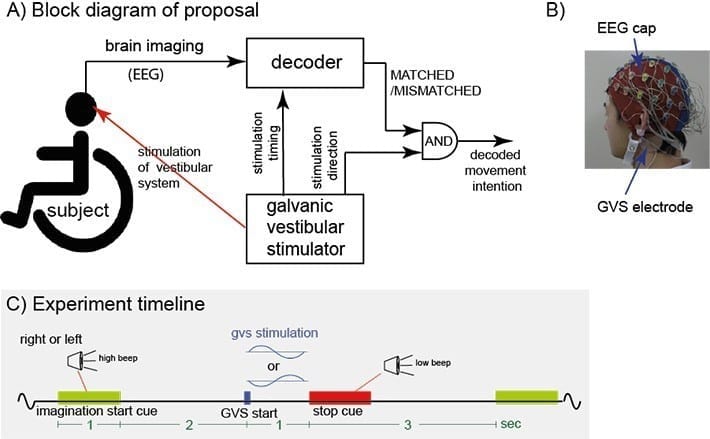

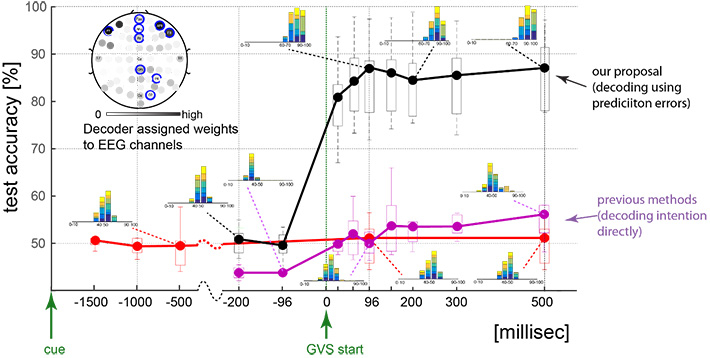

Figure 1.

Experiment to test the new technique: A) The researchers propose to use a sensory stimulator in parallel with EEG, and to decode whether or not the stimulation matches the sensory feedback corresponding to the user’s motor intention. The presented experiment simulated a wheelchair turning scenario and used a galvanic vestibular stimulator (GVS). B) The subjects were fitted with GVS electrodes during the EEG recording. The subliminal GVS stimulations induced a sensation of turning either right or left. C) Experiment timeline: In each trial, the subjects were instructed, using stereo speakers and a ‘high’ frequency beep, to imagine turning either left or right while sitting in a wheelchair. A subliminal GVS stimulation was applied 2 seconds after the end of each cue, randomly corresponding to a turn either right or left. This was followed by a rest period of 3 seconds cued by a ‘low’ frequency beep (stop cue).

A collaborative study by researchers at Tokyo Institute of Technology has developed a new technique to decode human motor intention from electroencephalography (EEG).

This technique is motivated by the well-documented ability of the brain to predict the sensory outcomes of self-generated and imagined actions using so-called forward models. For the first time, the method achieved nearly 90% single-trial decoding accuracy across the tested subjects, within 96 ms of the stimulation, with zero user training and no additional cognitive load on the users.

The ultimate dream of brain computer interface (BCI) research is to develop an efficient connection between machines and the human brain, such that the machines may be used at will. One example is enabling an amputee to use a robot arm attached to him just by thinking of it, as if it were his own arm. A big challenge for such a task is deciphering a human user’s movement intention from his brain activity while minimizing the user’s effort. While a plethora of methods have been suggested for this in the last two decades (1-2), they all require considerable effort on the part of the human user: they either require extensive user training, work well with only a subset of users, or need a conspicuous stimulus that induces additional attentional and cognitive loads on the users. In this study, researchers from Tokyo Institute of Technology (Tokyo Tech), Le Centre national de la recherche scientifique (CNRS-France), AIST and Osaka University propose a new movement intention decoding philosophy and technique that overcomes all these issues while providing equal or much better decoding performance.

The fundamental difference between the previous methods and what the researchers propose is in what is decoded. All the previous methods decode what movement a user intends or imagines, either directly (as in the so-called active BCI systems) or indirectly, by decoding what he is attending to (as in the reactive BCI systems). Here the researchers propose to use a subliminal sensory stimulator together with EEG and to decode not what movement a user intends or imagines, but whether or not the movement he intends matches the sensory feedback sent to him by the stimulator. Their proposal is motivated by the multitude of studies on so-called forward models in the brain: the neural circuitry implicated in predicting the sensory outcomes of self-generated movements (3). The sensory prediction errors, between the forward model predictions and the actual sensory signals, are known to be fundamental for our sensory-motor abilities: for haptic perception (4), motor control (5), motor learning (6), and even inter-personal interactions (7-8) and the cognition of self (9). The researchers therefore hypothesized that the prediction errors have a large signature in EEG, and that perturbing the prediction errors (using an external sensory stimulator) is a promising way to decode movement intentions.

This proposal was tested in a binary simulated wheelchair task, in which users thought of turning their wheelchair either left or right. The researchers stimulated the user’s vestibular system (as this is the dominant sensory feedback during turning) towards either the left or the right, subliminally, using a galvanic vestibular stimulator. They then decoded the presence of prediction errors (i.e., whether or not the stimulation direction matches the direction the user imagines) and consequently, as the direction of stimulation is known, the direction the user imagines. This procedure provided excellent single-trial decoding accuracy (87.2% median) in all tested subjects, within 96 ms of stimulation. These results were obtained with zero user training and with no additional cognitive load on the users, as the stimulation was subliminal.
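The inference step described above (from a match/mismatch decision plus the known stimulation direction to the imagined direction) can be illustrated with a minimal Python sketch. It is not the researchers’ implementation: the function names and the trial tuple layout are hypothetical, and the EEG classifier that would produce the `mismatch_detected` flag is assumed to exist and is not shown.

```python
from typing import Iterable, Literal, Tuple

Direction = Literal["left", "right"]


def opposite(direction: Direction) -> Direction:
    """Return the other turning direction in the binary task."""
    return "right" if direction == "left" else "left"


def infer_intended_direction(stim_direction: Direction,
                             mismatch_detected: bool) -> Direction:
    """Infer the imagined direction from the known stimulation direction.

    mismatch_detected is assumed to come from an EEG decoder (not shown)
    that flags a prediction error between the imagined turn and the
    stimulated turn.
    """
    # No prediction error: the user imagined the stimulated direction.
    # Prediction error: in a binary task, it must be the other direction.
    return opposite(stim_direction) if mismatch_detected else stim_direction


def single_trial_accuracy(
    trials: Iterable[Tuple[Direction, bool, Direction]]
) -> float:
    """Fraction of trials where the inferred direction equals the cue.

    Each trial is (stim_direction, mismatch_detected, cued_direction);
    this layout is an assumption made for illustration only.
    """
    trials = list(trials)
    correct = sum(
        infer_intended_direction(stim, mismatch) == cue
        for stim, mismatch, cue in trials
    )
    return correct / len(trials)
```

Because the task is binary and the stimulation direction is always known, the decoder only has to answer a yes/no question about the prediction error; the imagined direction then follows directly.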

Figure 2.

Decoding performance summary: The across-subject median decoding performance when decoding the direction in which a subject wants to turn (i.e., the cue direction), as has been tried in previous methods, is shown in red and pink, while decoding using the newly proposed method is shown in black. The data at each time point represent the decoding performance using data from the time period between a reference point (‘cue’ for red data, and ‘GVS start’ for pink and black data) and that time point. Box plot boundaries represent the 25th and 75th percentiles, while the whiskers represent the data range across subjects. The inset histograms show the subject ensemble decoding performance in the 140 (20 × 7 subjects) test trials, with each subject’s data shown in a different color.

This proposal promises to radically change how movement intention is decoded, for several reasons. Primarily, the method promises better decoding accuracies with no user training and without inducing additional cognitive loads on the users. Furthermore, the fact that the decoding can be done within 100 ms of the stimulation highlights its suitability for real-time decoding. Finally, this method is distinct from other methods that use ERP, ERD and ERN, which suggests that it can be used in parallel with current methods to improve their accuracy.
