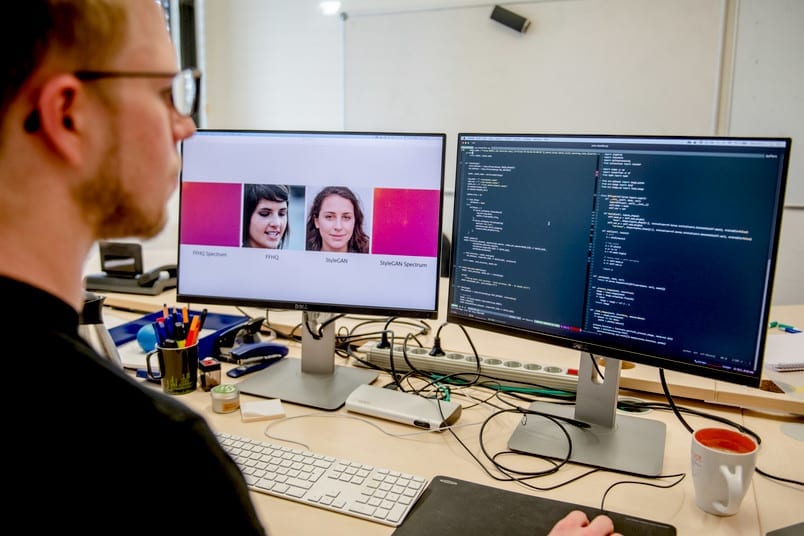

Frequency analysis reveals typical artefacts in computer-generated images. Image: RUB, Marquard

They look deceptively real, but they are made by computers: so-called deep-fake images are generated by machine learning algorithms, and humans can hardly distinguish them from real photos. Researchers at the Horst Görtz Institute for IT Security at Ruhr-Universität Bochum and the Cluster of Excellence “Cyber Security in the Age of Large-Scale Adversaries” (CASA) have developed a new method for efficiently identifying deep-fake images. To this end, they analyse the images in the frequency domain, an established signal processing technique.

The team presented their work on 15 July 2020 at the International Conference on Machine Learning (ICML), one of the leading conferences in the field of machine learning. The researchers have also made their code freely available online so that other groups can reproduce their results.

Interaction of two algorithms results in new images

Deep-fake images – a portmanteau word from “deep learning” for machine learning and “fake” – are generated with the help of computer models, so-called Generative Adversarial Networks, GANs for short. Two algorithms work together in these networks: the first algorithm creates random images based on certain input data. The second algorithm needs to decide whether the image is a fake or not. If the image is found to be a fake, the second algorithm gives the first algorithm the command to revise the image – until it no longer recognises it as a fake.
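The interplay of the two algorithms can be illustrated with a deliberately tiny sketch. It is not the researchers' code and does not produce images: the "real data" are single numbers drawn around 4.0, the generator is a linear function, and the discriminator is a logistic classifier. All names and values (`train_toy_gan`, the learning rate, the target distribution) are assumptions chosen for illustration. In practice the "command to revise" is a gradient signal passed from the discriminator to the generator, which is what the second update step below computes. Training of this kind tends to oscillate, so the sketch makes no claim about convergence.

```python
import numpy as np

def sigmoid(t):
    return 1.0 / (1.0 + np.exp(-t))

def train_toy_gan(steps=2000, batch=64, lr=0.05, seed=0):
    """Alternate discriminator and generator updates on 1-D toy data."""
    rng = np.random.default_rng(seed)
    a, b = 1.0, 0.0   # generator:      G(z) = a * z + b
    w, c = 0.1, 0.0   # discriminator:  D(x) = sigmoid(w * x + c)
    for _ in range(steps):
        real = rng.normal(4.0, 1.0, batch)   # stand-in for real photos
        z = rng.normal(0.0, 1.0, batch)      # random input to the generator
        fake = a * z + b

        # Discriminator step: minimise -log D(real) - log(1 - D(fake)).
        d_real = sigmoid(w * real + c)
        d_fake = sigmoid(w * fake + c)
        grad_w = np.mean(-(1.0 - d_real) * real + d_fake * fake)
        grad_c = np.mean(-(1.0 - d_real) + d_fake)
        w -= lr * grad_w
        c -= lr * grad_c

        # Generator step: minimise -log D(fake), i.e. try to fool D.
        d_fake = sigmoid(w * fake + c)
        grad_a = np.mean(-(1.0 - d_fake) * w * z)
        grad_b = np.mean(-(1.0 - d_fake) * w)
        a -= lr * grad_a
        b -= lr * grad_b
    return a, b, w, c

a, b, w, c = train_toy_gan()
print(f"generator offset b = {b:.2f} (real data are centred on 4.0)")
```

The generator never sees the real data directly; it only receives feedback through the discriminator's parameters, which mirrors the division of labour described above.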

In recent years, this technique has helped make deep-fake images more and more authentic. On the website www.whichfaceisreal.com, users can check if they’re able to distinguish fakes from original photos. “In the era of fake news, it can be a problem if users don’t have the ability to distinguish computer-generated images from originals,” says Professor Thorsten Holz from the Chair for Systems Security.

For their analysis, the Bochum-based researchers used the data sets that also form the basis of the above-mentioned page “Which face is real”. In this interdisciplinary project, Joel Frank, Thorsten Eisenhofer and Professor Thorsten Holz from the Chair for Systems Security cooperated with Professor Asja Fischer from the Chair of Machine Learning as well as Lea Schönherr and Professor Dorothea Kolossa from the Chair of Digital Signal Processing.

Frequency analysis reveals typical artefacts

To date, deep-fake images have been analysed using complex statistical methods. The Bochum group chose a different approach by converting the images into the frequency domain using the discrete cosine transform. The generated image is thus expressed as the sum of many different cosine functions. Natural images consist mainly of low-frequency functions.
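For readers who want the formula: the article does not say which variant of the transform was used, so the following assumes the widely used type-II discrete cosine transform with orthonormal scaling. The symbols $I$ (image of size $N_1 \times N_2$), $D$ (coefficients) and $w_N$ (scaling factor) are notation introduced here.

$$
D(k_x, k_y) = w_{N_1}(k_x)\, w_{N_2}(k_y) \sum_{x=0}^{N_1-1} \sum_{y=0}^{N_2-1} I(x, y)\, \cos\!\left[\frac{\pi}{N_1}\left(x + \tfrac{1}{2}\right) k_x\right] \cos\!\left[\frac{\pi}{N_2}\left(y + \tfrac{1}{2}\right) k_y\right],
\qquad
w_N(k) = \begin{cases} \sqrt{1/N}, & k = 0 \\ \sqrt{2/N}, & k > 0 \end{cases}
$$

Under this scaling the image is recovered as a weighted sum of cosine functions:

$$
I(x, y) = \sum_{k_x=0}^{N_1-1} \sum_{k_y=0}^{N_2-1} w_{N_1}(k_x)\, w_{N_2}(k_y)\, D(k_x, k_y)\, \cos\!\left[\frac{\pi}{N_1}\left(x + \tfrac{1}{2}\right) k_x\right] \cos\!\left[\frac{\pi}{N_2}\left(y + \tfrac{1}{2}\right) k_y\right]
$$

Small indices $k_x, k_y$ correspond to slowly varying cosines (low frequencies), large indices to rapidly varying ones (high frequencies).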

The analysis has shown that images generated by GANs exhibit artefacts in the high-frequency range. For example, a typical grid structure emerges in the frequency representation of fake images. “Our experiments showed that these artefacts do not only occur in GAN-generated images. They are a structural problem of all deep learning algorithms,” explains Joel Frank from the Chair for Systems Security. “We assume that the artefacts described in our study will always tell us whether the image is a deep-fake image created by machine learning,” adds Frank. “Frequency analysis is therefore an effective way to automatically recognise computer-generated images.”
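The idea of looking for high-frequency artefacts can be sketched in a few lines of NumPy. This is an illustration under stated assumptions, not the detector from the study: the "natural" image is a synthetic smooth gradient, the artefact is a faint pixel-level checkerboard added by hand as a stand-in, and `high_freq_fraction` with its `cutoff` of 16 is a hypothetical score invented for this example. The transform is assumed to be the orthonormal type-II discrete cosine transform.

```python
import numpy as np

def dct_matrix(n):
    """Orthonormal DCT-II basis: row k holds the k-th cosine function."""
    k = np.arange(n)[:, None]
    i = np.arange(n)[None, :]
    m = np.sqrt(2.0 / n) * np.cos(np.pi * (2 * i + 1) * k / (2 * n))
    m[0] /= np.sqrt(2.0)
    return m

def dct2(image):
    """2-D DCT: transform the rows, then the columns."""
    rows, cols = image.shape
    return dct_matrix(rows) @ image @ dct_matrix(cols).T

def high_freq_fraction(image, cutoff):
    """Share of spectral energy at frequency index >= cutoff on either axis."""
    energy = dct2(image) ** 2
    r, c = np.indices(energy.shape)
    high = (r >= cutoff) | (c >= cutoff)
    return energy[high].sum() / energy.sum()

# Smooth synthetic image: almost all energy sits at low frequencies.
ramp = np.linspace(0.0, 1.0, 64)
smooth = np.outer(ramp, ramp)

# Hand-made stand-in for an artefact: a faint checkerboard (+/- 0.05).
r, c = np.indices(smooth.shape)
grid = 0.05 * np.where((r + c) % 2 == 0, 1.0, -1.0)
with_grid = smooth + grid

smooth_score = high_freq_fraction(smooth, cutoff=16)
grid_score = high_freq_fraction(with_grid, cutoff=16)
print(f"smooth image:      {smooth_score:.6f}")
print(f"with grid pattern: {grid_score:.6f}")
```

The checkerboard is barely visible in pixel space but raises the high-frequency share by orders of magnitude, which is the kind of signal a frequency-based detector can pick up. A real detector would be trained on many real and generated images rather than rely on a fixed threshold.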
