CREDIT: KAIST

KAIST researchers upgraded their smart glasses with a low-power multicore processor to employ stereo vision and deep-learning algorithms, making the user interface and experience more intuitive and convenient

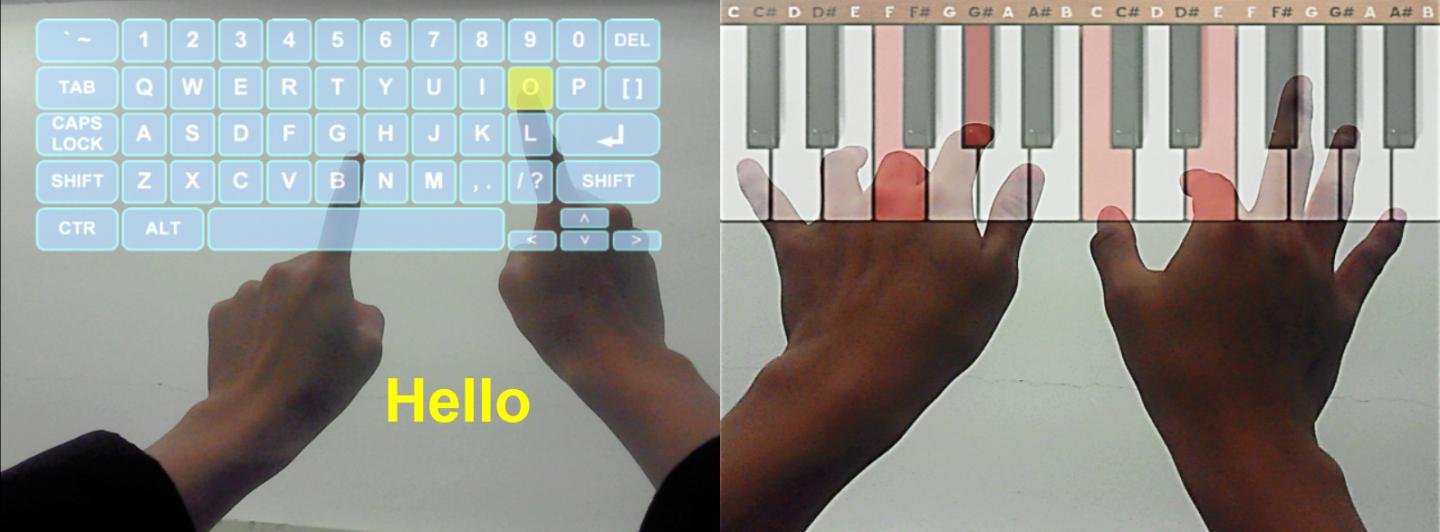

K-Glass, the augmented reality (AR) smart glasses first developed by the Korea Advanced Institute of Science and Technology (KAIST) in 2014 and followed by a second version in 2015, is back with an even stronger model. The latest version, which KAIST researchers are calling K-Glass 3, lets users type text messages or enter keywords for Internet searches on a virtual keyboard, and it even offers a virtual piano keyboard.

Currently, most wearable head-mounted displays (HMDs) suffer from limited user interfaces, short battery life, and heavy weight. Some HMDs, such as Google Glass, use a touch panel and voice commands as an interface, but they are considered merely an extension of smartphones and are not optimized for wearable smart glasses. Recently, gaze recognition was proposed for HMDs, including K-Glass 2, but gaze alone is insufficient to deliver a natural user interface (UI) and user experience (UX) of the kind that recognizing the user’s gestures provides, because of its limited interactivity and lengthy gaze-calibration time, which can be up to several minutes.

As a solution, Professor Hoi-Jun Yoo and his team from the Electrical Engineering Department recently developed K-Glass 3 with a low-power natural UI and UX processor to enable convenient typing and screen pointing on HMDs with just bare hands. This processor is composed of a pre-processing core to implement stereo vision, seven deep-learning cores to accelerate real-time scene recognition within 33 milliseconds, and one rendering engine for the display.
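The 33-millisecond figure is what makes the recognition "real-time": it corresponds to roughly 30 results per second, a common video frame rate. The short sketch below shows that arithmetic. It assumes one recognition result per frame with no pipelining, which the article does not state, and the function name `frames_per_second` is only illustrative.

```python
def frames_per_second(latency_ms: float) -> float:
    """Convert a per-frame processing time in milliseconds to frames per second.

    Assumes frames are processed one after another, so throughput is simply
    the reciprocal of the latency.
    """
    if latency_ms <= 0:
        raise ValueError("latency must be positive")
    return 1000.0 / latency_ms


if __name__ == "__main__":
    # 33 ms is the recognition time quoted in the article.
    print(f"{frames_per_second(33.0):.1f} frames per second")
```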

The stereo-vision camera, located on the front of K-Glass 3, works in a manner similar to three-dimensional (3D) sensing in human vision. The camera’s two lenses, displaced horizontally from one another like the left and right eyes, capture images of the same objects or scenes; the two slightly different images are then combined to extract spatial depth information, which is necessary to reconstruct 3D environments. The camera’s vision algorithm consumes 20 milliwatts on average, allowing it to operate in the Glass for more than 24 hours without interruption.
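How two horizontally offset lenses yield depth can be illustrated with the standard pinhole stereo relation, depth = focal length × baseline / disparity, where disparity is the horizontal shift of a point between the two images. The sketch below is a textbook illustration, not KAIST's algorithm. The focal length and baseline values are hypothetical, since the article gives no camera parameters. The second function simply converts the quoted 20 milliwatts over 24 hours into watt-hours.

```python
def depth_from_disparity(disparity_px: float,
                         focal_length_px: float,
                         baseline_m: float) -> float:
    """Depth in meters from the pinhole stereo model: Z = f * B / d."""
    if disparity_px <= 0:
        # Zero disparity would place the point infinitely far away.
        raise ValueError("disparity must be positive")
    return focal_length_px * baseline_m / disparity_px


def energy_watt_hours(power_mw: float, hours: float) -> float:
    """Energy in watt-hours used at a constant average power."""
    return power_mw / 1000.0 * hours


# Hypothetical camera parameters, chosen only for illustration.
FOCAL_LENGTH_PX = 500.0  # focal length expressed in pixels
BASELINE_M = 0.06        # distance between the two lenses, in meters

if __name__ == "__main__":
    # A larger shift between the two images means a closer object.
    for disparity in (100.0, 50.0, 25.0):
        depth = depth_from_disparity(disparity, FOCAL_LENGTH_PX, BASELINE_M)
        print(f"disparity {disparity:5.1f} px -> depth {depth:.2f} m")

    # 20 mW on average for 24 hours, the figures quoted in the article.
    print(f"energy over 24 h: {energy_watt_hours(20.0, 24.0):.2f} Wh")
```

Halving the disparity doubles the estimated depth, which is why distant objects, whose disparity is only a few pixels, are measured less precisely than nearby hands.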

The research team adopted deep-learning multi-core technology designed for mobile devices to recognize the user’s gestures based on the depth information. This technology has greatly improved the Glass’s recognition accuracy for images and speech while shortening the time needed to process and analyze data. In addition, the Glass’s multi-core processor goes idle when it detects no motion from the user; otherwise, it executes complex deep-learning algorithms with minimal power to achieve high performance.
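The idle-when-still behavior can be pictured as a gate in front of the recognizer: the expensive step runs only when consecutive frames differ enough. The sketch below is an assumption-heavy illustration. The article does not say how the processor detects motion, so simple frame differencing stands in for it here, and the names `mean_abs_diff`, `gated_recognition`, and `threshold` are hypothetical.

```python
from typing import Callable, Iterable, List, Optional, Sequence

Frame = Sequence[float]  # a flattened depth image, one value per pixel


def mean_abs_diff(a: Frame, b: Frame) -> float:
    """Average absolute per-pixel difference between two equally sized frames."""
    if len(a) == 0 or len(a) != len(b):
        raise ValueError("frames must be non-empty and the same size")
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


def gated_recognition(frames: Iterable[Frame],
                      recognize: Callable[[Frame], str],
                      threshold: float) -> List[Optional[str]]:
    """Run `recognize` only on frames that differ from the previous frame.

    Returns one entry per frame: the recognizer's output when motion was
    detected, or None when the recognizer stayed idle. Frame differencing
    is an assumed stand-in for the chip's actual motion detection.
    """
    results: List[Optional[str]] = []
    previous: Optional[Frame] = None
    for frame in frames:
        # The first frame has nothing to compare against, so it stays idle.
        moved = previous is not None and mean_abs_diff(frame, previous) > threshold
        results.append(recognize(frame) if moved else None)
        previous = frame
    return results


if __name__ == "__main__":
    frames = [[0.0, 0.0], [0.0, 0.0], [1.0, 1.0], [1.0, 1.0]]
    print(gated_recognition(frames, lambda f: "gesture", threshold=0.5))
```

In this toy run the recognizer fires only on the third frame, the one that differs from its predecessor, and stays idle for the rest.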

Professor Yoo said, “We have succeeded in fabricating a low-power multi-core processor that consumes only 126.1 milliwatts of power with a high efficiency rate. It is essential to develop a smaller, lighter, and low-power processor if we want to incorporate the widespread use of smart glasses and wearable devices into everyday life. K-Glass 3’s more intuitive UI and convenient UX permit users to enjoy enhanced AR experiences such as a keyboard or a better, more responsive mouse.”

Learn more: K-Glass 3 offers users a keyboard to type text
