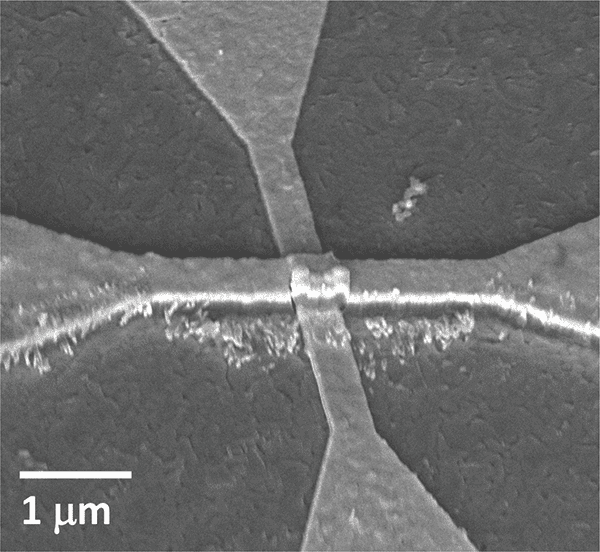

SEM image of the artificial neuron device.

Images courtesy of Nature Nanotechnology

Training neural networks to perform tasks, such as recognizing images or navigating self-driving cars, could one day require less computing power and hardware thanks to a new artificial neuron device developed by researchers at the University of California San Diego. The device can run neural network computations using 100 to 1000 times less energy and area than existing CMOS-based hardware.

Researchers report their work in a paper published Mar. 18 in Nature Nanotechnology.

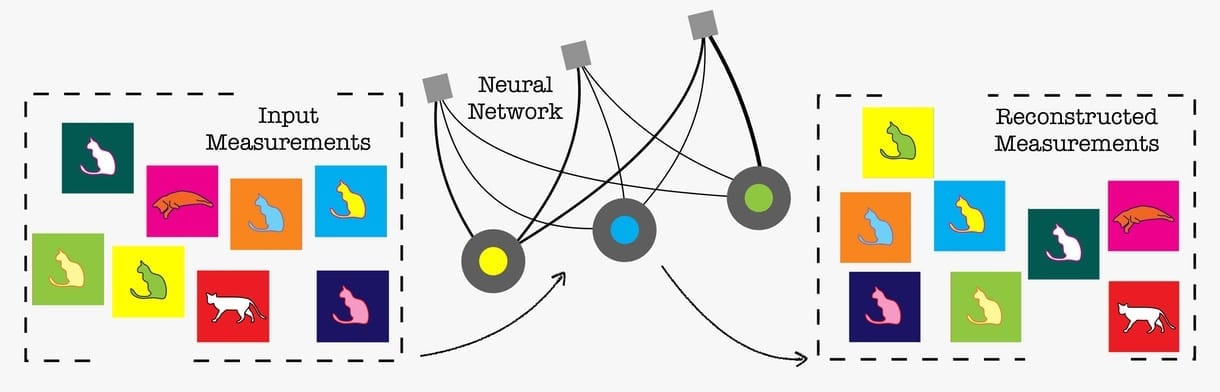

Neural networks are a series of connected layers of artificial neurons, where the output of one layer provides the input to the next. That input is generated by applying a mathematical calculation called a non-linear activation function, a critical part of running a neural network. But applying this function requires a lot of computing power and circuitry because it involves transferring data back and forth between two separate units: the memory and an external processor.
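To make the layer-to-layer flow concrete, here is a minimal software sketch of it. It is an illustration only, not the researchers' implementation: the function names, the weights, and the choice of `tanh` as the default non-linear function are all assumptions made for the example.

```python
import math

def dense_layer(inputs, weights, biases, activation):
    """Compute one layer: a weighted sum per neuron, then the activation."""
    outputs = []
    for neuron_weights, bias in zip(weights, biases):
        weighted_sum = sum(w * x for w, x in zip(neuron_weights, inputs)) + bias
        outputs.append(activation(weighted_sum))
    return outputs

def forward(inputs, layers, activation=math.tanh):
    """Feed the output of each layer in as the input to the next."""
    signal = inputs
    for weights, biases in layers:
        signal = dense_layer(signal, weights, biases, activation)
    return signal

# Hypothetical network: 2 inputs -> 2 hidden neurons -> 1 output neuron.
layers = [
    ([[0.5, -0.5], [1.0, 1.0]], [0.0, 0.0]),
    ([[1.0, -1.0]], [0.0]),
]
result = forward([1.0, 1.0], layers)
```

In software, the activation step is a single function call per neuron. In conventional hardware, that same step is where the data shuttling between memory and processor described above takes place.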

Now, UC San Diego researchers have developed a nanometer-sized device that can efficiently carry out the activation function.

“Neural network computations in hardware get increasingly inefficient as the neural network models get larger and more complex,” said corresponding author Duygu Kuzum, a professor of electrical and computer engineering at the UC San Diego Jacobs School of Engineering. “We developed a single nanoscale artificial neuron device that implements these computations in hardware in a very area- and energy-efficient way.”

The study was a collaboration between researchers in UC San Diego’s Department of Electrical and Computer Engineering, led by Kuzum, who is part of the university’s Center for Machine-Integrated Computing and Security, and a DOE Energy Frontier Research Center (EFRC) led by physics professor Ivan Schuller. The EFRC focuses on developing hardware implementations of energy-efficient artificial neural networks.

The device implements one of the most commonly used activation functions in neural network training, the rectified linear unit (ReLU). This function needs hardware that can undergo a gradual change in resistance in order to work. That is exactly what the UC San Diego researchers engineered their device to do: it can gradually switch from an insulating to a conducting state, and it does so with the help of a little bit of heat.
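For readers unfamiliar with the function, the rectified linear unit has a simple standard definition: it outputs zero for negative inputs and passes positive inputs through unchanged. The sketch below shows that mathematical definition only. The sample values are arbitrary, and the article does not specify how the device's resistance maps onto this curve.

```python
def relu(x):
    """Rectified linear unit: zero for negative inputs, the input itself otherwise."""
    return max(0.0, x)

# Arbitrary sample inputs, chosen only to show both sides of the function.
samples = [-2.0, -0.5, 0.0, 0.5, 2.0]
outputs = [relu(x) for x in samples]
# outputs == [0.0, 0.0, 0.0, 0.5, 2.0]
```

The output rises linearly with the input once it is positive, which is consistent with the need for a gradual rather than abrupt response in the hardware.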

This switch is what’s called a Mott transition. It takes place in a nanometers-thin layer of vanadium dioxide. Above this layer is a nanowire heater made of titanium and gold. When current flows through the nanowire, the vanadium dioxide layer slowly heats up, causing a slow, controlled switch from insulating to conducting.

“This device architecture is very interesting and innovative,” said first author Sangheon Oh, an electrical and computer engineering Ph.D. student in Kuzum’s lab. Typically, materials in a Mott transition experience an abrupt switch from insulating to conducting because the current flows directly through the material, he explained. “In this case, we flow current through a nanowire on top of the material to heat it and induce a very gradual resistance change.”

To implement the device, the researchers first fabricated an array of these so-called activation (or neuron) devices, along with a synaptic device array. Then they integrated the two arrays on a custom printed circuit board and connected them together to create a hardware version of a neural network.

The researchers used the network to process an image—in this case, a picture of Geisel Library at UC San Diego. The network performed a type of image processing called edge detection, which identifies the outlines or edges of objects in an image. This experiment demonstrated that the integrated hardware system can perform convolution operations that are essential for many types of deep neural networks.
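Edge detection by convolution can be sketched in a few lines of software. The example below is an assumption-laden illustration, not the experiment itself: the article does not say which filter the researchers used, so a standard Sobel kernel stands in, and a tiny synthetic image replaces the photograph of Geisel Library.

```python
def convolve2d(image, kernel):
    """Slide the kernel over the image and sum the element-wise products.

    This is the 'valid' sliding-window form (no padding, no kernel flip)
    commonly called convolution in deep learning.
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    output = []
    for r in range(out_h):
        row = []
        for c in range(out_w):
            total = sum(
                image[r + i][c + j] * kernel[i][j]
                for i in range(kh)
                for j in range(kw)
            )
            row.append(total)
        output.append(row)
    return output

# Assumed filter: a Sobel kernel that responds to vertical edges.
SOBEL_X = [
    [-1, 0, 1],
    [-2, 0, 2],
    [-1, 0, 1],
]

# Toy 4x6 image: dark left half (0), bright right half (1).
image = [[0, 0, 0, 1, 1, 1] for _ in range(4)]

edges = convolve2d(image, SOBEL_X)
# edges == [[0, 4, 4, 0], [0, 4, 4, 0]]
```

The non-zero values line up with the boundary between the dark and bright halves, which is the edge. Each output value is a weighted sum, the kind of operation the article attributes to the integrated hardware system.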

The researchers say the technology could be further scaled up to do more complex tasks such as facial and object recognition in self-driving cars. With interest and collaboration from industry, this could happen, noted Kuzum.

“Right now, this is a proof of concept,” Kuzum said. “It’s a tiny system in which we only stacked one synapse layer with one activation layer. By stacking more of these together, you could make a more complex system for different applications.”

Original Article: Artificial Neuron Device Could Shrink Energy Use and Size of Neural Network Hardware

