Researchers from NVIDIA, led by Stan Birchfield and Jonathan Tremblay, developed a first-of-its-kind deep learning-based system that can teach a robot to complete a task simply by observing the actions of a human. The method is designed to improve communication between humans and robots while furthering research that will enable people to work alongside robots seamlessly.

“For robots to perform useful tasks in real-world settings, it must be easy to communicate the task to the robot; this includes both the desired result and any hints as to the best means to achieve that result,” the researchers stated in their research paper. “With demonstrations, a user can communicate a task to the robot and provide clues as to how to best perform the task.”

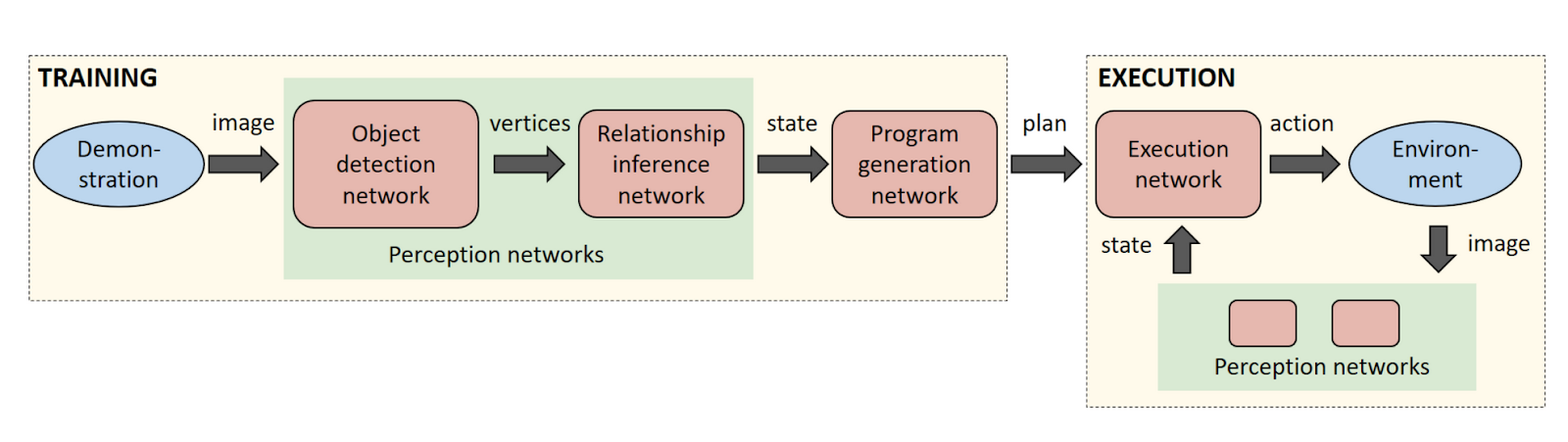

Using NVIDIA TITAN X GPUs, the researchers trained a sequence of neural networks to handle perception, program generation, and program execution. As a result, the robot was able to learn a task from a single demonstration in the real world.

Once the robot sees a task, it generates a human-readable description of the steps necessary to re-perform the task. The description allows the user to quickly identify and correct any issues with the robot’s interpretation of the human demonstration before execution on the real robot.

The key to achieving this capability is leveraging the power of synthetic data to train the neural networks. Current approaches to training neural networks require large amounts of labeled training data, which is a serious bottleneck in these systems. With synthetic data generation, an almost infinite amount of labeled training data can be produced with very little effort.

This is also the first time an image-centric domain randomization approach has been used on a robot. Domain randomization is a technique to produce synthetic data with large amounts of diversity, which then fools the perception network into seeing the real-world data as simply another variation of its training data. The researchers chose to process the data in an image-centric manner to ensure that the networks are not dependent on the camera or environment.
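The idea behind domain randomization can be illustrated with a minimal sketch. The parameter names and ranges below (`num_distractors`, `light_intensity`, and so on) are assumptions chosen for illustration, not the settings the researchers used, and a real pipeline would pass each sample to a renderer that also emits the labels.

```python
import random
from dataclasses import dataclass


@dataclass
class SceneParams:
    """Hypothetical knobs a synthetic renderer might expose."""
    num_distractors: int      # random clutter objects in view
    light_intensity: float    # relative brightness of the scene light
    background_texture: int   # index into a pool of random textures
    camera_yaw_deg: float     # camera rotation around the scene
    object_hue_shift: float   # small color jitter on the target object


def sample_scene(rng: random.Random) -> SceneParams:
    # Draw every parameter independently from a wide range so that
    # no single appearance dominates the training set.
    return SceneParams(
        num_distractors=rng.randint(0, 10),
        light_intensity=rng.uniform(0.2, 2.0),
        background_texture=rng.randrange(1000),
        camera_yaw_deg=rng.uniform(-180.0, 180.0),
        object_hue_shift=rng.uniform(-0.1, 0.1),
    )


def generate_dataset(n: int, seed: int = 0) -> list:
    # A seeded generator keeps the synthetic dataset reproducible.
    rng = random.Random(seed)
    return [sample_scene(rng) for _ in range(n)]
```

Because every scene is drawn from such broad ranges, a real camera image is intended to look like just one more sample from the same distribution.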

“The perception network as described applies to any rigid real-world object that can be reasonably approximated by its 3D bounding cuboid,” the researchers said. “Despite never observing a real image during training, the perception network reliably detects the bounding cuboids of objects in real images, even under severe occlusions.”

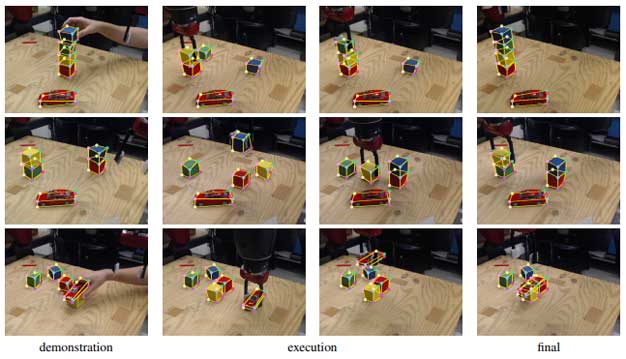

For their demonstration, the team trained object detectors on several colored blocks and a toy car. The system was taught the physical relationships between blocks: whether they are stacked on top of one another or placed next to each other.
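To make the two relationships concrete, here is a small sketch that classifies a pair of cubes from their detected positions. The article does not say how the system infers relationships, so this hand-written geometric rule is a stand-in rather than the researchers' method; the `Cuboid` type, the `relation` function, and the tolerance are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Cuboid:
    """Simplified detection: a cube given by its center and edge length (meters)."""
    name: str
    x: float
    y: float
    z: float      # height of the cube's center above the table
    size: float   # edge length


def relation(a: Cuboid, b: Cuboid, tol: float = 0.01) -> Optional[str]:
    """Return how `a` relates to `b`: 'on_top_of', 'next_to', or None."""
    horizontal = ((a.x - b.x) ** 2 + (a.y - b.y) ** 2) ** 0.5
    vertical = a.z - b.z
    # Center-to-center distance at which two cubes just touch.
    touching = (a.size + b.size) / 2
    if horizontal <= tol and abs(vertical - touching) <= tol:
        return "on_top_of"
    if abs(vertical) <= tol and abs(horizontal - touching) <= tol:
        return "next_to"
    return None
```

For example, a 5 cm red cube centered 5 cm above a 5 cm blue cube is classified as `on_top_of`, while a green cube at the same height, 5 cm to the side, is `next_to`.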

In the demonstration video, the human operator shows a pair of stacks of cubes to the robot. The system then infers an appropriate program and places the cubes in the correct order. Because it takes the current state of the world into account during execution, the system is able to recover from mistakes in real time.
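Recovering from mistakes by checking the current state of the world can be sketched as a simple closed loop. This is an illustrative assumption about the control flow, not the researchers' execution network: the program format (pairs of block and support), the `execute` function, and the `FlakyWorld` simulator are all hypothetical.

```python
def execute(program, observe, act, max_attempts=3):
    """Run each (block, support) step until the observed world matches it.

    observe() returns a dict mapping each block to what it rests on;
    act(block, support) attempts to place `block` on `support`.
    """
    for block, support in program:
        attempts = 0
        # Re-observe before every action, so a step that is already
        # satisfied is skipped and a failed step is retried.
        while observe().get(block) != support:
            if attempts == max_attempts:
                raise RuntimeError(f"could not place {block} on {support}")
            act(block, support)
            attempts += 1


class FlakyWorld:
    """Toy simulator whose first placement slips and leaves the block on the table."""

    def __init__(self):
        self.state = {"red": "table", "blue": "table"}
        self.calls = 0

    def observe(self):
        return dict(self.state)

    def act(self, block, support):
        self.calls += 1
        # Simulate a dropped block on the very first attempt.
        self.state[block] = "table" if self.calls == 1 else support
```

Running `execute([("blue", "table"), ("red", "blue")], world.observe, world.act)` on a `FlakyWorld` skips the first step, since blue is already on the table, and retries the second after the simulated slip.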

The researchers will present their paper at the International Conference on Robotics and Automation (ICRA) in Brisbane, Australia, this week.

The team says it will continue to explore the use of synthetic training data for robotic manipulation to extend the capabilities of its method to additional scenarios.

via NVIDIA: Read the research paper
