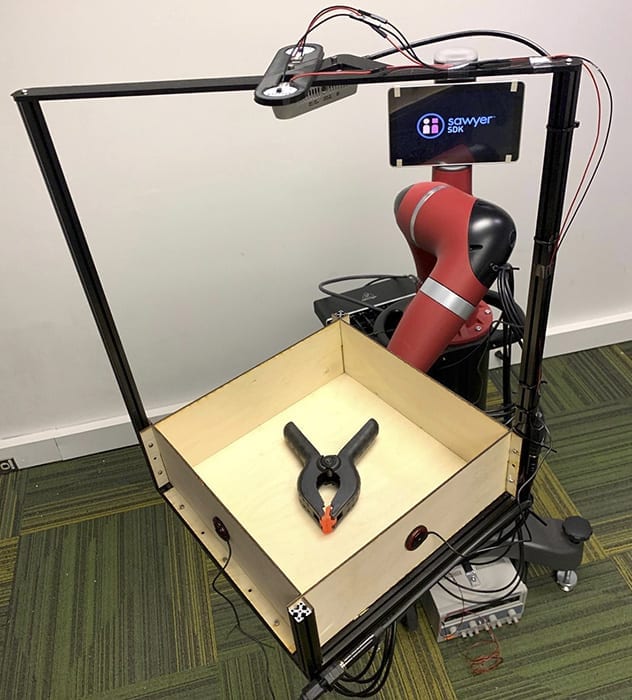

To prove that sound can be an asset to robots, SCS researchers built a dataset by recording video and audio of 60 common objects as they rolled around a tray. The team captured these interactions using Tilt-Bot — a square tray attached to the arm of a Sawyer robot.

Carnegie Mellon builds dataset capturing interaction of sound, action, vision

People rarely use just one sense to understand the world, but robots usually rely only on vision and, increasingly, touch. Carnegie Mellon University researchers find that robot perception could improve markedly by adding another sense: hearing.

In what they say is the first large-scale study of the interactions between sound and robotic action, researchers at CMU’s Robotics Institute found that sounds could help a robot differentiate between objects, such as a metal screwdriver and a metal wrench. Hearing also could help robots determine what type of action caused a sound and help them use sounds to predict the physical properties of new objects.

“A lot of preliminary work in other fields indicated that sound could be useful, but it wasn’t clear how useful it would be in robotics,” said Lerrel Pinto, who recently earned his Ph.D. in robotics at CMU and will join the faculty of New York University this fall. He and his colleagues found the performance rate was quite high, with robots that used sound successfully classifying objects 76 percent of the time.

The results were so encouraging, he added, that it might prove useful to equip future robots with instrumented canes, enabling them to tap on objects they want to identify.

The researchers presented their findings last month during the virtual Robotics: Science and Systems conference. Other team members included Abhinav Gupta, associate professor of robotics, and Dhiraj Gandhi, a former master’s student who is now a research engineer at Facebook Artificial Intelligence Research’s Pittsburgh lab.

To perform their study, the researchers created a large dataset, simultaneously recording video and audio of 60 common objects — such as toy blocks, hand tools, shoes, apples and tennis balls — as they slid or rolled around a tray and crashed into its sides. They have since released this dataset, cataloging 15,000 interactions, for use by other researchers.

The team captured these interactions using an experimental apparatus they called Tilt-Bot — a square tray attached to the arm of a Sawyer robot. It was an efficient way to build a large dataset; they could place an object in the tray and let Sawyer spend a few hours moving the tray in random directions with varying levels of tilt as cameras and microphones recorded each action.

They also collected some data beyond the tray, using Sawyer to push objects on a surface.

Though the size of this dataset is unprecedented, other researchers have also studied how intelligent agents can glean information from sound. For instance, Oliver Kroemer, assistant professor of robotics, led research into using sound to estimate the amount of granular materials, such as rice or pasta, by shaking a container, or to estimate the flow of those materials from a scoop.

Pinto said the usefulness of sound for robots was therefore not surprising, though he and the others were surprised at just how useful it proved to be. They found, for instance, that a robot could use what it learned about the sound of one set of objects to make predictions about the physical properties of previously unseen objects.

“I think what was really exciting was that when it failed, it would fail on things you expect it to fail on,” he said. For instance, a robot couldn’t use sound to tell the difference between a red block and a green block. “But if it was a different object, such as a block versus a cup, it could figure that out.”
