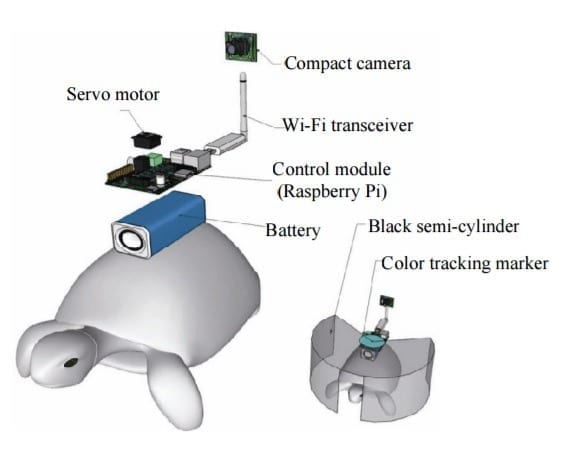

A human controller influences the turtle’s escape behavior by sending left and right signals via Wi-Fi to a control system on the back of the turtle.

In the 2009 blockbuster “Avatar,” a human remotely controls the body of an alien by injecting human intelligence into a remotely located, biological body. Although this remains in the realm of science fiction, researchers are developing so-called ‘brain-computer interfaces’ (BCIs), building on recent advances in electronics and computing. These technologies can ‘read’ human thought and use it to control machines such as humanoid robots.

New research has demonstrated the possibility of combining a BCI with a device that transmits information from a computer to a brain, or a so-called ‘computer-to-brain interface’ (CBI). The combination of these devices could be used to establish a functional link between the brains of different species. Now, researchers from the Korea Advanced Institute of Science and Technology (KAIST) have developed a human-turtle interaction system in which a signal originating from a human brain can affect where a turtle moves.

Unlike previous research that has tried to control animal movement with invasive methods, most notably in insects, the KAIST researchers propose a conceptual system that guides an animal’s path by controlling its instinctive escape behaviour. They chose the turtle because of its cognitive abilities and its ability to distinguish different wavelengths of light. Specifically, turtles recognize a white light source as an open space and move toward it. They also show specific avoidance behaviour toward things that might obstruct their view, and they move toward and away from obstacles in their environment in a predictable manner. It was this instinctive, predictable behaviour that the researchers induced using the BCI.

The human-turtle setup is as follows. A head-mounted display (HMD) is combined with a BCI to immerse the human user in the turtle’s environment. The human operator wears the BCI-HMD system, while the turtle carries a ‘cyborg system’ (a camera, a Wi-Fi transceiver, a computer control module and a battery) mounted on its upper shell. Also mounted on the shell is a black semi-cylinder with a slit, which forms the ‘stimulation device’. This can be turned ±36 degrees via the BCI.

The process works like this: the human operator receives images from the camera mounted on the turtle. These real-time video images allow the operator to decide where the turtle should move. The operator issues thought commands, which the wearable BCI system recognizes from electroencephalography (EEG) signals. The BCI can distinguish between three mental states: left, right and idle. The left and right commands activate the turtle’s stimulation device via Wi-Fi, turning it so that it obstructs the turtle’s view. This triggers the turtle’s natural instinct to move toward light, so it changes direction. Finally, the operator receives updated visual feedback from the camera on the shell and in this way continues to remotely navigate the turtle’s trajectory.
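The command step of this loop can be sketched in a few lines of Python. This is only an illustrative sketch, not the KAIST implementation: the names `MentalState`, `stimulation_angle` and `run_session` are hypothetical, the sign convention (negative for a left command, positive for a right command) is an assumption, and so is the choice to return the semi-cylinder to 0 degrees when the operator is idle. Only the three mental states and the ±36 degree range come from the description above.

```python
from enum import Enum


class MentalState(Enum):
    """The three states the BCI is described as distinguishing."""
    LEFT = "left"
    RIGHT = "right"
    IDLE = "idle"


# From the article: the stimulation device can be turned +/-36 degrees.
MAX_ANGLE_DEG = 36.0


def stimulation_angle(state: MentalState) -> float:
    """Map a decoded mental state to a target angle for the semi-cylinder.

    Assumptions (not stated in the article): a left command maps to the
    negative angle, a right command to the positive angle, and idle
    returns the device to a neutral 0 degrees.
    """
    if state is MentalState.LEFT:
        return -MAX_ANGLE_DEG
    if state is MentalState.RIGHT:
        return MAX_ANGLE_DEG
    return 0.0


def run_session(decoded_states):
    """Turn a sequence of decoded states into the angles that would be sent."""
    return [stimulation_angle(state) for state in decoded_states]


if __name__ == "__main__":
    session = [MentalState.IDLE, MentalState.LEFT, MentalState.RIGHT]
    for state, angle in zip(session, run_session(session)):
        print(f"{state.value}: turn stimulation device to {angle:+.0f} degrees")
```

In a real system each angle would be transmitted over Wi-Fi to the control module on the shell, and the next camera frame would inform the operator's next command; both steps are omitted here.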

The research demonstrates that BCI-based animal guidance can work in a variety of environments, with turtles moving indoors and outdoors on many different surfaces, such as gravel and grass, and tackling a range of obstacles, such as shallow water and trees. The technology could be developed further to integrate positioning systems and improved augmented and virtual reality techniques, enabling applications that include devices for military reconnaissance and surveillance.

Learn more: Controlling turtle motion with human thought
