Researchers from the School of Interactive Computing and the Institute for Robotics and Intelligent Machines developed a new method that teaches computers to “see” and understand what humans do in a typical day.

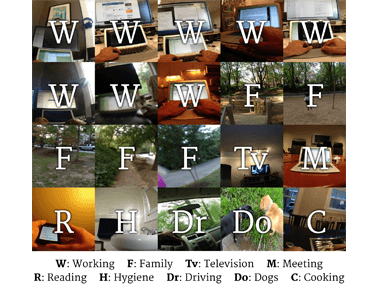

The technique drew on more than 40,000 pictures taken every 30 to 60 seconds over a six-month period by a wearable camera, and it predicted with 83 percent accuracy what activity the wearer was doing. Researchers taught the computer to categorize images across 19 activity classes. The test subject wearing the camera could review and annotate the photos at the end of each day (deleting any as needed for privacy) to ensure that they were correctly categorized.

“It was surprising how the method’s ability to correctly classify images could be generalized to another person after just two more days of annotation,” said Steven Hickson, a Ph.D. candidate in Computer Science and a lead researcher on the project.

“This work is about developing a better way to understand people’s activities, and build systems that can recognize people’s activities at a finely-grained level of detail,” said Edison Thomaz, co-author and graduate research assistant in the School of Interactive Computing. “Activity tracking devices like the Fitbit can tell how many steps you take per day, but imagine being able to track all of your activities – not just physical activities like walking and running. This work is moving toward full activity intelligence. At a technical level, we are showing that it’s becoming possible for computer vision techniques alone to be used for this.”

The group believes it has gathered the largest annotated dataset of first-person images to demonstrate that deep learning can understand human behavior and the habits of a specific person.

Daniel Castro, a Ph.D. candidate in Computer Science and a lead researcher on the project, helped present the method earlier this month at UBICOMP 2015 in Osaka, Japan. He says the reaction from conference-goers was positive.

“People liked that we had a method that combines time and images,” Castro says. “Time (of activity) can be especially important for some activity classes. This system learned how relevant images were because of people’s schedules. What does it think the image is showing? It sees both time and image probabilities and makes a better prediction.”
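As a rough illustration of how time and image probabilities might be combined, the Python sketch below multiplies the two per-class estimates and renormalizes them. This is an assumption made for illustration, not the researchers' actual fusion method; the function name `fuse_predictions`, the three activity classes, and all probability values are hypothetical.

```python
def fuse_predictions(image_probs, time_probs):
    """Combine per-class probabilities from an image model and a time-of-day model.

    Assumption: the two estimates are fused by a simple product followed by
    renormalization. The article does not describe the actual fusion rule.
    """
    # Score each class by the product of its image and time probabilities.
    scores = {c: p * time_probs.get(c, 0.0) for c, p in image_probs.items()}
    total = sum(scores.values())
    if total == 0:
        # The time model rules out every class; fall back to the image model.
        return dict(image_probs)
    return {c: s / total for c, s in scores.items()}


# Hypothetical numbers: the image alone slightly favors "eating" ...
image_probs = {"cooking": 0.40, "eating": 0.45, "working": 0.15}
# ... but at this (hypothetical) time of day the wearer is usually cooking.
time_probs = {"cooking": 0.60, "eating": 0.30, "working": 0.10}

fused = fuse_predictions(image_probs, time_probs)
best = max(fused, key=fused.get)
print(best, round(fused[best], 3))
```

With these made-up values, the image model alone would pick "eating", while the fused estimate picks "cooking", which mirrors the idea that a person's schedule can tip an ambiguous image toward the right activity.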

The ability to literally see and recognize human activities has implications in a number of areas – from developing improved personal assistant applications like Siri to helping researchers explain links between health and behavior, Thomaz says.

Castro and Hickson believe that someday within the next decade we will have ubiquitous devices that can improve our personal choices throughout the day.

“Imagine if a device could learn what I would be doing next – ideally predict it – and recommend an alternative?” Castro says. “Once it builds your own schedule by knowing what you are doing, it might tell you there is a traffic delay and you should leave sooner or take a different route.”

Read more: Researchers Develop Deep-Learning Method to Predict Daily Activities
