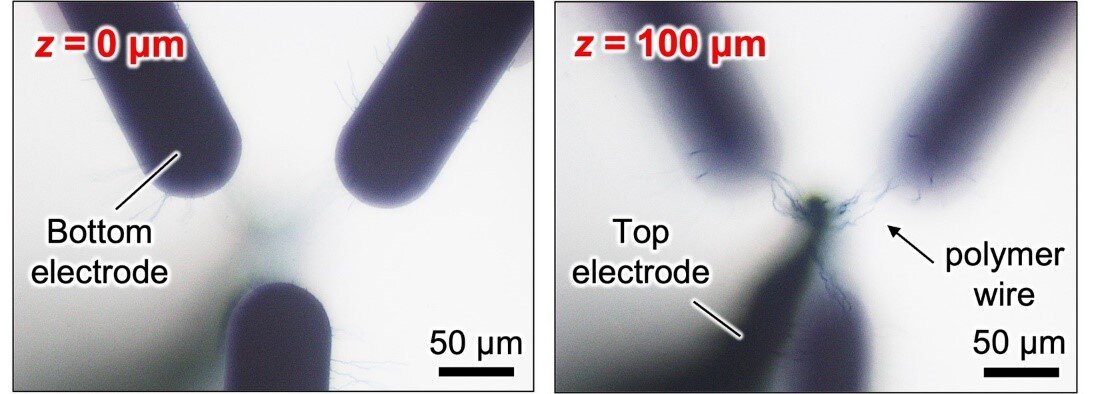

Fig. 1

Optical microscopy images of the 3D polymer wiring between a top electrode (TE) and three bottom electrodes (BEs) at vertical distances of z = 0 and 100 µm from the surface of the glass substrate.

Credit: 2023 Naruki Hagiwara et al., Advanced Functional Materials

Researchers from Japan have developed a technique for growing conductive polymer wire connections between electrodes to realize artificial neural networks that overcome the limits of traditional computer hardware.

The development of neural networks to create artificial intelligence in computers was originally inspired by how biological systems work. These ‘neuromorphic’ networks, however, run on hardware that looks nothing like a biological brain, which limits performance. Now, researchers from Osaka University and Hokkaido University plan to change this by creating neuromorphic ‘wetware’.

While neural-network models have achieved remarkable success in applications such as image generation and cancer diagnosis, they still lag far behind the general processing abilities of the human brain. In part, this is because they are implemented in software using traditional computer hardware that is not optimized for the millions of parameters and connections that these models typically require.

Neuromorphic wetware, based on memristive devices, could address this problem. A memristive device is a device whose resistance is set by its history of applied voltage and current. In this approach, electropolymerization is used to link electrodes immersed in a precursor solution using wires made of conductive polymer. The resistance of each wire is then tuned using small voltage pulses, resulting in a memristive device.
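To make the idea of history-dependent resistance concrete, here is a minimal sketch of a toy memristive element. It is not the device model from the study: the class `ToyMemristor`, its linear update rule, the bounds `g_min` and `g_max`, and the `rate` parameter are all assumptions chosen for illustration. The only point is that the conductance after a pulse train depends on which pulses came before.

```python
from dataclasses import dataclass


@dataclass
class ToyMemristor:
    """Hypothetical linear-update model; not the device physics from the study."""

    conductance: float = 0.5  # arbitrary units, kept within [g_min, g_max]
    g_min: float = 0.1        # assumed lower bound
    g_max: float = 1.0        # assumed upper bound
    rate: float = 0.05        # assumed conductance change per volt of pulse

    def apply_pulse(self, voltage: float) -> float:
        """Shift the conductance in proportion to the pulse amplitude.

        Positive pulses raise the conductance, negative pulses lower it,
        and the result is clamped to the allowed range.
        """
        updated = self.conductance + self.rate * voltage
        self.conductance = min(self.g_max, max(self.g_min, updated))
        return self.conductance

    def current(self, read_voltage: float) -> float:
        """Ohmic read-out, I = G * V; reading does not change the state."""
        return self.conductance * read_voltage


def run_pulse_train(device: ToyMemristor, pulses: list[float]) -> list[float]:
    """Apply pulses in order and return the conductance after each one."""
    return [device.apply_pulse(v) for v in pulses]


if __name__ == "__main__":
    device = ToyMemristor()
    # Two strengthening pulses followed by one weakening pulse.
    print(run_pulse_train(device, [1.0, 1.0, -1.0]))
```

In this sketch a negative pulse undoes a positive one of the same size, which loosely mirrors the tunable, reversible behavior described in the article; a real polymer wire would not be expected to follow such a simple linear rule.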

“The potential to create fast and energy-efficient networks has been shown using 1D or 2D structures,” says senior author Megumi Akai-Kasaya. “Our aim was to extend this approach to the construction of a 3D network.”

The researchers grew polymer wires from a common polymer mixture called ‘PEDOT:PSS’, which is highly conductive, transparent, flexible, and stable. A 3D structure of top and bottom electrodes was first immersed in a precursor solution. The PEDOT:PSS wires were then grown between selected electrodes by applying a square-wave voltage to these electrodes, mimicking the formation of synaptic connections through axon guidance in an immature brain.

Once a wire had formed, its characteristics, especially its conductance, were controlled using small voltage pulses applied to one electrode, which changed the electrical properties of the film surrounding the wire.

“The process is continuous and reversible,” explains lead author Naruki Hagiwara, “and this characteristic is what enables the network to be trained, just like software-based neural networks.”

The fabricated network was used to demonstrate unsupervised Hebbian learning (i.e., neurons that often fire together strengthen their shared connection over time). What’s more, the researchers were able to precisely control the conductance values of the wires so that the network could complete its tasks. Spike-based learning, another approach to neural networks that more closely mimics the processes of biological neural networks, was also demonstrated by controlling the diameter and conductivity of the wires.
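The Hebbian rule mentioned above can be sketched in a few lines of software. This is the textbook form of the rule, not the procedure used on the polymer network; the function names `hebbian_update` and `train`, the `learning_rate` value, and the binary activity patterns are assumptions made for illustration. Each weight stands in for the conductance of one wire and grows only when the units on both ends are active together.

```python
def hebbian_update(weights, pre, post, learning_rate=0.1):
    """Return new weights where w[i][j] grows by learning_rate * post[i] * pre[j].

    weights: list of rows, one row per output (post) unit
    pre:     activity of the input units (one value per column)
    post:    activity of the output units (one value per row)
    """
    return [
        [w + learning_rate * post_i * pre_j for w, pre_j in zip(row, pre)]
        for row, post_i in zip(weights, post)
    ]


def train(patterns, n_pre, n_post, learning_rate=0.1):
    """Start from zero weights and apply the Hebbian update once per pattern."""
    weights = [[0.0] * n_pre for _ in range(n_post)]
    for pre, post in patterns:
        weights = hebbian_update(weights, pre, post, learning_rate)
    return weights


if __name__ == "__main__":
    # Input 0 and output 0 are repeatedly active together; the other units stay silent.
    patterns = [([1, 0], [1, 0])] * 3
    for row in train(patterns, n_pre=2, n_post=2):
        print(row)
```

Only the connection between the co-active pair is strengthened, while the other weights stay at zero. In hardware, the analogous step would be a voltage pulse that raises the conductance of the corresponding wire.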

Next, by fabricating a chip with a larger number of electrodes and using microfluidic channels to supply the precursor solution to each electrode, the researchers hope to build a larger and more powerful network. Overall, the approach developed in this study is a big step toward realizing neuromorphic wetware and closing the gap between the cognitive abilities of humans and computers.

Original Article: Growing bio-inspired polymer brains for artificial neural networks

More from: Osaka University | Hokkaido University
