via Cornell

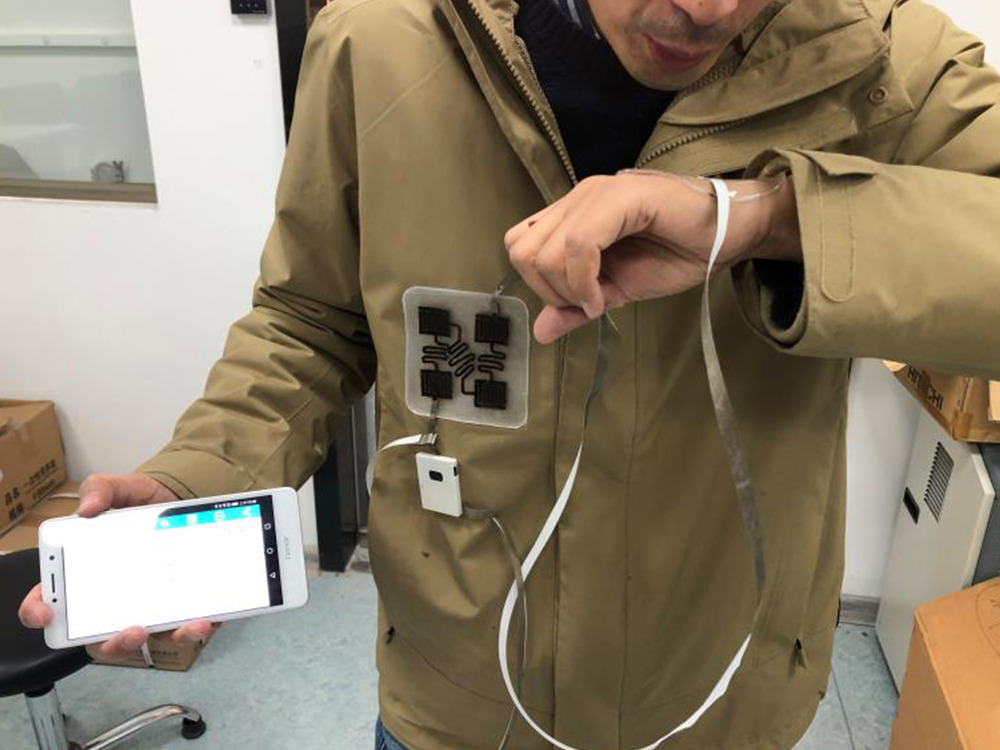

It may look like Ruidong Zhang is talking to himself, but in fact the doctoral student in information science is silently mouthing the passcode to unlock his nearby smartphone and play the next song in his playlist.

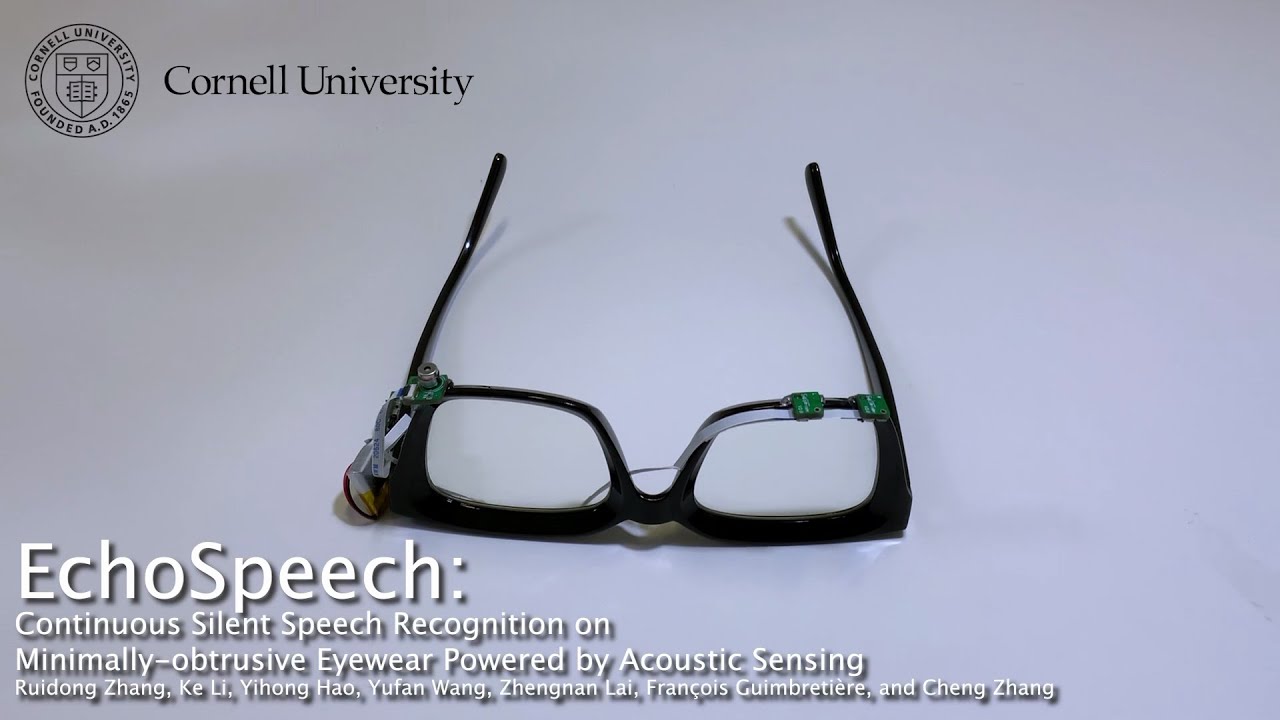

It’s not telepathy: It’s the seemingly ordinary, off-the-shelf eyeglasses he’s wearing, called EchoSpeech – a silent-speech recognition interface that uses acoustic sensing and artificial intelligence to continuously recognize up to 31 unvocalized commands, based on lip and mouth movements.

Developed by Cornell’s Smart Computer Interfaces for Future Interactions (SciFi) Lab, the low-power, wearable interface requires just a few minutes of user training data before it will recognize commands and can be run on a smartphone, researchers said.

Zhang is the lead author of “EchoSpeech: Continuous Silent Speech Recognition on Minimally-obtrusive Eyewear Powered by Acoustic Sensing,” which will be presented at the Association for Computing Machinery Conference on Human Factors in Computing Systems (CHI) this month in Hamburg, Germany.

“For people who cannot vocalize sound, this silent speech technology could be an excellent input for a voice synthesizer. It could give patients their voices back,” Zhang said of the technology’s potential use with further development.

In its present form, EchoSpeech could be used to communicate with others via smartphone in places where speech is inconvenient or inappropriate, like a noisy restaurant or quiet library. The silent speech interface can also be paired with a stylus and used with design software like CAD, all but eliminating the need for a keyboard and a mouse.

Outfitted with a pair of microphones and speakers smaller than pencil erasers, the EchoSpeech glasses become a wearable AI-powered sonar system, sending and receiving sound waves across the face and sensing mouth movements. A deep learning algorithm, also developed by SciFi Lab researchers, then analyzes the resulting echo profiles in real time, with about 95% accuracy.
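For readers curious how a sonar-style "echo profile" can be computed, here is a minimal sketch. It is not the SciFi Lab's implementation: the chirp probe signal, the 50 kHz sample rate, the 16 to 20 kHz band and every function name are assumptions chosen for illustration. The idea is to play a known signal, record what comes back, and cross-correlate the two; the delay with the strongest correlation indicates how far the sound traveled before reflecting.

```python
import math

# All parameters below are illustrative assumptions, not values from the article.
SAMPLE_RATE = 50_000  # samples per second


def make_chirp(n_samples, f_start, f_end, sample_rate):
    """Linear frequency sweep, a common probe signal in active acoustic sensing."""
    duration = n_samples / sample_rate
    sweep_rate = (f_end - f_start) / duration
    chirp = []
    for i in range(n_samples):
        t = i / sample_rate
        chirp.append(math.sin(2 * math.pi * (f_start * t + 0.5 * sweep_rate * t * t)))
    return chirp


def simulate_echo(tx, delay, gain, total_len):
    """Hypothetical received signal: one attenuated copy of tx, shifted by `delay` samples."""
    rx = [0.0] * total_len
    for i, sample in enumerate(tx):
        if i + delay < total_len:
            rx[i + delay] += gain * sample
    return rx


def echo_profile(tx, rx, max_delay):
    """Cross-correlate rx with tx at each candidate delay (in samples)."""
    profile = []
    for d in range(max_delay):
        acc = 0.0
        for i, sample in enumerate(tx):
            if i + d < len(rx):
                acc += sample * rx[i + d]
        profile.append(acc)
    return profile


tx = make_chirp(256, 16_000, 20_000, SAMPLE_RATE)
rx = simulate_echo(tx, delay=37, gain=0.4, total_len=400)
profile = echo_profile(tx, rx, max_delay=100)

# The strongest correlation marks the round-trip delay of the simulated reflection.
peak_delay = max(range(len(profile)), key=lambda d: abs(profile[d]))
print("estimated round-trip delay (samples):", peak_delay)
```

In a real system there would be many overlapping reflections, and a sequence of such profiles over time would be the input to a learned model; this sketch only recovers a single simulated delay.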

“We’re moving sonar onto the body,” said Cheng Zhang, assistant professor of information science in the Cornell Ann S. Bowers College of Computing and Information Science and director of the SciFi Lab.

“We’re very excited about this system,” he said, “because it really pushes the field forward on performance and privacy. It’s small, low-power and privacy-sensitive, which are all important features for deploying new, wearable technologies in the real world.”

The SciFi Lab has developed several wearable devices that track body, hand and facial movements using machine learning and wearable, miniature video cameras. Recently, the lab has shifted away from cameras and toward acoustic sensing to track face and body movements, citing improved battery life; tighter security and privacy; and smaller, more compact hardware. EchoSpeech builds on the lab’s similar acoustic-sensing device called EarIO, a wearable earbud that tracks facial movements.

Most technology in silent-speech recognition is limited to a select set of predetermined commands and requires the user to face or wear a camera, which is neither practical nor feasible, Cheng Zhang said. There also are major privacy concerns involving wearable cameras – for both the user and those with whom the user interacts, he said.

Acoustic-sensing technology like EchoSpeech removes the need for wearable video cameras. And because audio data is much smaller than image or video data, it requires less bandwidth to process and can be relayed to a smartphone via Bluetooth in real time, said François Guimbretière, professor in information science in Cornell Bowers CIS and a co-author.
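A back-of-envelope calculation shows why the size difference matters. Every figure below is an assumption chosen for illustration (two microphones, 50 kHz sampling, 16-bit samples, and a small uncompressed 640x480 color video stream at 30 frames per second); none comes from the article or the paper, and real systems would typically compress both streams.

```python
# Illustrative assumptions only; none of these values come from the article.
MIC_CHANNELS = 2
AUDIO_SAMPLE_RATE_HZ = 50_000
AUDIO_BITS_PER_SAMPLE = 16

VIDEO_WIDTH = 640
VIDEO_HEIGHT = 480
VIDEO_BITS_PER_PIXEL = 24
VIDEO_FRAMES_PER_SECOND = 30


def raw_audio_bits_per_second(channels, sample_rate_hz, bits_per_sample):
    """Uncompressed audio data rate in bits per second."""
    return channels * sample_rate_hz * bits_per_sample


def raw_video_bits_per_second(width, height, bits_per_pixel, fps):
    """Uncompressed video data rate in bits per second."""
    return width * height * bits_per_pixel * fps


audio_bps = raw_audio_bits_per_second(
    MIC_CHANNELS, AUDIO_SAMPLE_RATE_HZ, AUDIO_BITS_PER_SAMPLE
)
video_bps = raw_video_bits_per_second(
    VIDEO_WIDTH, VIDEO_HEIGHT, VIDEO_BITS_PER_PIXEL, VIDEO_FRAMES_PER_SECOND
)

print(f"raw audio: {audio_bps / 1e6:.1f} Mbit/s")
print(f"raw video: {video_bps / 1e6:.1f} Mbit/s")
print(f"video/audio ratio: {video_bps / audio_bps:.0f}x")
```

Under these assumed settings the raw audio stream is roughly two orders of magnitude smaller than the raw video stream, which is the kind of gap that makes a low-power wireless link to a phone plausible.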

“And because the data is processed locally on your smartphone instead of uploaded to the cloud,” he said, “privacy-sensitive information never leaves your control.”

Battery life improves substantially, too, Cheng Zhang said: ten hours with acoustic sensing versus 30 minutes with a camera, roughly a twentyfold gain.

The team is exploring commercializing the technology behind EchoSpeech, thanks in part to Ignite: Cornell Research Lab to Market gap funding.

In forthcoming work, SciFi Lab researchers are exploring smart-glass applications to track facial, eye and upper body movements.

“We think glass will be an important personal computing platform to understand human activities in everyday settings,” Cheng Zhang said.

Original Article: AI-EQUIPPED EYEGLASSES CAN READ SILENT SPEECH
