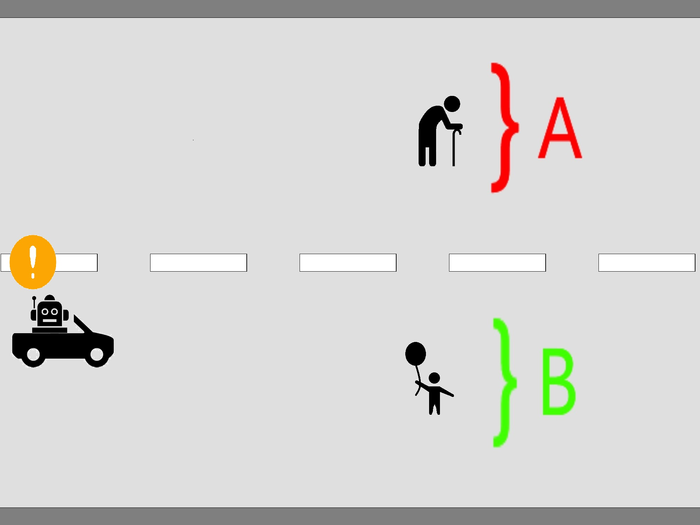

Participants rated the ethical decision of either a human or an AI driver on a scale of 1 to 5 stars.

(Image courtesy of Johann Caro-Burnett, Hiroshima University)

With the accelerating evolution of technology, artificial intelligence (AI) plays a growing role in decision-making processes. Humans are becoming increasingly dependent on algorithms to process information, recommend certain behaviors, and even take actions on their behalf. A research team has studied how humans react to the introduction of AI decision making. Specifically, they explored the question "Is society ready for AI ethical decision making?" by studying human interaction with autonomous cars.

The team published their findings on May 6, 2022, in the Journal of Behavioral and Experimental Economics.

In the first of two experiments, the researchers presented 529 human subjects with an ethical dilemma a driver might face. In the scenario the researchers created, the driver had to decide whether to crash the car into one group of people or another; the collision was unavoidable. The crash would cause severe harm to one group but would save the lives of the other. The subjects rated the driver's decision both when the driver was a human and when the driver was an AI. This first experiment was designed to measure the bias people might have against AI ethical decision making.

In the second experiment, 563 human subjects responded to the researchers' questions. This experiment examined how people react to the debate over AI ethical decisions once it becomes part of social and political discussion. There were two scenarios. In one, a hypothetical government had already decided to allow autonomous cars to make ethical decisions. In the other, the subjects could "vote" on whether to allow autonomous cars to make ethical decisions. In both cases, the subjects could choose to be in favor of or against the decisions made by the technology. This second experiment was designed to test the effect of two alternative ways of introducing AI into society.

The researchers observed that when the subjects were asked to evaluate the ethical decisions of either a human or an AI driver, they showed no definitive preference for either. However, when the subjects were asked for their explicit opinion on whether a driver should be allowed to make ethical decisions on the road, they were more strongly opposed to AI-operated cars. The researchers believe that the discrepancy between the two results is caused by a combination of two elements.

The first element is that individuals believe society as a whole does not want AI ethical decision making, and so they give positive weight to this belief when asked for their own opinion on the matter. "Indeed, when participants are asked explicitly to separate their answers from those of society, the difference between the permissibility for AI and human drivers vanishes," said Johann Caro-Burnett, an assistant professor in the Graduate School of Humanities and Social Sciences, Hiroshima University.

The second element is that when introducing this new technology into society, allowing discussion of the topic has mixed results depending on the country. “In regions where people trust their government and have strong political institutions, information and decision-making power improve how subjects evaluate the ethical decisions of AI. In contrast, in regions where people do not trust their government and have weak political institutions, decision-making capability deteriorates how subjects evaluate the ethical decisions of AI,” said Caro-Burnett.

"We find that there is a social fear of AI ethical decision-making. However, the source of this fear is not intrinsic to individuals. Indeed, this rejection of AI comes from what individuals believe is the society's opinion," said Shinji Kaneko, a professor in the Graduate School of Humanities and Social Sciences, Hiroshima University, and the Network for Education and Research on Peace and Sustainability. So, when not asked explicitly, people show no sign of bias against AI ethical decision-making. When asked explicitly, however, they show an aversion to AI. Furthermore, where there is added discussion and information on the topic, acceptance of AI improves in developed countries and worsens in developing countries.

The researchers believe this rejection of a new technology, which stems mostly from individuals incorporating their beliefs about society's opinion, is likely to apply to other machines and robots as well. "Therefore, it will be important to determine how to aggregate individual preferences into one social preference. Moreover, this task will also have to be different across countries, as our results suggest," said Kaneko.

Original Article: Researchers study society’s readiness for AI ethical decision making