Researchers have shown how to write any magnetic pattern desired onto nanowires, which could help computers mimic how the brain processes information.

Much current computer hardware, such as hard drives, uses magnetic memory devices. These rely on magnetic states – the direction microscopic magnets are pointing – to encode and read information.


Exotic magnetic states – such as a point where three south poles meet – represent complex systems. These may act in a similar way to many complex systems found in nature, such as the way our brains process information.

Computing systems that are designed to process information in similar ways to our brains are known as ‘neural networks’. There are already powerful software-based neural networks – for example, one recently beat the human champion at the game ‘Go’ – but their efficiency is limited because they run on conventional computer hardware.

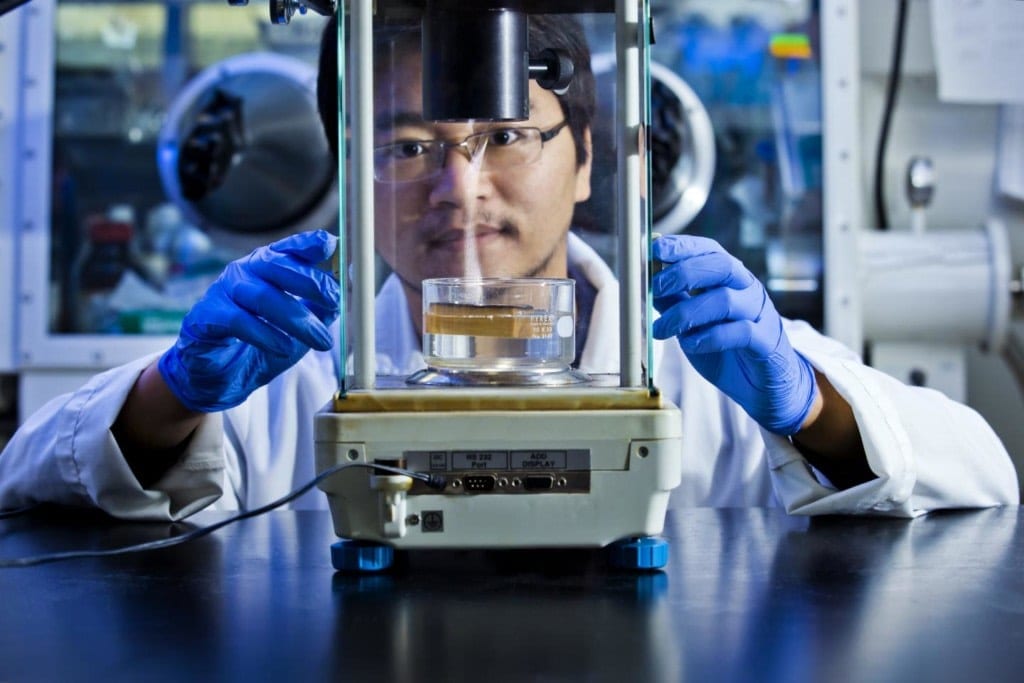

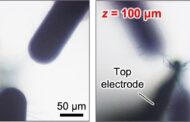

Now, researchers from Imperial College London have devised a method for writing magnetic information in any pattern desired, using a very small magnetic probe called a magnetic force microscope.

With this new writing method, arrays of magnetic nanowires may be able to function as hardware neural networks – potentially more powerful and efficient than software-based approaches.

The team, from the Departments of Physics and Materials at Imperial, demonstrated their system by writing patterns that have never been seen before. They published their results today in Nature Nanotechnology.

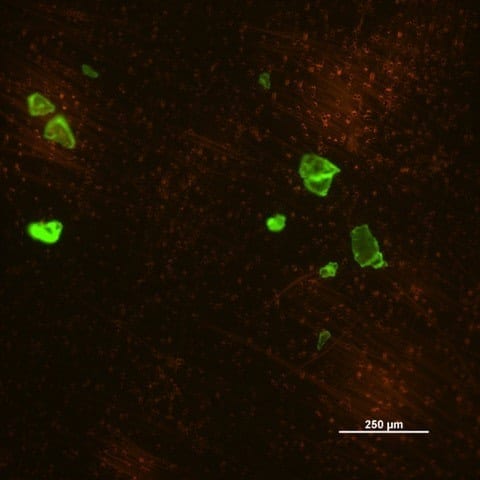

‘Hexagonal artificial spin ice ground state’ – a pattern never demonstrated before. Coloured arrows show north or south polarisation

Dr Jack Gartside, first author from the Department of Physics, said: “With this new writing method, we open up research into ‘training’ these magnetic nanowires to solve useful problems. If successful, this will bring hardware neural networks a step closer to reality.”

As well as applications in computing, the method could be used to study fundamental aspects of complex systems, by creating magnetic states that are far from optimal (such as three south poles together) and seeing how the system responds.
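To give a rough sense of what a state like "three south poles together" means, the toy Python sketch below treats a vertex as the point where three nanowire ends meet and labels each end as a north or south pole. The +1/-1 encoding and the function names are illustrative assumptions, not part of the researchers' method, and the sketch ignores the real physics of the nanowires.

```python
# Toy model only: a vertex is where three nanowire ends meet.
# Each end is a magnetic pole: +1 for north, -1 for south.
# (This encoding and these function names are illustrative assumptions.)

def vertex_charge(poles):
    """Sum the three pole values meeting at one vertex."""
    if len(poles) != 3 or any(p not in (1, -1) for p in poles):
        raise ValueError("expected three poles, each +1 (north) or -1 (south)")
    return sum(poles)


def is_far_from_optimal(poles):
    """True when all three poles match, e.g. three south poles together."""
    return abs(vertex_charge(poles)) == 3


if __name__ == "__main__":
    print(is_far_from_optimal([-1, -1, -1]))  # three south poles meeting
    print(is_far_from_optimal([1, -1, -1]))   # mixed poles
```

In this picture, a vertex with mixed poles partly cancels out, while three like poles pile up at one point, which is the kind of far-from-optimal state the writing method could create on purpose.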

Learn more: New way to write magnetic info could pave the way for hardware neural networks
