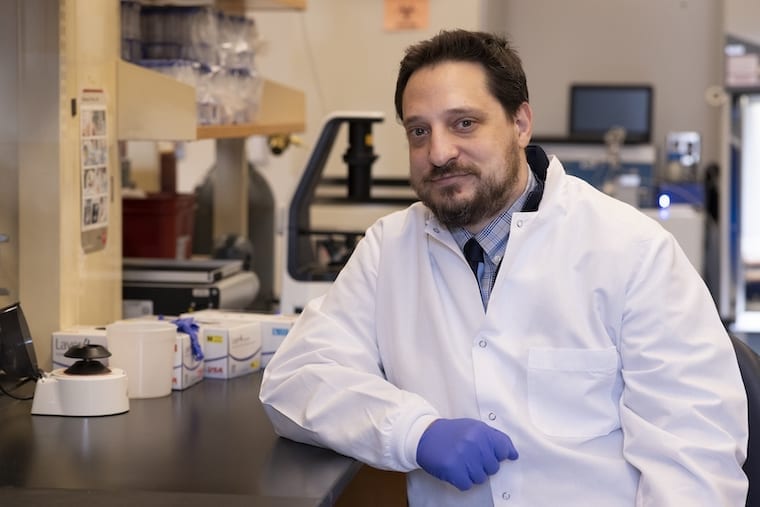

Anshumali Shrivastava, Shabnam Daghaghi, Nicholas Meisburger

Rice University computer scientists have demonstrated artificial intelligence (AI) software that runs on commodity processors and trains deep neural networks 15 times faster than platforms based on graphics processors.

“The cost of training is the actual bottleneck in AI,” said Anshumali Shrivastava, an assistant professor of computer science at Rice’s Brown School of Engineering. “Companies are spending millions of dollars a week just to train and fine-tune their AI workloads.”

Shrivastava and collaborators from Rice and Intel will present research that addresses that bottleneck April 8 at the machine learning systems conference MLSys.

Deep neural networks (DNNs) are a powerful form of artificial intelligence that can outperform humans at some tasks. DNN training is typically a series of matrix multiplication operations, an ideal workload for graphics processing units (GPUs), which cost about three times more than general-purpose central processing units (CPUs).

“The whole industry is fixated on one kind of improvement — faster matrix multiplications,” Shrivastava said. “Everyone is looking at specialized hardware and architectures to push matrix multiplication. People are now even talking about having specialized hardware-software stacks for specific kinds of deep learning. Instead of taking an expensive algorithm and throwing the whole world of system optimization at it, I’m saying, ‘Let’s revisit the algorithm.’”

Shrivastava’s lab did that in 2019, recasting DNN training as a search problem that could be solved with hash tables. Their “sub-linear deep learning engine” (SLIDE) is specifically designed to run on commodity CPUs, and Shrivastava and collaborators from Intel showed it could outperform GPU-based training when they unveiled it at MLSys 2020.
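To give a rough sense of what "training as a search problem" can mean, the sketch below uses hash tables to look up a small set of neurons likely to respond strongly to an input, rather than multiplying the input against every neuron. It is an illustration only, not the SLIDE code: the hash family (signed random projections), the class and method names, and all parameter values are assumptions made for this example, and the weight updates and table rebuilding that real training would need are left out.

```python
import random
from collections import defaultdict


def simhash(vector, planes):
    """Return one sign bit per random hyperplane (signed random projection)."""
    return tuple(
        1 if sum(v * p for v, p in zip(vector, plane)) >= 0 else 0
        for plane in planes
    )


class HashedLayer:
    """Toy layer that retrieves candidate neurons by hash-table lookup.

    Hypothetical illustration; names and defaults are not from SLIDE.
    """

    def __init__(self, n_neurons, dim, n_bits=4, n_tables=8, seed=0):
        rng = random.Random(seed)
        self.weights = [
            [rng.gauss(0, 1) for _ in range(dim)] for _ in range(n_neurons)
        ]
        # Each table gets its own independent set of hyperplanes.
        self.planes = [
            [[rng.gauss(0, 1) for _ in range(dim)] for _ in range(n_bits)]
            for _ in range(n_tables)
        ]
        # Index every neuron's weight vector into every table.
        self.tables = [defaultdict(set) for _ in range(n_tables)]
        for neuron_id, w in enumerate(self.weights):
            for table, planes in zip(self.tables, self.planes):
                table[simhash(w, planes)].add(neuron_id)

    def active_neurons(self, x):
        """Union of neurons that share a bucket with x in at least one table."""
        active = set()
        for table, planes in zip(self.tables, self.planes):
            active |= table.get(simhash(x, planes), set())
        return active

    def sparse_forward(self, x):
        """Compute dot products only for the retrieved neurons."""
        return {
            i: sum(w * v for w, v in zip(self.weights[i], x))
            for i in self.active_neurons(x)
        }


layer = HashedLayer(n_neurons=200, dim=16)
query = layer.weights[5]  # an input identical to neuron 5's weights
outputs = layer.sparse_forward(query)
print(len(outputs), "of 200 neurons evaluated")
```

Vectors that point in similar directions tend to land in the same bucket, so the lookup favors neurons with large dot products against the input while skipping most of the layer. The work per input then depends on how many neurons are retrieved rather than on the full layer width.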

The study they’ll present this week at MLSys 2021 explored whether SLIDE’s performance could be improved with vectorization and memory optimization accelerators in modern CPUs.

“Hash table-based acceleration already outperforms GPU, but CPUs are also evolving,” said study co-author Shabnam Daghaghi, a Rice graduate student. “We leveraged those innovations to take SLIDE even further, showing that if you aren’t fixated on matrix multiplications, you can leverage the power in modern CPUs and train AI models four to 15 times faster than the best specialized hardware alternative.”

Study co-author Nicholas Meisburger, a Rice undergraduate, said, “CPUs are still the most prevalent hardware in computing. The benefits of making them more appealing for AI workloads cannot be understated.”

Original Article: Rice, Intel optimize AI training for commodity hardware
