Brain models are helping to discover better robot navigation methods

On the new EBRAINS research infrastructure, scientists of the Human Brain Project have connected brain-inspired deep learning to biomimetic robots.

How the brain lets us perceive and navigate the world is one of the most fascinating aspects of cognition. When orienting ourselves, we constantly combine information from all six senses in a seemingly effortless way – a feature that even the most advanced AI systems struggle to replicate.

On the new EBRAINS research infrastructure, cognitive neuroscientists, computational modellers and roboticists are now working together to shed new light on the neural mechanisms behind this by creating robots whose internal workings mimic the brain.

“We believe that robots can be improved through the use of knowledge about the brain. But at the same time, this can also help us better understand the brain”, says Cyriel Pennartz, a Professor of Cognition and Systems Neurosciences at the University of Amsterdam.

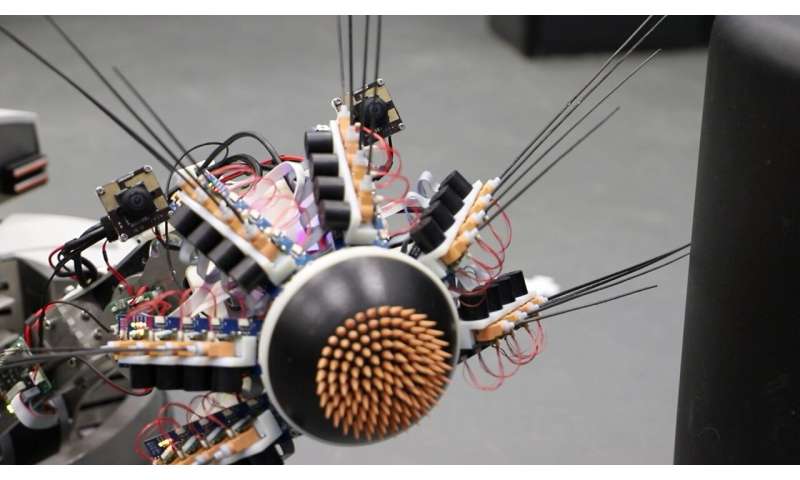

In the Human Brain Project, Pennartz has collaborated with computational modellers Shirin Dora, Sander Bohte and Jorge F. Mejias to create complex neural network architectures for perception based on real-life data from rats. Their model, dubbed “MultiPredNet”, consists of two modules for visual and tactile input and a third that merges them.

“What we were able to replicate for the first time is that the brain makes predictions across different senses”, Pennartz explains. “So you can predict how something will feel from looking at it, and vice versa”.
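The idea of predicting touch from sight can be illustrated with a toy linear generative model. This is a minimal sketch under stated assumptions, not MultiPredNet itself: a shared two-dimensional latent cause (standing in for the merging module) generates both a visual and a tactile input through the hypothetical weight matrices `W_VISUAL` and `W_TACTILE`, which are hand-picked here and assumed to have been learned already. The latent is inferred from vision alone by reducing the visual prediction error, and is then used to predict what touch would report.

```python
# Toy illustration of cross-modal prediction through a shared latent cause.
# ASSUMPTION: the weights below are hypothetical, hand-picked stand-ins for
# weights a trained model would have learned; they are not MultiPredNet's.

def matvec(w, v):
    """Multiply matrix w (a list of rows) by vector v."""
    return [sum(wij * vj for wij, vj in zip(row, v)) for row in w]

def transpose(w):
    """Return the transpose of matrix w."""
    return [list(col) for col in zip(*w)]

# Hypothetical generative weights: latent (2-d) -> visual input (3-d)
W_VISUAL = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
# Hypothetical generative weights: latent (2-d) -> tactile input (2-d)
W_TACTILE = [[0.5, 1.0], [-1.0, 0.5]]

def infer_latent(x_visual, steps=200, lr=0.2):
    """Infer the shared latent from vision alone by gradient descent on
    the squared visual prediction error 0.5 * ||x - W z||^2."""
    z = [0.0, 0.0]
    w_t = transpose(W_VISUAL)
    for _ in range(steps):
        prediction = matvec(W_VISUAL, z)
        error = [x - p for x, p in zip(x_visual, prediction)]
        # Gradient step: z <- z + lr * W^T * error
        z = [zi + lr * gi for zi, gi in zip(z, matvec(w_t, error))]
    return z

def predict_touch_from_vision(x_visual):
    """Predict the tactile input from the latent inferred from vision."""
    return matvec(W_TACTILE, infer_latent(x_visual))

if __name__ == "__main__":
    true_latent = [1.0, -0.5]
    seen = matvec(W_VISUAL, true_latent)    # what the camera sees
    felt = matvec(W_TACTILE, true_latent)   # what the whiskers would feel
    predicted = predict_touch_from_vision(seen)
    print("predicted touch:", [round(p, 3) for p in predicted])
    print("actual touch:   ", felt)
```

The prediction matches the actual touch here only because the toy data are noiseless and the visual weights pin down the latent uniquely; a real system would have to learn both mappings from experience.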

The way these networks “train” resembles how scientists think our brain learns: by constantly generating predictions about the world, comparing them to the actual sensory input, and then adapting the network to reduce future prediction errors.
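This predict, compare and adapt cycle can be sketched as a single-layer linear predictive coding network. It is a simplified illustration, not the MultiPredNet training code: the data-generating matrix, the learning rates and the helper names (`infer`, `learn`, `train`) are all hypothetical. Inference nudges the latent activity to shrink the prediction error, and learning then updates each weight using only the local error and activity it connects.

```python
import random

def matvec(w, v):
    """Multiply matrix w (a list of rows) by vector v."""
    return [sum(a * b for a, b in zip(row, v)) for row in w]

def matvec_t(w, v):
    """Compute W^T v without building the transpose."""
    return [sum(w[i][j] * v[i] for i in range(len(w))) for j in range(len(w[0]))]

def infer(w, x, steps=50, lr=0.1):
    """Inference: adjust latent z to reduce the prediction error x - W z."""
    z = [0.0] * len(w[0])
    for _ in range(steps):
        err = [xi - pi for xi, pi in zip(x, matvec(w, z))]
        z = [zj + lr * gj for zj, gj in zip(z, matvec_t(w, err))]
    err = [xi - pi for xi, pi in zip(x, matvec(w, z))]
    return z, err

def learn(w, z, err, lr=0.05):
    """Learning: local update W += lr * err * z^T (error times activity)."""
    for i, e in enumerate(err):
        for j, zj in enumerate(z):
            w[i][j] += lr * e * zj

def mean_error(w, data):
    """Mean squared prediction error left after inference."""
    total = 0.0
    for x in data:
        _, err = infer(w, x)
        total += sum(e * e for e in err)
    return total / len(data)

def train(epochs=100, seed=0):
    rng = random.Random(seed)
    # Hypothetical "world": 3-d sensory inputs generated by 2 hidden causes.
    a = [[1.0, 0.5], [0.0, 1.0], [0.5, -1.0]]
    data = [matvec(a, [rng.uniform(-1, 1), rng.uniform(-1, 1)]) for _ in range(20)]
    # Model weights start random; the model must learn to predict the inputs.
    w = [[rng.uniform(-0.5, 0.5) for _ in range(2)] for _ in range(3)]
    before = mean_error(w, data)
    for _ in range(epochs):
        for x in data:
            z, err = infer(w, x)   # predict and compare
            learn(w, z, err)       # adapt to reduce future errors
    return before, mean_error(w, data)

if __name__ == "__main__":
    before, after = train()
    print(f"mean squared prediction error: {before:.4f} -> {after:.4f}")
```

Running the script prints the mean squared prediction error before and after training, which should fall as the weights come to predict the inputs. Resetting the latent to zero for every input keeps the sketch short; fuller predictive coding models typically add priors on the latent activity and stack several such layers.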

To test how MultiPredNet performs in a body, the researchers teamed up with Martin Pearson at Bristol Robotics Lab. Together they integrated it into Whiskeye, a rodent-like robot that autonomously explores its environment using head-mounted cameras as eyes and 24 artificial whiskers to gather tactile information.

The researchers have observed first indications that the brain-based model has an edge over traditional deep learning systems: especially when it comes to navigation and the recognition of familiar scenes, MultiPredNet seems to perform better, a finding the team is now investigating further.

To accelerate this research, the robot has been recreated as a simulation on the Neurorobotics Platform of the EBRAINS research infrastructure. “This allows us to do long-duration or even parallel experiments under controlled conditions”, says Pearson. “We also plan to use the High Performance and Neuromorphic Computing Platforms for much more detailed models of control and perception in the future.”

All code and analysis tools from this work are openly available on EBRAINS, so that other researchers can run their own experiments. “It’s a unique situation”, says Pennartz: “We were able to say, here’s an interesting model of perception based on neurobiology, and it would be great to test it on a larger scale with supercomputers and embodied in a robot. Doing this is normally very complicated, but EBRAINS makes it possible.”

Katrin Amunts, Scientific Research Director of the HBP says: “To understand cognition, we will need to explore how the brain acts as part of the body in an environment. Cognitive neuroscience and robotics have much to gain from each other in this respect. The Human Brain Project brought these communities together, and now with our standing infrastructure, it’s easier than ever to collaborate.”

Pawel Swieboda, CEO of EBRAINS and Director General of the HBP, comments: “The robots of the future will benefit from innovations that connect insights from brain science to AI and robotics. With EBRAINS, Europe can be at the center of this shift to more bio-inspired AI and technology.”

Video: Perception and Recognition of Objects and Scenes

Learn how Human Brain Project scientists use EBRAINS to teach robots vision and touch

Video description on YouTube

Scientists in the Human Brain Project have constructed a brain-like deep learning network that enables more efficient robot navigation. The so-called predictive coding networks merge information from a robot's visual and tactile sensors. Using EBRAINS, the brain-based network is connected to a virtual robotics platform for rapid testing and parallel experiments.

Access the experiment via the EBRAINS Knowledge Graph: https://search.kg.ebrains.eu/instances/Model/2164c2b9bbb66b42ce358d108b5081ce

Original Article: A robot on EBRAINS has learned to combine vision and touch

More from: University of Amsterdam