Brain models are helping to discover better robot navigation methods

On the new EBRAINS research infrastructure, scientists of the Human Brain Project have connected brain-inspired deep learning to biomimetic robots.

How the brain lets us perceive and navigate the world is one of the most fascinating aspects of cognition. When orienting ourselves, we constantly combine information from all six senses in a seemingly effortless way – a feature that even the most advanced AI systems struggle to replicate.

On the new EBRAINS research infrastructure, cognitive neuroscientists, computational modellers and roboticists are now working together to shed new light on the neural mechanisms behind this, by creating robots whose internal workings mimic the brain.

“We believe that robots can be improved through the use of knowledge about the brain. But at the same time, this can also help us better understand the brain”, says Cyriel Pennartz, a Professor of Cognition and Systems Neurosciences at the University of Amsterdam.

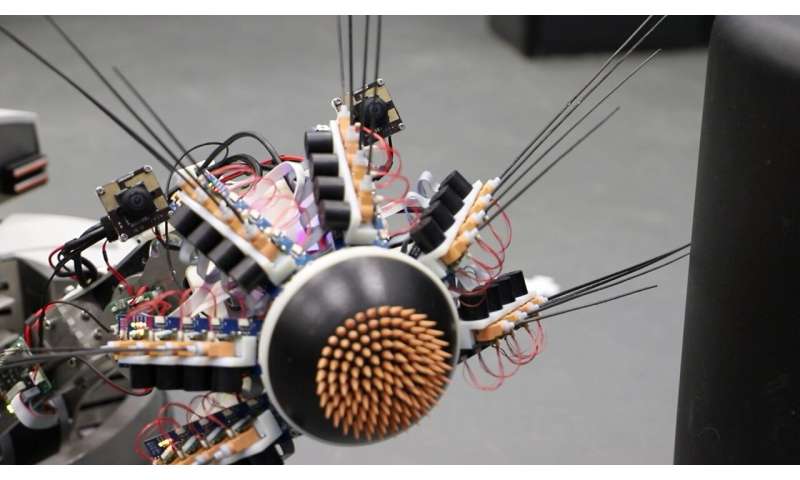

In the Human Brain Project, Pennartz has collaborated with computational modellers Shirin Dora, Sander Bohte and Jorge F. Mejias to create complex neural network architectures for perception based on real-life data from rats. Their model, dubbed “MultiPredNet”, consists of three modules: one for visual input, one for tactile input, and a third that merges the two.

“What we were able to replicate for the first time, is that the brain makes predictions across different senses”, Pennartz explains. “So you can predict how something will feel from looking at it, and vice versa”.

The way these networks “train” resembles how scientists think our brain learns: by constantly generating predictions about the world, comparing them to actual sensory inputs, and then adapting the network to reduce future prediction errors.
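The predict, compare, adapt loop can be illustrated with a toy example. The following is a minimal sketch and not the MultiPredNet code: it assumes a single shared latent value, linear predictions, and a made-up world in which one hidden cause drives two "visual" and two "tactile" readings. All names (`sense`, `infer`, `train`, `touch_error`) and all numbers are hypothetical choices for illustration.

```python
import random

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def sense(z):
    """Hypothetical world: one hidden cause z drives both senses."""
    visual = [z, 2.0 * z]
    tactile = [-z, 0.5 * z]
    return visual, tactile

def infer(observed, steps=20, rate=0.5):
    """Estimate the shared latent r from (weights, input) pairs by
    repeatedly nudging r to reduce the prediction error."""
    norm = sum(dot(w, w) for w, _ in observed) + 1e-9  # keeps the step size stable
    r = 0.0
    for _ in range(steps):
        grad = 0.0
        for w, x in observed:
            errors = [xi - wi * r for wi, xi in zip(w, x)]  # input minus prediction
            grad += dot(w, errors)
        r += rate * grad / norm
    return r

def train(samples, w_vis, w_tac, lr=0.05):
    """Adapt the weights in place so that future prediction errors shrink."""
    for vis, tac in samples:
        r = infer([(w_vis, vis), (w_tac, tac)])
        for w, x in ((w_vis, vis), (w_tac, tac)):
            for i in range(len(w)):
                w[i] += lr * (x[i] - w[i] * r) * r  # error times latent activity

def touch_error(w_vis, w_tac, z):
    """Predict touch from vision alone and return the largest absolute error."""
    vis, tac = sense(z)
    r = infer([(w_vis, vis)])
    predicted = [w * r for w in w_tac]
    return max(abs(p - t) for p, t in zip(predicted, tac))

rng = random.Random(0)
samples = [sense(rng.uniform(-1.0, 1.0)) for _ in range(2000)]
w_vis, w_tac = [0.5, 0.5], [0.5, 0.5]  # arbitrary starting weights

error_before = touch_error(w_vis, w_tac, 0.8)
train(samples, w_vis, w_tac)
error_after = touch_error(w_vis, w_tac, 0.8)
print(f"cross-modal error before training: {error_before:.4f}")
print(f"cross-modal error after training:  {error_after:.4f}")
```

In this sketch the same latent value has to explain both senses, so once the weights have been trained on paired inputs, a latent inferred from vision alone also yields a prediction of touch. The real model works on camera images and whisker signals with deep, multi-layer networks, which this example does not attempt to reproduce.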

To test how MultiPredNet performs in a body, the researchers teamed up with Martin Pearson at Bristol Robotics Lab. Together they integrated it into Whiskeye, a rodent-like robot that autonomously explores its environment, using head-mounted cameras for eyes and 24 artificial whiskers to gather tactile information.

The researchers observed first indications that the brain-based model has an edge over traditional deep learning systems: especially in navigation and the recognition of familiar scenes, MultiPredNet seems to perform better, a finding the team is now investigating further.

To accelerate this research, the robot has been recreated as a simulation on the Neurorobotics Platform of the EBRAINS research infrastructure. “This allows us to do long duration or even parallel experiments under controlled conditions”, says Pearson. “We also plan to use the High Performance and Neuromorphic Computing Platforms for much more detailed models of control and perception in the future.”

All code and analysis tools of the work are open on EBRAINS, so that researchers can run their own experiments. “It’s a unique situation”, says Pennartz: “We were able to say, here’s an interesting model of perception based on neurobiology, and it would be great to test it on a larger scale with supercomputers and embodied in a robot. Doing this is normally very complicated, but EBRAINS makes it possible.”

Katrin Amunts, Scientific Research Director of the HBP, says: “To understand cognition, we will need to explore how the brain acts as part of the body in an environment. Cognitive neuroscience and robotics have much to gain from each other in this respect. The Human Brain Project brought these communities together, and now with our standing infrastructure, it’s easier than ever to collaborate.”

Pawel Swieboda, CEO of EBRAINS and Director General of the HBP, comments: “The robots of the future will benefit from innovations that connect insights from brain science to AI and robotics. With EBRAINS, Europe can be at the center of this shift to more bio-inspired AI and technology.”

Video: Perception and Recognition of Objects and Scenes

Learn how Human Brain Project scientists use EBRAINS to teach robots vision and touch

Video description on YouTube

Scientists in the Human Brain Project have constructed a brain-like, deep learning network that enables more efficient robot navigation. The so-called predictive coding networks merge information from visual and tactile sensors of a robot. Using EBRAINS, the brain-based network is connected to a virtual robotics platform for rapid testing and parallel experiments.

Access to the Experiment via the EBRAINS Knowledge Graph: https://search.kg.ebrains.eu/instances/Model/2164c2b9bbb66b42ce358d108b5081ce

Original Article: A robot on EBRAINS has learned to combine vision and touch
