Is it a computer program or a living being? At TU Wien (Vienna), the boundaries become blurred. The neural system of a nematode was translated into computer code – and then the virtual worm was taught amazing tricks.

It is not much to look at: the nematode C. elegans is about one millimetre in length and is a very simple organism. But for science, it is extremely interesting. C. elegans is the only living being whose neural system has been analysed completely. It can be drawn as a circuit diagram or reproduced by computer software, so that the neural activity of the worm is simulated by a computer program.

Such an artificial C. elegans has now been trained at TU Wien (Vienna) to perform a remarkable trick: the computer worm has learned to balance a pole on the tip of its tail.

The Worm’s Reflexive Behaviour as Computer Code

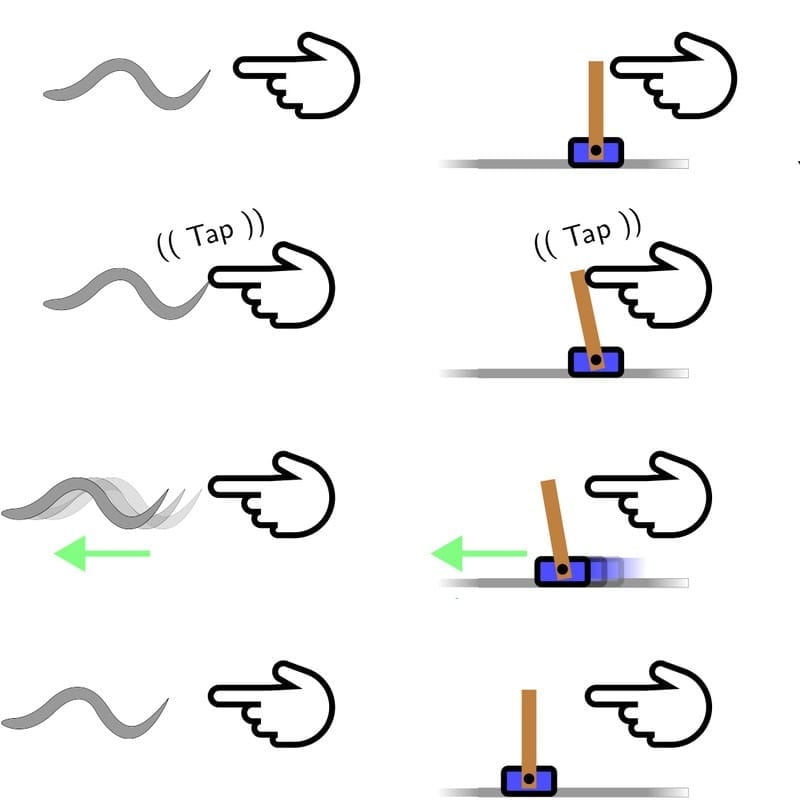

C. elegans has to get by with only about 300 neurons (302 in the adult hermaphrodite). But they are enough to make sure that the worm can find its way, eat bacteria and react to certain external stimuli. It can, for example, react to a touch on its body: a reflexive response is triggered and the worm squirms away.

This behaviour can be fully explained: it is determined by the worm’s nerve cells and the strength of the connections between them. When this simple reflex network is recreated on a computer, the simulated worm reacts in exactly the same way to a virtual stimulus – not because anybody programmed it to do so, but because this kind of behaviour is hard-wired in its neural network.

“This reflexive response of such a neural circuit is very similar to the reaction of a control agent balancing a pole”, says Ramin Hasani (Institute of Computer Engineering, TU Wien). This is a typical control problem which can be solved quite well by standard controllers: a pole is attached at its lower end to a moving object, and it is supposed to stay in a vertical position. Whenever it starts tilting, the lower end has to move slightly to keep the pole from tipping over. Much like the worm has to change its direction whenever it is stimulated by a touch, the pole must be moved whenever it tilts.
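The control problem described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the cart-pole equations and physical parameters are the common textbook ones, and the gains in `pd_controller` are hand-picked for this example. None of it is taken from the TU Wien work.

```python
import math

# Assumed textbook cart-pole parameters (not from the article).
GRAVITY = 9.8
CART_MASS = 1.0
POLE_MASS = 0.1
POLE_HALF_LENGTH = 0.5
DT = 0.02           # simulation time step in seconds
FALL_ANGLE = 0.21   # about 12 degrees; beyond this the pole counts as fallen


def step(state, force):
    """Advance the cart-pole by one Euler step under a horizontal force."""
    x, x_dot, theta, theta_dot = state
    total_mass = CART_MASS + POLE_MASS
    sin_t, cos_t = math.sin(theta), math.cos(theta)
    temp = (force + POLE_MASS * POLE_HALF_LENGTH * theta_dot ** 2 * sin_t) / total_mass
    theta_acc = (GRAVITY * sin_t - cos_t * temp) / (
        POLE_HALF_LENGTH * (4.0 / 3.0 - POLE_MASS * cos_t ** 2 / total_mass))
    x_acc = temp - POLE_MASS * POLE_HALF_LENGTH * theta_acc * cos_t / total_mass
    return (x + DT * x_dot, x_dot + DT * x_acc,
            theta + DT * theta_dot, theta_dot + DT * theta_acc)


def pd_controller(state):
    """Push the cart in the direction the pole is tilting (hand-picked gains)."""
    _, _, theta, theta_dot = state
    return 50.0 * theta + 10.0 * theta_dot


def no_controller(state):
    """Apply no force at all, so the pole simply falls over."""
    return 0.0


def steps_balanced(controller, max_steps=500, initial_tilt=0.05):
    """Count how many steps the pole stays within FALL_ANGLE of vertical."""
    state = (0.0, 0.0, initial_tilt, 0.0)
    for t in range(max_steps):
        if abs(state[2]) > FALL_ANGLE:
            return t
        state = step(state, controller(state))
    return max_steps


if __name__ == "__main__":
    print("with controller:   ", steps_balanced(pd_controller))
    print("without controller:", steps_balanced(no_controller))
```

The controller only reacts to the tilt and how fast it changes, which mirrors the reflex idea: a stimulus comes in, a corrective movement goes out. It does not try to keep the cart in one place, so the cart may drift while the pole stays upright.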

Mathias Lechner, Radu Grosu and Ramin Hasani wanted to find out whether the neural system of C. elegans, uploaded to a computer, could solve this problem – without adding any nerve cells, just by tuning the strength of the synaptic connections. This basic idea (tuning the connections between nerve cells) is also the characteristic feature of any natural learning process.

A Program without a Programmer

“With the help of reinforcement learning, a method also known as ‘learning based on experiment and reward’, the artificial reflex network was trained and optimized on the computer”, Mathias Lechner explains. And indeed, the team succeeded in teaching the virtual nerve system to balance a pole. “The result is a controller that can solve a standard technology problem – stabilizing a pole, balanced on its tip. But no human being has written even one line of code for this controller; it just emerged by training a biological nerve system”, says Radu Grosu.
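To make "learning based on experiment and reward" concrete, here is a minimal sketch in Python. It is not the team's method or network: the tiny fixed wiring in `network_force` (two sensory inputs, two interneurons, one motor output) is a hypothetical stand-in for the worm's reflex circuit, the cart-pole parameters are the usual textbook ones, and plain random hill climbing replaces the actual reinforcement learning algorithm. What it shares with the description above is the principle: the wiring never changes, only the connection strengths are tuned, and the only feedback is how long the pole stayed up.

```python
import math
import random


def cartpole_step(state, force, dt=0.02):
    """One Euler step of the textbook cart-pole equations (assumed parameters)."""
    x, x_dot, theta, theta_dot = state
    g, m_cart, m_pole, half_len = 9.8, 1.0, 0.1, 0.5
    total = m_cart + m_pole
    sin_t, cos_t = math.sin(theta), math.cos(theta)
    temp = (force + m_pole * half_len * theta_dot ** 2 * sin_t) / total
    theta_acc = (g * sin_t - cos_t * temp) / (
        half_len * (4.0 / 3.0 - m_pole * cos_t ** 2 / total))
    x_acc = temp - m_pole * half_len * theta_acc * cos_t / total
    return (x + dt * x_dot, x_dot + dt * x_acc,
            theta + dt * theta_dot, theta_dot + dt * theta_acc)


def network_force(weights, state):
    """Hypothetical fixed wiring: 2 sensory inputs -> 2 interneurons -> 1 motor output."""
    _, _, theta, theta_dot = state
    h1 = math.tanh(weights[0] * theta + weights[1] * theta_dot)
    h2 = math.tanh(weights[2] * theta + weights[3] * theta_dot)
    return 10.0 * (weights[4] * h1 + weights[5] * h2)


def reward(weights, max_steps=200):
    """Reward = number of steps the pole stays within about 12 degrees of vertical."""
    state = (0.0, 0.0, 0.05, 0.0)  # start with a slight tilt
    for t in range(max_steps):
        if abs(state[2]) > 0.21:
            return t
        state = cartpole_step(state, network_force(weights, state))
    return max_steps


def train(iterations=200, noise=0.5, seed=0):
    """Random hill climbing: perturb the weights, keep the change if reward does not drop."""
    rng = random.Random(seed)
    best = [0.0] * 6              # six connection strengths, all silent at first
    best_reward = reward(best)
    for _ in range(iterations):
        candidate = [w + rng.gauss(0.0, noise) for w in best]
        r = reward(candidate)
        if r >= best_reward:
            best, best_reward = candidate, r
    return best, best_reward


if __name__ == "__main__":
    print("reward before training:", reward([0.0] * 6))
    weights, trained_reward = train()
    print("reward after training: ", trained_reward)
```

Because a candidate is only kept when it does at least as well as the current best, the reward can never decrease during training. Whether it reaches the maximum depends on the random seed and the number of iterations.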

The team is going to explore the capabilities of such control circuits further. The project raises the question of whether there is a fundamental difference between living nerve systems and computer code. Are machine learning and the activity of our brain the same on a fundamental level? At least we can be pretty sure that the simple nematode C. elegans does not care whether it lives as a worm in the ground or as a virtual worm on a computer hard drive.

Learn more: Worm Uploaded to a Computer and Trained to Balance a Pole
