A machine learning algorithm can detect signs of anxiety and depression in the speech patterns of young children, potentially providing a fast and easy way of diagnosing conditions that are difficult to spot and often overlooked in young people, according to new research published in the Journal of Biomedical and Health Informatics.

Around one in five children suffer from anxiety and depression, collectively known as “internalizing disorders.” But because children under the age of eight can’t reliably articulate their emotional suffering, adults need to infer their mental state and recognise potential mental health problems. Waiting lists for appointments with psychologists, insurance issues, and parents’ failure to recognise the symptoms all contribute to children missing out on vital treatment.

“We need quick, objective tests to catch kids when they are suffering,” says Ellen McGinnis, a clinical psychologist at the University of Vermont Medical Center’s Vermont Center for Children, Youth and Families and lead author of the study. “The majority of kids under eight are undiagnosed.”

Early diagnosis is critical because children respond well to treatment while their brains are still developing, but if left untreated they are at greater risk of substance abuse and suicide later in life. Standard diagnosis involves a 60-90 minute semi-structured interview with a trained clinician and the child’s primary caregiver. Ellen McGinnis, along with University of Vermont biomedical engineer and study senior author Ryan McGinnis, has been looking for ways to use artificial intelligence and machine learning to make diagnosis faster and more reliable.

The researchers used an adapted version of a mood induction task called the Trier-Social Stress Task, which is intended to cause feelings of stress and anxiety in the subject. A group of 71 children between the ages of three and eight were asked to improvise a three-minute story, and told that they would be judged based on how interesting it was. The researcher acting as the judge remained stern throughout the speech, and gave only neutral or negative feedback. After 90 seconds, and again with 30 seconds left, a buzzer would sound and the judge would tell them how much time was left.

“The task is designed to be stressful, and to put them in the mindset that someone was judging them,” says Ellen McGinnis.

The children were also diagnosed using a structured clinical interview and parent questionnaire, both well-established ways of identifying internalizing disorders in children.

The researchers used a machine learning algorithm to analyze statistical features of the audio recording of each child’s story and relate them to the child’s diagnosis. They found the algorithm was highly successful at identifying children with a diagnosis, and that the middle phase of the recordings, between the two buzzers, was the most predictive.
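To make the three phases concrete, here is a minimal sketch of how a recording could be cut at the two buzzers. It assumes the timings described above (a three-minute story, with buzzers after 90 seconds and with 30 seconds left). The function and constant names are hypothetical, and this is not the researchers' code or their feature set.

```python
from typing import Dict, List, Sequence

# Timings taken from the task description in the article.
TASK_SECONDS = 180                   # three-minute story
FIRST_BUZZER = 90                    # buzzer after 90 seconds
SECOND_BUZZER = TASK_SECONDS - 30    # buzzer with 30 seconds left (150 s)


def split_into_phases(samples: Sequence[float], sample_rate: int) -> Dict[str, List[float]]:
    """Split one mono recording into the three phases bounded by the buzzers.

    `samples` is a sequence of audio samples and `sample_rate` is in samples
    per second. Both names are illustrative assumptions, not from the study.
    """
    first = FIRST_BUZZER * sample_rate
    second = SECOND_BUZZER * sample_rate
    return {
        "before_first_buzzer": list(samples[:first]),
        "between_buzzers": list(samples[first:second]),
        "after_second_buzzer": list(samples[second:]),
    }


# Usage with a synthetic, silent placeholder signal (not real data).
placeholder = [0.0] * (TASK_SECONDS * 10)   # 10 samples per second
phases = split_into_phases(placeholder, sample_rate=10)
phase_seconds = {name: len(chunk) // 10 for name, chunk in phases.items()}
```

In a pipeline of this shape, statistical features would then be computed separately for each phase, which is what allows one phase to be compared with another for predictive value.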

“The algorithm was able to identify children with a diagnosis of an internalizing disorder with 80 percent accuracy, and in most cases that compared really well to the accuracy of the parent checklist,” says Ryan McGinnis. It can also give the results much more quickly – the algorithm requires just a few seconds of processing time once the task is complete to provide a diagnosis.

The algorithm identified eight different audio features of the children’s speech, but three in particular stood out as highly indicative of internalizing disorders: low-pitched voices, repeatable speech inflections and content, and a higher-pitched response to the surprising buzzer. Ellen McGinnis says these features fit well with what you might expect from someone suffering from depression. “A low-pitched voice and repeatable speech elements mirrors what we think about when we think about depression: speaking in a monotone voice, repeating what you’re saying,” she says.

The higher-pitched response to the buzzer is also similar to the response the researchers found in their previous work, where children with internalizing disorders were found to exhibit a larger turning-away response from a fearful stimulus in a fear induction task.

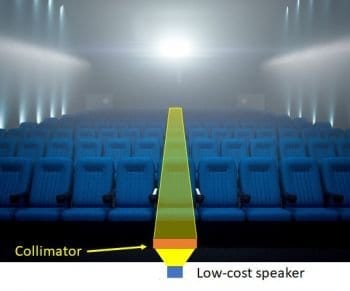

The voice analysis has a similar diagnostic accuracy to the motion analysis in that earlier work, but Ryan McGinnis thinks it would be much easier to use in a clinical setting. The fear task requires a darkened room, a toy snake, motion sensors attached to the child, and a guide, while the voice task needs only a judge, a way to record speech, and a buzzer to interrupt. “This would be more feasible to deploy,” he says.

Ellen McGinnis says the next step will be to develop the speech analysis algorithm into a universal screening tool for clinical use, perhaps via a smartphone app that could record and analyze results immediately. The voice analysis could also be combined with the motion analysis into a battery of technology-assisted diagnostic tools to help identify children at risk of anxiety and depression before even their parents suspect that anything is wrong.

Learn more: UVM Study: AI Can Detect Depression in a Child’s Speech
