Research Image

CREDIT: POSTECH

Breast cancer has the highest incidence rate of any cancer among women. Moreover, of the six major cancers, it is the only one that has shown an increasing trend over the past 20 years.

The chance of survival is higher when breast cancer is detected and treated early. However, the survival rate drops sharply to less than 75% after stage 3, which makes early detection through regular medical check-ups critical for reducing patient mortality. Recently, a research team at POSTECH developed an AI network system for ultrasonography to accurately detect and diagnose breast cancer.

A POSTECH research team led by Professor Chulhong Kim (Department of Convergence IT Engineering, Department of Electrical Engineering, and Department of Mechanical Engineering), together with Sampa Misra and Chiho Yoon (Department of Electrical Engineering), has developed a deep learning-based multimodal fusion network for segmentation and classification of breast cancers using B-mode and strain elastography ultrasound images. The findings from the study were published in Bioengineering & Translational Medicine.

Ultrasonography is one of the key medical imaging modalities for evaluating breast lesions. To distinguish benign from malignant lesions, computer-aided diagnosis (CAD) systems have offered radiologists a great deal of help by automatically segmenting and identifying features of lesions.

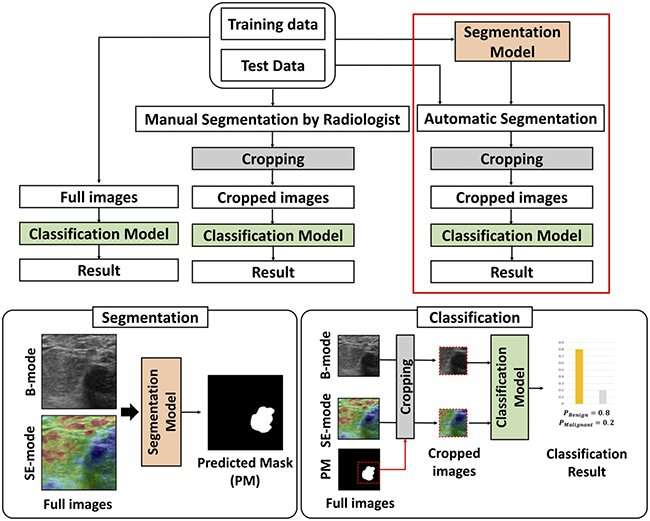

Here, the team presented deep learning (DL)-based methods to segment the lesions and then classify them as benign or malignant, using both B-mode and strain elastography (SE-mode) images. First, the team constructed a ‘weighted multimodal U-Net (W-MM-U-Net)’ model, which uses a weighted-skip connection method to assign an optimal weight to each imaging modality when segmenting lesions. They also proposed a ‘multimodal fusion framework (MFF)’ that operates on cropped B-mode and SE-mode ultrasound (US) lesion images to classify lesions as benign or malignant.
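The article does not spell out how the weighted-skip connection is built, so the following is only a minimal sketch of one plausible reading: feature maps from a B-mode encoder and an SE-mode encoder are blended with normalized per-modality weights before being passed along a skip connection. The function name `weighted_skip_fusion`, the softmax normalization, and the array shapes are all assumptions made for illustration, not details taken from the study.

```python
import numpy as np

def softmax(x):
    """Numerically stable softmax over a 1-D array."""
    e = np.exp(x - np.max(x))
    return e / e.sum()

def weighted_skip_fusion(b_mode_feat, se_mode_feat, modality_logits):
    """Blend two encoder feature maps with normalized modality weights.

    Hypothetical helper: in a trained network `modality_logits` would be
    learnable parameters; here they are plain numbers supplied by the caller.
    """
    if b_mode_feat.shape != se_mode_feat.shape:
        raise ValueError("feature maps must have the same shape")
    w = softmax(np.asarray(modality_logits, dtype=float))
    fused = w[0] * b_mode_feat + w[1] * se_mode_feat
    return fused, w

# Stand-in feature maps (channels, height, width); real ones would come
# from the B-mode and SE-mode encoder branches.
rng = np.random.default_rng(0)
b_feat = rng.normal(size=(8, 16, 16))
se_feat = rng.normal(size=(8, 16, 16))

# Equal logits give each modality a weight of 0.5.
fused, weights = weighted_skip_fusion(b_feat, se_feat, modality_logits=[0.0, 0.0])
```

With equal logits the result is a plain average of the two feature maps; raising one logit shifts the blend toward that modality, which is the sense in which a weight reflects the importance of each input.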

The MFF consists of an integrated feature network (IFN) and a decision network (DN). Unlike other recent fusion methods, the proposed MFF method can simultaneously learn complementary information from convolutional neural networks (CNNs) trained with B-mode and SE-mode US images. The CNN features are ensembled using the multimodal EmbraceNet model, while the DN classifies the images using those features.
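As a rough illustration of the fusion step, the sketch below follows the general EmbraceNet idea: each modality's feature vector is projected to a common size (a "docking" step), and each component of the fused vector is then drawn from one randomly chosen modality. The layer sizes, the random untrained weights, and the helper names `embrace_fuse` and `decision_network` are assumptions for illustration only; the team's actual IFN and DN are not described in enough detail here to reproduce.

```python
import numpy as np

def embrace_fuse(docked, probs, rng):
    """EmbraceNet-style fusion: each output component is taken from one
    modality, sampled according to `probs`."""
    docked = np.stack(docked)                  # (modalities, c)
    m, c = docked.shape
    choice = rng.choice(m, size=c, p=probs)    # chosen modality per component
    mask = np.eye(m)[choice].T                 # one-hot mask, shape (m, c)
    return (mask * docked).sum(axis=0)

def decision_network(fused, w, b):
    """Hypothetical stand-in for the DN: one linear layer plus softmax."""
    logits = fused @ w + b
    e = np.exp(logits - logits.max())
    return e / e.sum()

rng = np.random.default_rng(0)
c = 32                                         # common (docked) feature size

# Stand-ins for CNN features of a cropped B-mode and SE-mode lesion image.
b_feat = rng.normal(size=64)
se_feat = rng.normal(size=48)

# Docking layers: linear projection + ReLU, with untrained random weights.
dock_b = rng.normal(size=(64, c)) * 0.1
dock_se = rng.normal(size=(48, c)) * 0.1
docked = [np.maximum(b_feat @ dock_b, 0.0), np.maximum(se_feat @ dock_se, 0.0)]

fused = embrace_fuse(docked, probs=[0.5, 0.5], rng=rng)

# Two output classes: benign and malignant.
w_dn = rng.normal(size=(c, 2)) * 0.1
class_probs = decision_network(fused, w_dn, np.zeros(2))
```

Because the weights are random, `class_probs` carries no diagnostic meaning; the point is only the data flow from per-modality features, through a shared fused representation, to a two-class decision.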

According to experimental results on clinical data, the method correctly classified seven benign patients as benign in three of the five trials and six malignant patients as malignant in all five trials. These results indicate that the proposed method outperforms conventional single-modal and multimodal methods and could enhance radiologists' classification accuracy for breast cancer detection in US images.

Professor Chulhong Kim explained, “We were able to increase the accuracy of lesion segmentation by determining the importance of each input modal and automatically giving the proper weight.” He added, “We trained each deep learning model and the ensemble model at the same time to have a much better classification performance than the conventional single modal or other multimodal methods.”

Original Article: AI-powered ultrasound imaging that detects breast cancer
