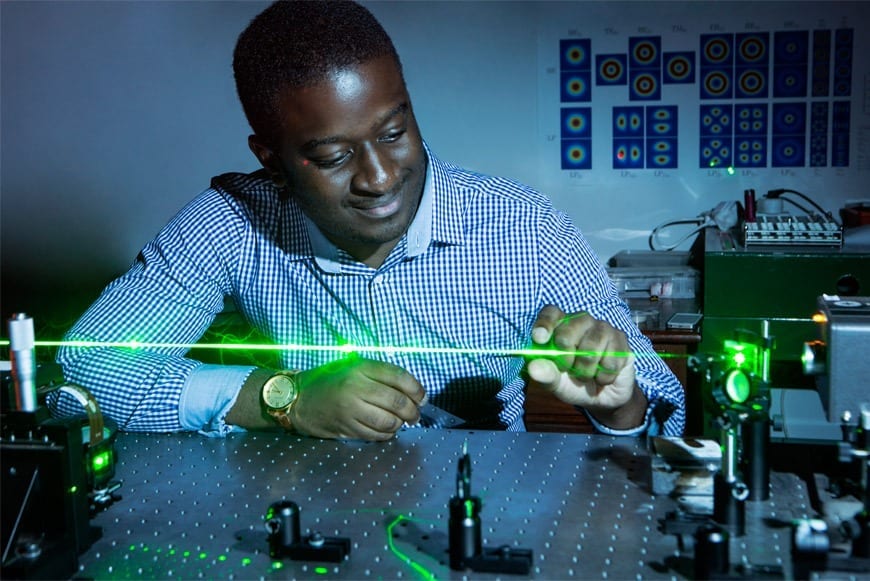

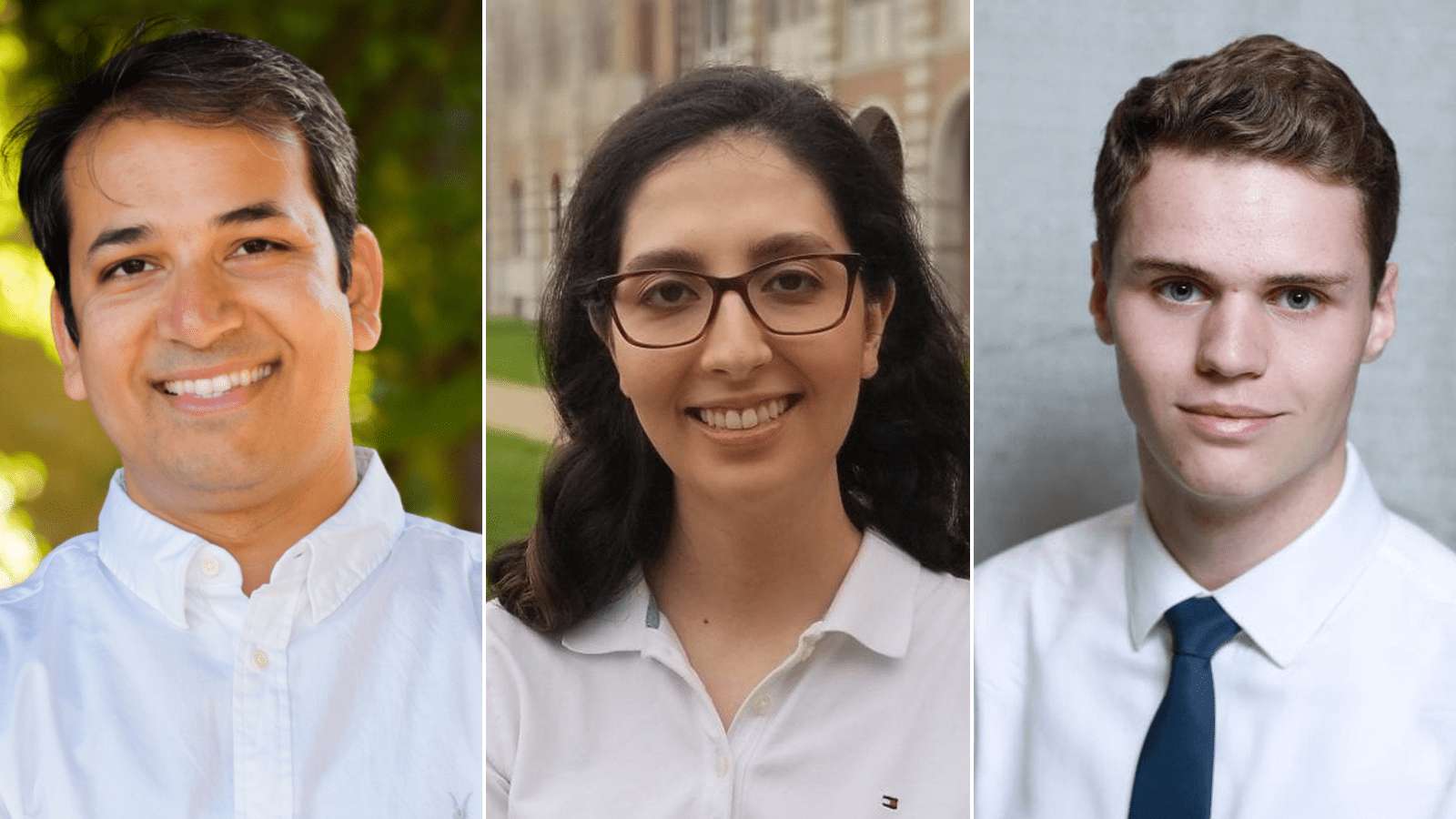

Anshumali Shrivastava, Shabnam Daghaghi, Nicholas Meisburger

Rice University computer scientists have demonstrated artificial intelligence (AI) software that runs on commodity processors and trains deep neural networks up to 15 times faster than platforms based on graphics processors.

“The cost of training is the actual bottleneck in AI,” said Anshumali Shrivastava, an assistant professor of computer science at Rice’s Brown School of Engineering. “Companies are spending millions of dollars a week just to train and fine-tune their AI workloads.”

Shrivastava and collaborators from Rice and Intel will present research that addresses that bottleneck April 8 at the machine learning systems conference MLSys.

Deep neural networks (DNNs) are a powerful form of artificial intelligence that can outperform humans at some tasks. DNN training is typically a series of matrix multiplication operations, an ideal workload for graphics processing units (GPUs), which cost about three times more than general-purpose central processing units (CPUs).

“The whole industry is fixated on one kind of improvement — faster matrix multiplications,” Shrivastava said. “Everyone is looking at specialized hardware and architectures to push matrix multiplication. People are now even talking about having specialized hardware-software stacks for specific kinds of deep learning. Instead of taking an expensive algorithm and throwing the whole world of system optimization at it, I’m saying, ‘Let’s revisit the algorithm.’”

Shrivastava’s lab did that in 2019, recasting DNN training as a search problem that could be solved with hash tables. Their “sub-linear deep learning engine” (SLIDE) is specifically designed to run on commodity CPUs, and Shrivastava and collaborators from Intel showed it could outperform GPU-based training when they unveiled it at MLSys 2020.
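To make the idea of "training as a search problem" more concrete, here is a minimal sketch of hash-table-based neuron lookup. It is an illustration under stated assumptions, not SLIDE's actual code: the article says only that hash tables are used, so the choice of signed random projections (SimHash) as the hash function, the class name `HashedLayer`, and parameters such as `num_tables` and `bits_per_table` are all hypothetical.

```python
import random
from collections import defaultdict


def simhash(vec, planes):
    """Signed-random-projection hash: one bit per random hyperplane."""
    bits = 0
    for plane in planes:
        dot = sum(v * p for v, p in zip(vec, plane))
        bits = (bits << 1) | (1 if dot >= 0 else 0)
    return bits


class HashedLayer:
    """Hypothetical layer that looks up likely-active neurons in hash tables.

    Illustrative only; the hash family and parameters are assumptions.
    """

    def __init__(self, weights, num_tables=8, bits_per_table=4, seed=0):
        rng = random.Random(seed)
        dim = len(weights[0])
        self.weights = weights
        # One independent set of random hyperplanes per table.
        self.planes = [
            [[rng.gauss(0.0, 1.0) for _ in range(dim)]
             for _ in range(bits_per_table)]
            for _ in range(num_tables)
        ]
        # Each table maps a hash code to the ids of neurons hashed there.
        self.tables = [defaultdict(list) for _ in range(num_tables)]
        for neuron_id, w in enumerate(weights):
            for table, planes in zip(self.tables, self.planes):
                table[simhash(w, planes)].append(neuron_id)

    def candidates(self, x):
        """Ids of neurons sharing a bucket with x in at least one table."""
        found = set()
        for table, planes in zip(self.tables, self.planes):
            found.update(table.get(simhash(x, planes), ()))
        return found

    def forward(self, x):
        """Compute activations only for the retrieved neurons."""
        return {
            i: sum(a * b for a, b in zip(self.weights[i], x))
            for i in self.candidates(x)
        }
```

In this sketch, `forward` computes dot products only for the neurons returned by `candidates`, instead of multiplying the input by the full weight matrix. Neurons whose weight vectors point in a similar direction to the input are more likely to share a bucket with it, so the lookup tends to return the neurons with the largest activations. A real training system would also have to update the tables as the weights change, which is omitted here.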

The study they’ll present this week at MLSys 2021 explored whether SLIDE’s performance could be improved with vectorization and memory optimization accelerators in modern CPUs.

“Hash table-based acceleration already outperforms GPU, but CPUs are also evolving,” said study co-author Shabnam Daghaghi, a Rice graduate student. “We leveraged those innovations to take SLIDE even further, showing that if you aren’t fixated on matrix multiplications, you can leverage the power in modern CPUs and train AI models four to 15 times faster than the best specialized hardware alternative.”

Study co-author Nicholas Meisburger, a Rice undergraduate, said, “CPUs are still the most prevalent hardware in computing. The benefits of making them more appealing for AI workloads cannot be understated.”

Original Article: Rice, Intel optimize AI training for commodity hardware
