A massively parallel amplitude-only Fourier neural network

Researchers invent an optical convolutional neural network accelerator for machine learning

SUMMARY

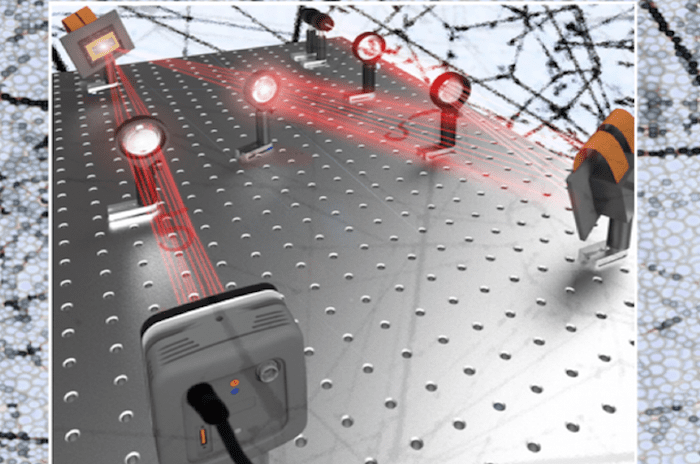

Researchers at the George Washington University, together with researchers at the University of California, Los Angeles, and the deep-tech venture startup Optelligence LLC, have developed an optical convolutional neural network accelerator capable of processing information on the order of petabytes per second. This innovation, which harnesses the massive parallelism of light, heralds a new era of optical signal processing for machine learning, with applications in self-driving cars, 5G networks, data centers, biomedical diagnostics, data security and more.

THE SITUATION

Global demand for machine learning hardware is dramatically outpacing the supply of computing power. State-of-the-art electronic hardware, such as graphics processing units and tensor processing unit accelerators, helps mitigate this, but it is intrinsically limited by serial, iterative data processing and by delays arising from wiring and circuit constraints. Optical alternatives to electronic hardware could speed up machine learning by processing information in a single, non-iterative pass. However, photonic integrated circuits can hold only a limited number of components, which restricts their interconnectivity, while free-space spatial light modulators are restricted to slow programming speeds.

THE SOLUTION

To overcome these limits, the researchers replaced the spatial light modulators with digital micromirror-based technology, making the system more than 100 times faster. The non-iterative timing of this processor, combined with rapid programmability and massive parallelization, enables it to outperform even top-of-the-line graphics processing units by more than an order of magnitude, with room for further optimization beyond the initial prototype.

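Unlike electronic machine learning hardware, which processes information sequentially, this processor relies on Fourier optics, a frequency-filtering approach. A lens optically produces the Fourier transform of the input, and by the convolution theorem a convolution in the spatial domain becomes a much simpler element-wise multiplication in the frequency domain. The digital micromirror technology performs that multiplication, so the convolutions the neural network requires are carried out in one parallel step.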
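The equivalence that the processor exploits can be checked numerically. The following is a minimal sketch, assuming NumPy and circular (wrap-around) convolution so that the discrete convolution theorem holds exactly; the function names are hypothetical, and the comments mapping each step to lenses and a Fourier-plane filter assume a classic 4f lens arrangement purely for illustration. It is not the researchers' implementation. Note also that the title's "amplitude-only" indicates the hardware modulates only the amplitude of light, so the filter it can display is more constrained than the full complex-valued spectrum used here.

```python
import numpy as np


def direct_conv2d_circular(image, kernel):
    """Direct 2-D circular convolution, computed one multiply-add at a time."""
    n_rows, n_cols = image.shape
    k_rows, k_cols = kernel.shape
    out = np.zeros_like(image, dtype=float)
    for i in range(n_rows):
        for j in range(n_cols):
            acc = 0.0
            for u in range(k_rows):
                for v in range(k_cols):
                    # Indices wrap around the image edges (circular convolution).
                    acc += kernel[u, v] * image[(i - u) % n_rows, (j - v) % n_cols]
            out[i, j] = acc
    return out


def fourier_conv2d(image, kernel):
    """Same convolution via the convolution theorem: transform, multiply, transform back."""
    # Zero-pad the kernel to the image size so both spectra have the same shape.
    padded = np.zeros_like(image, dtype=float)
    padded[: kernel.shape[0], : kernel.shape[1]] = kernel
    image_spectrum = np.fft.fft2(image)    # step a first lens would perform optically
    kernel_spectrum = np.fft.fft2(padded)  # filter pattern placed in the Fourier plane
    product = image_spectrum * kernel_spectrum  # element-wise: one multiply per frequency bin
    return np.real(np.fft.ifft2(product))  # step a second lens would perform optically


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    img = rng.random((8, 8))
    ker = rng.random((3, 3))
    # Both routes give the same feature map, up to floating-point rounding.
    print(np.allclose(direct_conv2d_circular(img, ker), fourier_conv2d(img, ker)))  # True
```

The direct route needs a loop over every output pixel and every kernel weight, while the Fourier route reduces the convolution itself to a single element-wise product; in an optical system the two transforms are performed by light propagating through lenses rather than by computation.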
