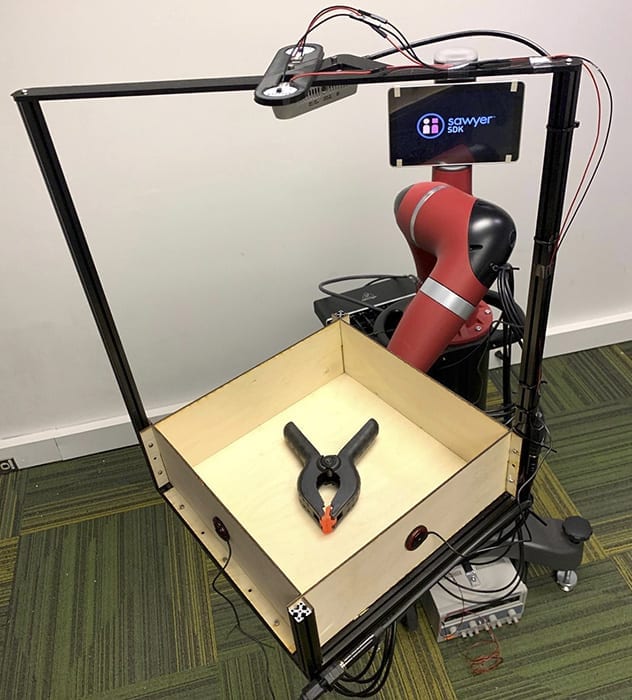

To prove that sound can be an asset to robots, SCS researchers built a dataset by recording video and audio of 60 common objects as they rolled around a tray. The team captured these interactions using Tilt-Bot — a square tray attached to the arm of a Sawyer robot.

Carnegie Mellon builds dataset capturing interaction of sound, action, vision

People rarely use just one sense to understand the world, but robots usually rely only on vision and, increasingly, touch. Carnegie Mellon University researchers have found that robot perception could improve markedly by adding another sense: hearing.

In what they say is the first large-scale study of the interactions between sound and robotic action, researchers at CMU’s Robotics Institute found that sounds could help a robot differentiate between objects, such as a metal screwdriver and a metal wrench. Hearing also could help robots determine what type of action caused a sound and help them use sounds to predict the physical properties of new objects.

“A lot of preliminary work in other fields indicated that sound could be useful, but it wasn’t clear how useful it would be in robotics,” said Lerrel Pinto, who recently earned his Ph.D. in robotics at CMU and will join the faculty of New York University this fall. He and his colleagues found the performance rate was quite high, with robots that used sound successfully classifying objects 76 percent of the time.

The results were so encouraging, he added, that it might prove useful to equip future robots with instrumented canes, enabling them to tap on objects they want to identify.

The researchers presented their findings last month during the virtual Robotics: Science and Systems conference. Other team members included Abhinav Gupta, associate professor of robotics, and Dhiraj Gandhi, a former master’s student who is now a research engineer at Facebook Artificial Intelligence Research’s Pittsburgh lab.

To perform their study, the researchers created a large dataset, simultaneously recording video and audio of 60 common objects — such as toy blocks, hand tools, shoes, apples and tennis balls — as they slid or rolled around a tray and crashed into its sides. They have since released this dataset, cataloging 15,000 interactions, for use by other researchers.

The team captured these interactions using an experimental apparatus they called Tilt-Bot — a square tray attached to the arm of a Sawyer robot. It was an efficient way to build a large dataset; they could place an object in the tray and let Sawyer spend a few hours moving the tray in random directions with varying levels of tilt as cameras and microphones recorded each action.

They also collected some data beyond the tray, using Sawyer to push objects on a surface.

Though the size of this dataset is unprecedented, other researchers have also studied how intelligent agents can glean information from sound. For instance, Oliver Kroemer, assistant professor of robotics, led research into using sound to estimate the amount of granular materials, such as rice or pasta, in a container by shaking it, or to estimate the flow of those materials from a scoop.

Pinto said the usefulness of sound for robots was therefore not surprising, though he and the others were surprised at just how useful it proved to be. They found, for instance, that a robot could use what it learned about the sound of one set of objects to make predictions about the physical properties of previously unseen objects.

“I think what was really exciting was that when it failed, it would fail on things you expect it to fail on,” he said. For instance, a robot couldn’t use sound to tell the difference between a red block and a green block. “But if it was a different object, such as a block versus a cup, it could figure that out.”
