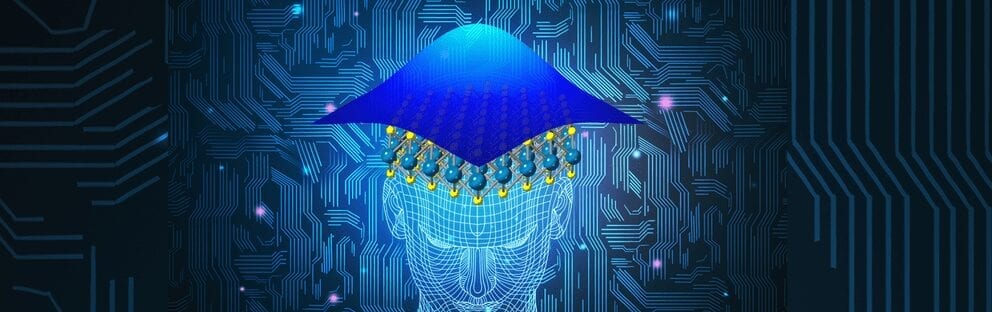

Computers have become smaller and more powerful, and supercomputers and parallel computing have become standard, but computing is about to hit a wall in energy and miniaturization. Now, Penn State researchers have designed a 2D device that can provide more than yes-or-no answers and could be more brainlike than current computing architectures.

“Complexity scaling is also in decline owing to the non-scalability of traditional von Neumann computing architecture and the impending ‘Dark Silicon’ era that presents a severe threat to multi-core processor technology,” the researchers note in today’s (Sept 13) online issue of Nature Communications.

The Dark Silicon era, which is already upon us to some extent, refers to the inability to power up all or most of the devices on a computer chip at once because a single device generates too much heat. Von Neumann architecture, the standard structure of most modern computers, relies on a digital approach of “yes” or “no” answers, in which program instructions and data are stored in the same memory and share the same communications channel.

“Because of this, data operations and instruction acquisition cannot be done at the same time,” said Saptarshi Das, assistant professor of engineering science and mechanics. “For complex decision-making using neural networks, you might need a cluster of supercomputers trying to use parallel processors at the same time — a million laptops in parallel — that would take up a football field. Portable healthcare devices, for example, can’t work that way.”

The solution, according to Das, is to create brain-inspired, analog, statistical neural networks that do not rely on devices that are simply on or off, but provide a range of probabilistic responses that are then compared with the learned database in the machine. To do this, the researchers developed a Gaussian field-effect transistor that is made of 2D materials — molybdenum disulfide and black phosphorus. These devices are more energy efficient and produce less heat, which makes them ideal for scaling up systems.

“The human brain operates seamlessly on 20 watts of power,” said Das. “It is more energy efficient, containing 100 billion neurons, and it doesn’t use von Neumann architecture.”

The researchers note that it isn’t just energy and heat that have become problems, but that it is becoming difficult to fit more in smaller spaces.

“Size scaling has stopped,” said Das. “We can only fit approximately 1 billion transistors on a chip. We need more complexity like the brain.”

The idea of probabilistic neural networks has been around since the 1980s, but it needed specific devices for implementation.

“Similar to the working of a human brain, key features are extracted from a set of training samples to help the neural network learn,” said Amritanand Sebastian, graduate student in engineering science and mechanics.

The researchers tested their neural network on human electroencephalograms (EEGs), graphical representations of brain waves. After being fed many example EEGs, the network could analyze a new EEG signal and determine whether the subject was sleeping.

“We don’t need as extensive a training period or base of information for a probabilistic neural network as we need for an artificial neural network,” said Das.
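To make the idea concrete, here is a minimal sketch of a textbook probabilistic neural network classifier in Python. It is not the researchers' hardware implementation: the function names, the two made-up features per sample, the labels and the `sigma` smoothing width are all assumptions chosen for illustration. Each stored training sample responds to a new input with a Gaussian kernel, the responses are averaged per class, and the result is a graded probability rather than a hard yes or no.

```python
import math


def gaussian_kernel(x, center, sigma):
    """Unnormalized Gaussian response of one stored sample to input x."""
    sq_dist = sum((a - b) ** 2 for a, b in zip(x, center))
    return math.exp(-sq_dist / (2.0 * sigma ** 2))


def pnn_classify(x, training, sigma=0.5):
    """Classify x with a simple probabilistic neural network.

    training maps each class label to a list of feature tuples.
    Returns (most_likely_label, {label: probability}).
    """
    # Pattern + summation layers: average kernel response per class.
    scores = {
        label: sum(gaussian_kernel(x, s, sigma) for s in samples) / len(samples)
        for label, samples in training.items()
    }
    total = sum(scores.values())
    if total == 0.0:
        # Input is far from every sample; fall back to equal probabilities.
        probs = {label: 1.0 / len(scores) for label in scores}
    else:
        probs = {label: score / total for label, score in scores.items()}
    # Decision layer: pick the class with the highest probability.
    best = max(probs, key=probs.get)
    return best, probs


# Hypothetical training data: two invented features per recording.
training = {
    "asleep": [(0.9, 0.2), (0.8, 0.3), (1.0, 0.1)],
    "awake": [(0.2, 0.9), (0.3, 0.8), (0.1, 1.0)],
}

label, probs = pnn_classify((0.85, 0.25), training)
print(label, probs)
```

Note that "training" here amounts to storing the samples, which is one reason this style of network can get by with a comparatively small base of examples.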

The researchers see statistical neural network computing having applications in medicine, because diagnostic decisions are not always 100% yes or no. They also realize that for the best impact, medical diagnostic devices need to be small, portable and use minimal energy.

Das and colleagues call their device a Gaussian synapse. It is based on a two-transistor setup in which the molybdenum disulfide conducts electrons, while the black phosphorus conducts through missing electrons, or holes. The device is essentially two variable resistors in series, and the combination produces a curve with two tails, which matches a Gaussian function.
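A toy calculation shows why two opposing variable resistors in series give a peaked response. The sketch below is not the researchers' device model: it assumes idealized exponential conductances, and the parameter names `v_n`, `v_p` and `slope` are hypothetical. The electron-conducting channel turns on as the gate voltage rises, the hole-conducting channel turns off, and the series combination is limited by whichever channel conducts less, so it peaks in between and falls away on both sides. The resulting curve is bell-shaped and only approximates a Gaussian.

```python
import math


def series_conductance(v_gate, v_n=-0.5, v_p=0.5, slope=0.5):
    """Toy conductance of an n-type and a p-type channel in series.

    All parameters are illustrative assumptions, in arbitrary units.
    """
    g_n = math.exp((v_gate - v_n) / slope)   # n-type: rises with gate voltage
    g_p = math.exp(-(v_gate - v_p) / slope)  # p-type: falls with gate voltage
    # Resistances add in series, so conductances combine reciprocally.
    return 1.0 / (1.0 / g_n + 1.0 / g_p)


# Sweep the gate voltage and locate the peak of the bell-shaped curve.
gate_voltages = [i / 10 for i in range(-30, 31)]
peak_voltage = max(gate_voltages, key=series_conductance)

for v in (-3.0, -1.0, 0.0, 1.0, 3.0):
    print(f"V = {v:+.1f}  G = {series_conductance(v):.4f}")
print("peak at V =", peak_voltage)
```

In this model, shifting `v_n` and `v_p` moves the peak and changing `slope` changes its width, loosely mirroring how a Gaussian is described by a mean and a standard deviation.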

Learn more: Brain-inspired computing could tackle big problems in a small way
