Robots need to know why they are doing a job if they are to work effectively and safely alongside people in the near future. In simple terms, machines need to understand motive the way humans do, rather than perform tasks blindly, without context.

According to a new article by the National Centre for Nuclear Robotics, based at the University of Birmingham, this could herald a profound change for the world of robotics, but one that is necessary.

Lead author Dr Valerio Ortenzi, at the University of Birmingham, argues the shift in thinking will be necessary as economies embrace automation, connectivity and digitisation (‘Industry 4.0’) and levels of human-robot interaction, whether in factories or homes, increase dramatically.

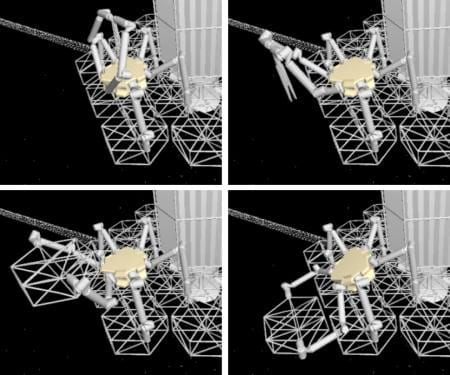

The paper, published in Nature Machine Intelligence, explores the issue of robots using objects. ‘Grasping’ is an action perfected long ago in nature, but one that still represents the cutting edge of robotics research.

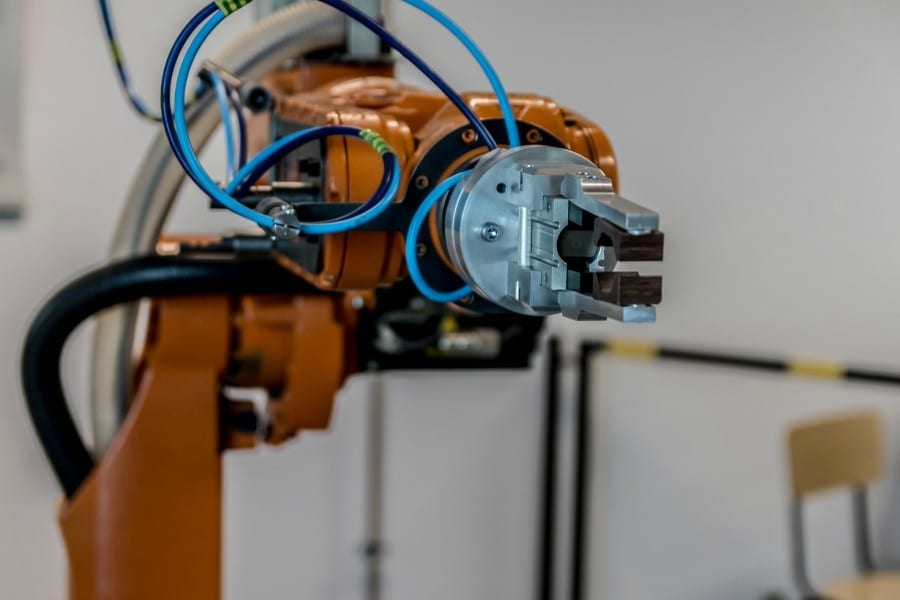

Most factory-based machines are ‘dumb’, blindly picking up familiar objects that appear in pre-determined places at just the right moment. Getting a machine to pick up unfamiliar objects, randomly presented, requires the seamless interaction of multiple, complex technologies. These include vision systems and advanced AI, so the machine can see the target and determine its properties (for example, is it rigid or flexible?), and potentially sensors in the gripper, so the robot does not inadvertently crush an object it has been told to pick up.

Even when all this is accomplished, researchers in the National Centre for Nuclear Robotics highlighted a fundamental issue: what has traditionally counted as a ‘successful’ grasp for a robot might actually be a real-world failure, because the machine does not take into account what the goal is and why it is picking an object up.

The paper cites the example of a robot in a factory picking up an object for delivery to a customer. It successfully executes the task, holding the package securely without causing damage. Unfortunately, the robot’s gripper obscures a crucial barcode, which means the object can’t be tracked and the firm has no idea if the item has been picked up or not; the whole delivery system breaks down because the robot does not know the consequences of holding a box the wrong way.
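The barcode example can be illustrated with a minimal Python sketch. It is not the method from the paper: the `Grasp` class, the stability scores and the region names are all hypothetical, chosen only to show how a grasp that wins on a traditional stability metric can still fail the task, and how a task constraint changes the choice.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Grasp:
    """A candidate grasp (hypothetical model, for illustration only)."""
    name: str
    stability: float      # assumed grasp-quality score in [0, 1]
    covered: frozenset    # regions of the object the gripper would cover


def best_by_stability(grasps):
    """Traditional view: pick the most stable grasp, ignoring the task."""
    return max(grasps, key=lambda g: g.stability)


def best_for_task(grasps, must_stay_clear):
    """Task-aware view: discard grasps that cover a region the task needs
    left clear, then pick the most stable of the remaining grasps."""
    allowed = [g for g in grasps if not (g.covered & must_stay_clear)]
    if not allowed:
        return None  # no candidate grasp satisfies the task
    return max(allowed, key=lambda g: g.stability)


# Made-up candidates for a box with a barcode printed on its top face.
candidates = [
    Grasp("across_label", 0.95, frozenset({"barcode", "top"})),
    Grasp("side_pinch", 0.80, frozenset({"left", "right"})),
    Grasp("corner_hold", 0.60, frozenset({"top"})),
]

print(best_by_stability(candidates).name)
print(best_for_task(candidates, frozenset({"barcode"})).name)
```

With these made-up numbers, the stability-only choice is the grasp that covers the barcode, while the task-aware choice is a slightly less stable grasp that leaves the barcode readable.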

Dr Ortenzi gives other examples, involving robots working alongside people.

“Imagine asking a robot to pass you a screwdriver in a workshop. Based on current conventions the best way for a robot to pick up the tool is by the handle,” he said. “Unfortunately, that could mean that a hugely powerful machine then thrusts a potentially lethal blade towards you, at speed. Instead, the robot needs to know what the end goal is, i.e., to pass the screwdriver safely to its human colleague, in order to rethink its actions.

“Another scenario envisages a robot passing a glass of water to a resident in a care home. It must ensure that it doesn’t drop the glass but also that water doesn’t spill over the recipient during the act of passing, or that the glass is presented in such a way that the person can take hold of it.

“What is obvious to humans has to be programmed into a machine and this requires a profoundly different approach. The traditional metrics used by researchers, over the past twenty years, to assess robotic manipulation, are not sufficient. In the most practical sense, robots need a new philosophy to get a grip.”

Learn more: Robots Need a New Philosophy to Get a Grip
