Smarter, faster algorithm cuts number of steps to solve problems

What if a large class of algorithms used today — from the algorithms that help us avoid traffic to the algorithms that identify new drug molecules — worked exponentially faster?

Computer scientists at the Harvard John A. Paulson School of Engineering and Applied Sciences (SEAS) have developed a completely new kind of algorithm, one that exponentially speeds up computation by dramatically reducing the number of parallel steps required to reach a solution.

The researchers will present their novel approach at two upcoming conferences: the ACM Symposium on Theory of Computing (STOC), June 25-29, and the International Conference on Machine Learning (ICML), July 10-15.

Many so-called optimization problems, which seek the best solution among all possible solutions (mapping the fastest route from point A to point B, for example), rely on sequential algorithms that haven’t changed since they were first described in the 1970s. These algorithms solve a problem step by step, and the number of steps is proportional to the size of the data. This has created a computational bottleneck, leaving lines of inquiry and areas of research that are simply too computationally expensive to explore.

“These optimization problems have a diminishing returns property,” said Yaron Singer, Assistant Professor of Computer Science at SEAS and senior author of the research. “As an algorithm progresses, its relative gain from each step becomes smaller and smaller.”
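The diminishing returns property is commonly formalized as submodularity. As a sketch, assuming the objective is a set function $f$ over a ground set $V$ (the symbols $f$, $V$, $A$, $B$ and $e$ are introduced here for illustration and do not appear in the article):

$$
f(A \cup \{e\}) - f(A) \;\ge\; f(B \cup \{e\}) - f(B) \quad \text{for all } A \subseteq B \subseteq V,\ e \in V \setminus B .
$$

In words, adding an element $e$ to a smaller solution $A$ helps at least as much as adding it to a larger solution $B$ that contains $A$.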

Singer and his colleague asked: what if, instead of taking hundreds or thousands of small steps to reach a solution, an algorithm could take just a few leaps?

“This algorithm and general approach allows us to dramatically speed up computation for an enormously large class of problems across many different fields, including computer vision, information retrieval, network analysis, computational biology, auction design, and many others,” said Singer. “We can now perform computations in just a few seconds that would have previously taken weeks or months.”

“This new algorithmic work, and the corresponding analysis, opens the doors to new large-scale parallelization strategies that have much larger speedups than what has ever been possible before,” said Jeff Bilmes, Professor in the Department of Electrical Engineering at the University of Washington, who was not involved in the research. “These abilities will, for example, enable real-world summarization processes to be developed at unprecedented scale.”

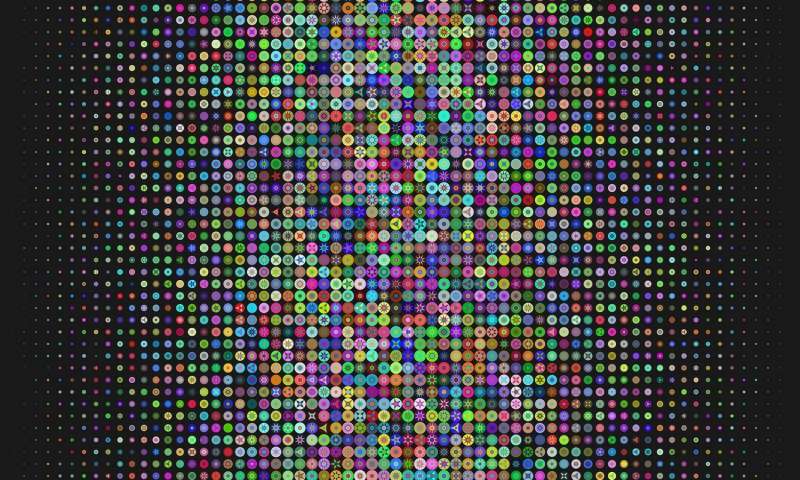

Traditionally, algorithms for optimization problems narrow down the search space for the best solution one step at a time. In contrast, this new algorithm samples a variety of directions in parallel. Based on that sample, the algorithm discards low-value directions from its search space and chooses the most valuable directions to progress towards a solution.

Take this toy example:

You’re in the mood to watch a movie similar to The Avengers. A traditional recommendation algorithm would add one movie at every step, each with attributes similar to those of The Avengers. In contrast, the new algorithm samples a group of movies at random and discards those that are too dissimilar to The Avengers. What’s left is a batch of movies that are diverse (after all, you don’t want ten Batman movies) but similar to The Avengers. The algorithm keeps adding batches at every step until it has enough movies to recommend.

This process of adaptive sampling is key to the algorithm’s ability to make the right decision at each step.
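The toy example can be sketched roughly in Python. This is a simplified illustration of the sample-then-discard idea as the article describes it, not the researchers' actual algorithm. The function `sample_and_prune`, its parameters, the `TARGET` and `TRAITS` data, and the coverage-style value function are all hypothetical.

```python
import random

# Hypothetical toy data: traits we assume the target movie has,
# and traits of six made-up candidate movies.
TARGET = {"superhero", "ensemble", "action", "humor"}
TRAITS = {
    "m1": {"superhero", "action"},
    "m2": {"ensemble", "humor"},
    "m3": {"romance"},
    "m4": {"action"},
    "m5": {"documentary"},
    "m6": {"superhero", "humor"},
}

def similarity_coverage(selection):
    """Number of distinct target traits covered by the selected movies."""
    covered = set()
    for movie in selection:
        covered |= TRAITS[movie] & TARGET
    return len(covered)

def sample_and_prune(items, value, k, sample_size, threshold, seed=0):
    """Add movies in batches: sample a group, discard low-gain members."""
    rng = random.Random(seed)
    chosen = set()
    candidates = set(items)
    rounds = 0
    while len(chosen) < k and candidates:
        rounds += 1
        # Sample a random group from the movies still under consideration.
        group = rng.sample(sorted(candidates), min(sample_size, len(candidates)))
        base = value(chosen)
        # Each gain is measured against the same current solution, so these
        # evaluations are independent and could run in parallel.
        keep = [m for m in group if value(chosen | {m}) - base >= threshold]
        # Every sampled movie leaves the pool: kept ones join the solution,
        # the rest are pruned and never looked at again.
        candidates.difference_update(group)
        chosen.update(keep[: k - len(chosen)])
    return chosen, rounds

picked, rounds = sample_and_prune(TRAITS, similarity_coverage,
                                  k=3, sample_size=3, threshold=1)
print(sorted(picked), "selected in", rounds, "round(s)")
```

With six candidates and a sample size of three, the loop finishes in at most two rounds. Note that this sketch scores each movie against the solution as it stood at the start of the round, so it does not by itself guarantee diversity within a batch.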

“Traditional algorithms for this class of problem greedily add data to the solution while considering the entire dataset at every step,” said Eric Balkanski, graduate student at SEAS and co-author of the research. “The strength of our algorithm is that in addition to adding data, it also selectively prunes data that will be ignored in future steps.”
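For comparison, the greedy baseline Balkanski describes might look like the following minimal sketch. The helper `greedy_select`, the `GENRES` data and the `coverage` objective are illustrative assumptions, not taken from the paper.

```python
# Hypothetical toy data: each made-up movie covers a set of genres.
GENRES = {
    "A": {"action", "sci-fi"},
    "B": {"action"},
    "C": {"comedy"},
    "D": {"sci-fi", "drama"},
}

def coverage(selection):
    """Number of distinct genres covered by the selected movies."""
    covered = set()
    for movie in selection:
        covered |= GENRES[movie]
    return len(covered)

def greedy_select(items, value, k):
    """Pick k items one at a time, always taking the largest marginal gain."""
    chosen = set()
    remaining = set(items)
    rounds = 0
    for _ in range(min(k, len(remaining))):
        base = value(chosen)
        # Every remaining item is re-evaluated against the current solution;
        # ties go to the alphabetically first item.
        best = max(sorted(remaining), key=lambda m: value(chosen | {m}) - base)
        chosen.add(best)
        remaining.remove(best)
        rounds += 1  # one sequential step per item added
    return chosen, rounds

picked, rounds = greedy_select(GENRES, coverage, 2)
print(sorted(picked), rounds)  # prints ['A', 'C'] 2
```

Each round depends on the result of the previous one, so selecting k items takes k sequential rounds, and nothing is ever removed from the pool of candidates except the item just chosen.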

In experiments, Singer and Balkanski demonstrated that their algorithm could sift through a data set containing 1 million ratings from 6,000 users on 4,000 movies and recommend a personalized and diverse collection of movies for an individual user 20 times faster than the state of the art.

The researchers also tested the algorithm on a taxi dispatch problem, where there are a certain number of taxis and the goal is to pick the best locations to cover the maximum number of potential customers. Using a data set of two million taxi trips from the New York City Taxi and Limousine Commission, the adaptive-sampling algorithm found solutions 6 times faster.
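The article does not spell out the exact objective used for the taxi experiment. As a minimal sketch, assume it is a coverage count: the number of distinct customers reachable from the chosen locations. The `REACH` data and both function names below are hypothetical.

```python
# Hypothetical data: which customer IDs each candidate location can reach.
REACH = {
    "loc_a": {1, 2, 3, 4},
    "loc_b": {3, 4, 5},
    "loc_c": {6, 7},
}

def customers_covered(locations):
    """Number of distinct customers reachable from the given locations."""
    covered = set()
    for loc in locations:
        covered |= REACH[loc]
    return len(covered)

def marginal_gain(locations, new_location):
    """Extra customers covered by adding new_location to locations."""
    current = set(locations)
    return customers_covered(current | {new_location}) - customers_covered(current)

gain_alone = marginal_gain(set(), "loc_b")      # loc_b on its own
gain_after = marginal_gain({"loc_a"}, "loc_b")  # loc_b once loc_a is chosen
print(gain_alone, gain_after)  # prints 3 1
```

Under this assumed objective, the same location is worth less once an overlapping location has already been chosen, which is the diminishing returns behavior the researchers exploit.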

“This gap would increase even more significantly on larger scale applications, such as clustering biological data, sponsored search auctions, or social media analytics,” said Balkanski.

Of course, the algorithm’s potential extends far beyond movie recommendations and taxi dispatch optimizations. It could be applied to:

- designing clinical trials for drugs to treat Alzheimer’s, multiple sclerosis, obesity, diabetes, hepatitis C, HIV and more

- finding good representative subsets of gene collections in large, multi-species genetic datasets for evolutionary biology

- designing sensor arrays for medical imaging

- detecting drug-drug interactions from online health forums

Because the algorithm adapts its choices to what each round of sampling reveals, it can make the right decision at each step and overcome the problem of diminishing returns.

“This research is a real breakthrough for large-scale discrete optimization,” said Andreas Krause, professor of Computer Science at ETH Zurich, who was not involved in the research. “One of the biggest challenges in machine learning is finding good, representative subsets of data from large collections of images or videos to train machine learning models. This research could identify those subsets quickly and have substantial practical impact on these large-scale data summarization problems.”

The Singer-Balkanski model and variants of the algorithm developed in the paper could also be used to more quickly assess the accuracy of a machine learning model, said Vahab Mirrokni, a principal scientist at Google Research, who was not involved in the research.

“In some cases, we have a black-box access to the model accuracy function which is time-consuming to compute,” said Mirrokni. “At the same time, computing model accuracy for many feature settings can be done in parallel. This adaptive optimization framework is a great model for these important settings and the insights from the algorithmic techniques developed in this framework can have deep impact in this important area of machine learning research.”

Learn more: ‘Breakthrough’ algorithm exponentially faster than any previous one
