Researchers from NVIDIA, led by Stan Birchfield and Jonathan Tremblay, have developed a first-of-its-kind deep learning system that teaches a robot to complete a task simply by observing a human's actions. The method is designed to improve communication between humans and robots and to further research that will let people work alongside robots seamlessly.

“For robots to perform useful tasks in real-world settings, it must be easy to communicate the task to the robot; this includes both the desired result and any hints as to the best means to achieve that result,” the researchers stated in their research paper. “With demonstrations, a user can communicate a task to the robot and provide clues as to how to best perform the task.”

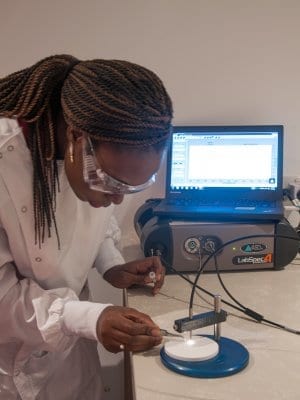

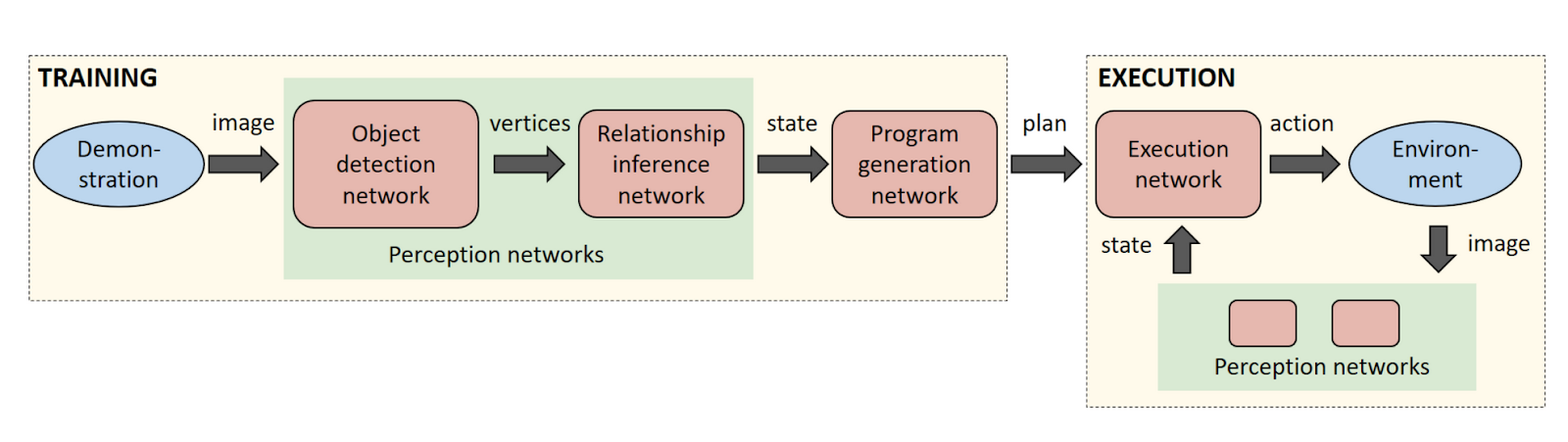

Using NVIDIA TITAN X GPUs, the researchers trained a sequence of neural networks to handle perception, program generation, and program execution. As a result, the robot was able to learn a task from a single demonstration in the real world.

Once the robot sees a task, it generates a human-readable description of the steps necessary to re-perform the task. The description allows the user to quickly identify and correct any issues with the robot’s interpretation of the human demonstration before execution on the real robot.
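To give a feel for what a human-readable description of the steps might look like, here is a minimal sketch in Python. It is not the researchers' program-generation network. It assumes the demonstration has already been reduced to a list of "X on Y" goals, and the function name `plan_steps` and the wording of each step are hypothetical.

```python
def plan_steps(on_relations):
    """Order 'top on bottom' goals so each support is placed before what rests on it.

    Assumes the relations describe physically valid stacks (no cycles).
    """
    supports = {top: bottom for top, bottom in on_relations}
    steps, placed = [], set()

    def place(block):
        # Blocks that rest on nothing listed (e.g. the base) need no step.
        if block in placed or block not in supports:
            return
        place(supports[block])  # settle the supporting block first
        steps.append(f"Place the {block} block on the {supports[block]} block")
        placed.add(block)

    for top, _ in on_relations:
        place(top)
    return steps


# Hypothetical demonstration: green on blue, blue on red.
for line in plan_steps([("green", "blue"), ("blue", "red")]):
    print(line)
```

Because the output is plain text, a user could read it and spot a misinterpreted demonstration before anything runs on the real robot.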

The key to achieving this capability is leveraging the power of synthetic data to train the neural networks. Current approaches to training neural networks require large amounts of labeled training data, which is a serious bottleneck in these systems. With synthetic data generation, an almost infinite amount of labeled training data can be produced with very little effort.

This is also the first time an image-centric domain randomization approach has been used on a robot. Domain randomization is a technique to produce synthetic data with large amounts of diversity, which then fools the perception network into seeing the real-world data as simply another variation of its training data. The researchers chose to process the data in an image-centric manner to ensure that the networks are not dependent on the camera or environment.
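The idea behind domain randomization can be illustrated with a short Python sketch. This is an assumption-laden toy, not the team's data pipeline: the parameter names, value ranges, and the functions `sample_scene` and `make_dataset` are all hypothetical, and a real system would pass each sampled scene to a renderer to produce an image.

```python
import random


def sample_scene(rng):
    """Draw one randomized scene description; every range here is illustrative."""
    return {
        "object_color_rgb": [rng.random() for _ in range(3)],
        "object_position_m": [rng.uniform(-0.5, 0.5) for _ in range(2)],
        "object_yaw_deg": rng.uniform(0.0, 360.0),
        "light_intensity": rng.uniform(0.2, 2.0),
        "num_distractors": rng.randint(0, 10),
        "background_id": rng.randrange(1000),
    }


def make_dataset(n, seed=0):
    """Return n (scene, label) pairs; a seeded generator keeps runs repeatable."""
    rng = random.Random(seed)
    dataset = []
    for _ in range(n):
        scene = sample_scene(rng)
        # The label costs nothing: we chose the object's pose ourselves.
        label = {
            "position_m": scene["object_position_m"],
            "yaw_deg": scene["object_yaw_deg"],
        }
        dataset.append((scene, label))
    return dataset


samples = make_dataset(5)
```

The point of varying colors, lighting, backgrounds, and distractors so widely is that the real world ends up looking like one more sample from the same distribution.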

“The perception network as described applies to any rigid real-world object that can be reasonably approximated by its 3D bounding cuboid,” the researchers said. “Despite never observing a real image during training, the perception network reliably detects the bounding cuboids of objects in real images, even under severe occlusions.”

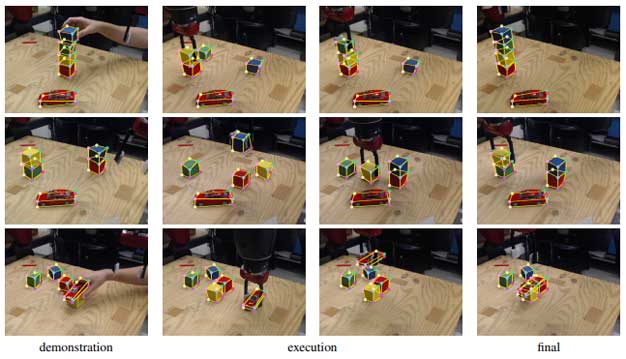

For their demonstration, the team trained object detectors on several colored blocks and a toy car. The system was taught the physical relationship of blocks, whether they are stacked on top of one another or placed next to each other.
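The "stacked" versus "next to" distinction can be made concrete with a simple geometric stand-in, sketched below in Python. The system described in the article infers these relationships from its perception output; this sketch instead applies hand-written rules to cube centers, and the function name `relation`, the 5 cm cube size, and the 1 cm tolerance are assumptions chosen only for illustration.

```python
def relation(a, b, size=0.05, tol=0.01):
    """Classify how cube a sits relative to cube b.

    a and b are cube centers (x, y, z) in meters; size is the cube edge length.
    """
    dx, dy, dz = (a[i] - b[i] for i in range(3))
    horizontal = (dx * dx + dy * dy) ** 0.5
    # Directly above, one cube height higher: a rests on b.
    if horizontal <= tol and abs(dz - size) <= tol:
        return "on_top_of"
    # Same height, one cube width apart: a touches b side by side.
    if abs(dz) <= tol and abs(horizontal - size) <= tol:
        return "next_to"
    return "none"


print(relation((0.0, 0.0, 0.075), (0.0, 0.0, 0.025)))
```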

In the demonstration video, the human operator shows the robot a pair of stacks of cubes. The system then infers an appropriate program and places the cubes in the correct order. Because it takes the current state of the world into account during execution, the system is able to recover from mistakes in real time.
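Recovering from mistakes by checking the current state of the world is essentially closed-loop execution, which the following Python sketch illustrates with a simulated world. It is a toy under stated assumptions, not the researchers' execution network: `execute`, `observe`, `act`, and the dictionary-based world model are hypothetical, and the goal list is assumed to be ordered from the bottom of the stack upward.

```python
def execute(goal, observe, act, max_attempts=10):
    """Re-check the world before every action instead of replaying a fixed script."""
    for _ in range(max_attempts):
        state = observe()
        # Keep only the goals the world does not yet satisfy.
        pending = [(top, bottom) for top, bottom in goal if state.get(top) != bottom]
        if not pending:
            return True
        act(*pending[0])
    return False


# Simulated world: maps each block to whatever it currently rests on.
world = {"blue": "table", "green": "table"}
failures = {"count": 1}


def observe():
    return dict(world)


def act(top, bottom):
    if failures["count"] > 0:
        failures["count"] -= 1  # simulate a dropped block: nothing changes
        return
    world[top] = bottom


done = execute([("blue", "red"), ("green", "blue")], observe, act)
```

Here the first placement fails silently, yet the loop notices on the next observation that the blue block is still on the table and simply tries again.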

The researchers will present their paper and work at the International Conference on Robotics and Automation (ICRA) in Brisbane, Australia, this week.

The team says it will continue to explore the use of synthetic training data for robotic manipulation to extend the capabilities of its method to additional scenarios.

via NVIDIA: Read the research paper
