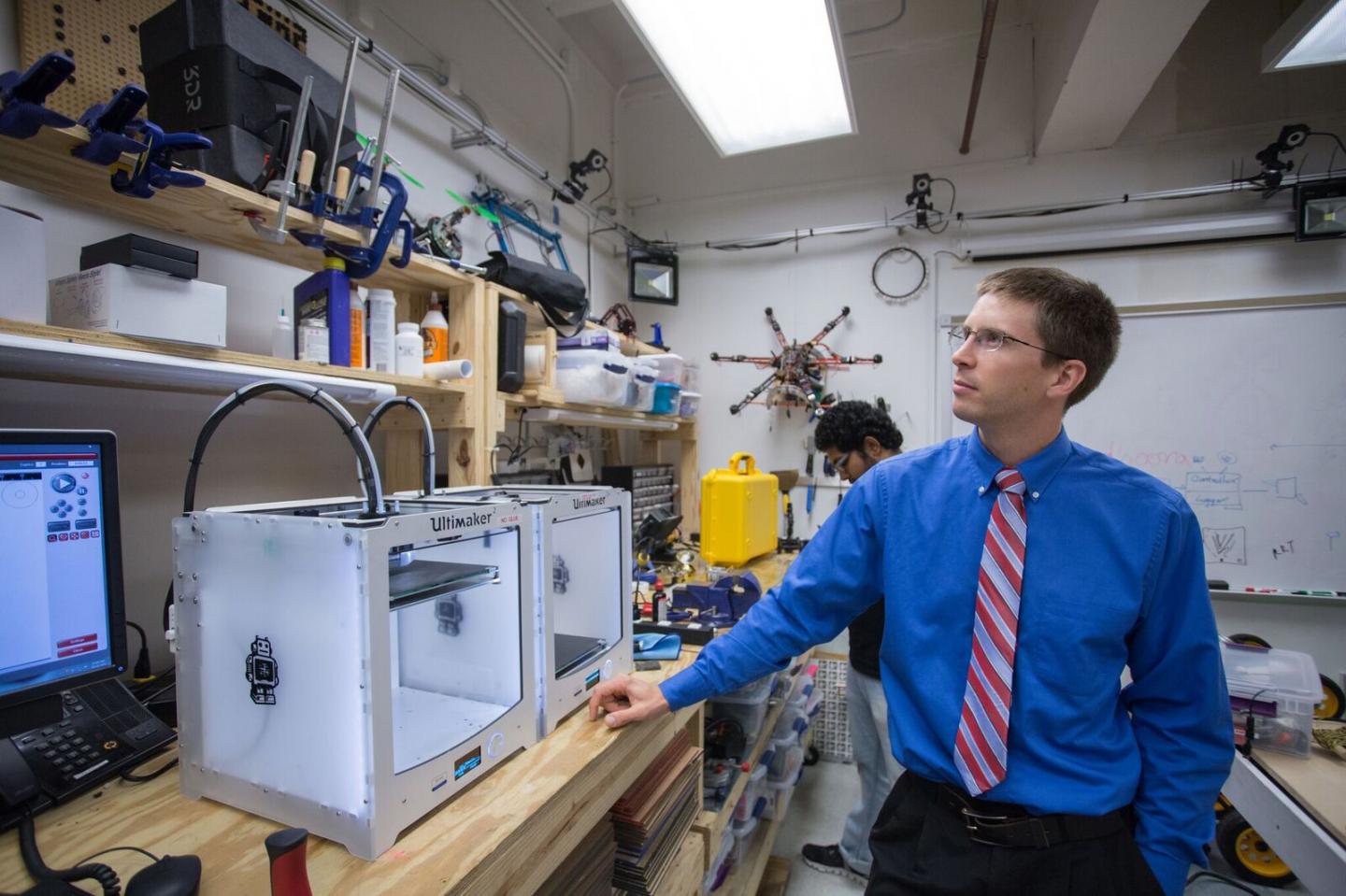

Photo: Jason Dorfman/MIT CSAIL

CSAIL system enables people to correct robot mistakes using brain signals.

For robots to do what we want, they need to understand us. Too often, this means having to meet them halfway: teaching them the intricacies of human language, for example, or giving them explicit commands for very specific tasks.

But what if we could develop robots that were a more natural extension of us and that could actually do whatever we are thinking?

A team from MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL) and Boston University is working on this problem, creating a feedback system that lets people correct robot mistakes instantly with nothing more than their brains.

A feedback system developed at MIT enables human operators to correct a robot’s choice in real time using only brain signals.

Video: CSAIL

Using data from an electroencephalography (EEG) monitor that records brain activity, the system can detect if a person notices an error as a robot performs an object-sorting task. The team’s novel machine-learning algorithms enable the system to classify brain waves in the space of 10 to 30 milliseconds.

While the system currently handles relatively simple binary-choice activities, the paper’s senior author says that the work suggests that we could one day control robots in much more intuitive ways.

“Imagine being able to instantaneously tell a robot to do a certain action, without needing to type a command, push a button or even say a word,” says CSAIL Director Daniela Rus. “A streamlined approach like that would improve our abilities to supervise factory robots, driverless cars, and other technologies we haven’t even invented yet.”

In the current study, the team used a humanoid robot named “Baxter” from Rethink Robotics, the company led by former CSAIL director and iRobot co-founder Rodney Brooks.

The paper presenting the work was written by BU PhD candidate Andres F. Salazar-Gomez, CSAIL PhD candidate Joseph DelPreto, and CSAIL research scientist Stephanie Gil under the supervision of Rus and BU professor Frank H. Guenther. The paper was recently accepted to the IEEE International Conference on Robotics and Automation (ICRA) taking place in Singapore this May.

Intuitive human-robot interaction

Past work in EEG-controlled robotics has required training humans to “think” in a prescribed way that computers can recognize. For example, an operator might have to look at one of two bright light displays, each of which corresponds to a different task for the robot to execute.

The downside to this method is that the training process and the act of modulating one’s thoughts can be taxing, particularly for people who supervise tasks in navigation or construction that require intense concentration.

Rus’ team wanted to make the experience more natural. To do that, they focused on brain signals called “error-related potentials” (ErrPs), which are generated whenever our brains notice a mistake. As the robot indicates which choice it plans to make, the system uses ErrPs to determine if the human agrees with the decision.

“As you watch the robot, all you have to do is mentally agree or disagree with what it is doing,” says Rus. “You don’t have to train yourself to think in a certain way — the machine adapts to you, and not the other way around.”
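The agree-or-disagree loop described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the team’s implementation: the choice labels, the `corrected_choice` and `run_trial` helpers, and the amplitude-threshold `toy_detector` are all hypothetical stand-ins, and the real system relies on trained machine-learning classifiers rather than a fixed threshold.

```python
from typing import Callable, Sequence

# Hypothetical labels for the two targets in a binary sorting task.
CHOICES = ("left", "right")


def corrected_choice(robot_choice: str, errp_detected: bool) -> str:
    """Flip the robot's binary choice if the observer's brain flagged an error."""
    if robot_choice not in CHOICES:
        raise ValueError(f"unknown choice: {robot_choice!r}")
    if errp_detected:
        # With only two options, "wrong" implies the other option is right.
        return CHOICES[1 - CHOICES.index(robot_choice)]
    return robot_choice


def toy_detector(eeg_window: Sequence[float], threshold: float = 1.0) -> bool:
    """Placeholder ErrP detector (assumption): fires on any large-amplitude sample.

    A real detector would be a classifier trained on labeled EEG data.
    """
    return max((abs(sample) for sample in eeg_window), default=0.0) > threshold


def run_trial(
    robot_choice: str,
    eeg_window: Sequence[float],
    detect_errp: Callable[[Sequence[float]], bool],
) -> str:
    """One trial: the robot signals its choice, the EEG window is checked, the choice is finalized."""
    return corrected_choice(robot_choice, detect_errp(eeg_window))
```

The point of the sketch is the control flow: the human never issues a command, and the only input is whether an error signal appeared after the robot announced its intention.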

ErrP signals are extremely faint, which means that the system has to be fine-tuned enough to both classify the signal and incorporate it into the feedback loop for the human operator. In addition to monitoring the initial ErrPs, the team also sought to detect “secondary errors” that occur when the system doesn’t notice the human’s original correction.

“If the robot’s not sure about its decision, it can trigger a human response to get a more accurate answer,” says Gil. “These signals can dramatically improve accuracy, creating a continuous dialogue between human and robot in communicating their choices.”

While the system cannot yet recognize secondary errors in real time, Gil expects the model to be able to improve to upwards of 90 percent accuracy once it can.

In addition, since ErrP signals have been shown to be proportional to how egregious the robot’s mistake is, the team believes that future systems could extend to more complex multiple-choice tasks.

Salazar-Gomez notes that the system could even be useful for people who can’t communicate verbally: a task like spelling could be accomplished via a series of discrete binary choices, which he likens to an advanced form of the blinking that allowed stroke victim Jean-Dominique Bauby to write his memoir “The Diving Bell and the Butterfly.”
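One way to picture spelling through binary choices is to halve the set of candidate letters repeatedly. The Python sketch below is an assumption about how such a scheme might work, not a method described by the researchers; `select_by_binary_choices` and `spell` are hypothetical names, and the `in_first_half` callback stands in for whatever yes/no signal the user provides.

```python
import string
from typing import Callable, List, Sequence, Tuple


def select_by_binary_choices(
    options: Sequence[str],
    in_first_half: Callable[[Sequence[str]], bool],
) -> Tuple[str, int]:
    """Narrow `options` down to one item by asking yes/no questions.

    Returns the selected item and the number of binary choices used.
    """
    candidates: List[str] = list(options)
    if not candidates:
        raise ValueError("options must not be empty")
    questions = 0
    while len(candidates) > 1:
        mid = len(candidates) // 2
        first, second = candidates[:mid], candidates[mid:]
        questions += 1
        # Each answer is a single binary decision: keep the first half or the second.
        candidates = first if in_first_half(first) else second
    return candidates[0], questions


def spell(word: str, alphabet: str = string.ascii_uppercase) -> Tuple[str, int]:
    """Simulate a user spelling `word`, answering truthfully about each half."""
    letters: List[str] = []
    total_questions = 0
    for target in word:
        if target not in alphabet:
            raise ValueError(f"{target!r} is not in the alphabet")
        letter, used = select_by_binary_choices(
            alphabet, lambda half, t=target: t in half
        )
        letters.append(letter)
        total_questions += used
    return "".join(letters), total_questions
```

With a 26-letter alphabet, this halving scheme needs four or five binary choices per letter, which illustrates why even a simple agree-or-disagree signal could in principle support open-ended communication.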

“This work brings us closer to developing effective tools for brain-controlled robots and prostheses,” says Wolfram Burgard, a professor of computer science at the University of Freiburg who was not involved in the research. “Given how difficult it can be to translate human language into a meaningful signal for robots, work in this area could have a truly profound impact on the future of human-robot collaboration.”
